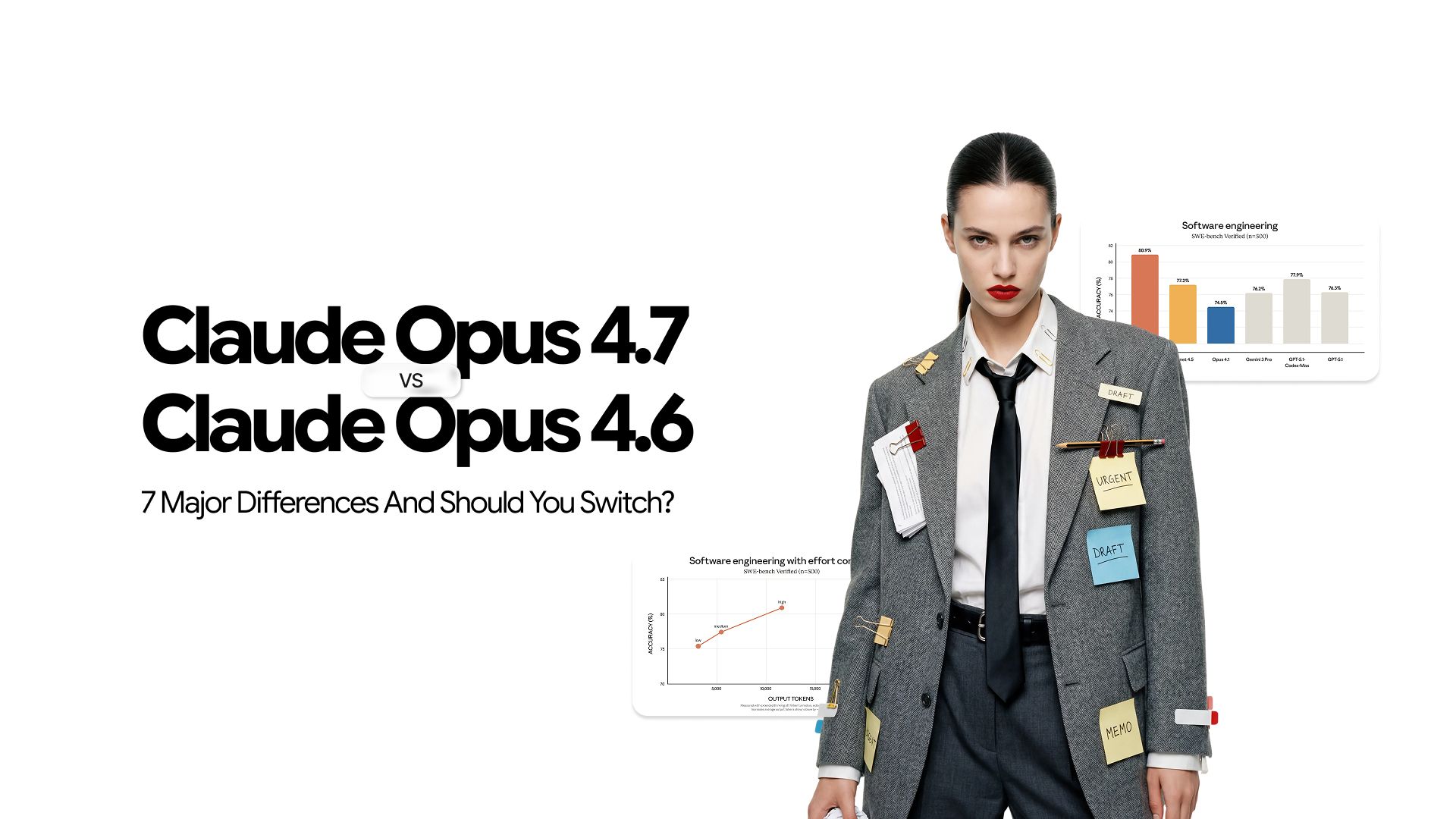

Claude Opus 4.7 vs Opus 4.6: Should You Switch?

Anthropic released Claude Opus 4.7 on April 16, 2026, two months after Opus 4.6 launched. Same model tier, same price point, not a new model family.

Opus 4.7 wins 12 of 14 benchmarks against Opus 4.6 at identical pricing. That sounds straightforward until you factor in the breaking API changes, a new tokenizer, and instruction-following behavior that will produce different results from prompts you did not touch.

The full breakdown of what Opus 4.7 brings covers the model in depth.

This article is the direct head-to-head: what actually changed, what it means in practice, and whether the switch is worth it for your situation.

Quick Verdict

Upgrade now if you run production coding agents, use Claude for computer use or vision-heavy workflows, or are building multi-session agents that need persistent memory across runs.

Upgrade after prompt review if your pipelines use temperature, top_p, or top_k, or your prompts rely on loose, interpretive language that Opus 4.6 handled flexibly.

Stay on Opus 4.6 temporarily if web research and BrowseComp-style tasks are your primary use case (this is the one area where Opus 4.6 outperforms 4.7) or if you have a compliance-approved 4.6 deployment that has not been validated against 4.7's new cybersecurity safeguards.

7 Major Differences Between Claude Opus 4.7 and Opus 4.6

Here is where the gap is real and where it matters in production.

1. Coding Performance

This is the headline gap, and the benchmarks make it concrete.

Official scores vs. Opus 4.6:

- SWE-bench Verified: 87.6% vs. 80.8%

- SWE-bench Pro: 64.3% vs. 53.4%, ahead of GPT-5.4 at 57.7% and Gemini 3.1 Pro at 54.2%

- CursorBench: 70% vs. 58%

- Terminal-Bench 2.0: 69.4% vs. 65.4% — an improvement, though GPT-5.4 still leads at 75.1% on terminal and command-line work

The real-world results reinforce the benchmark story. On Rakuten-SWE-Bench, Opus 4.7 resolves 3x more production tasks than Opus 4.6. On a 93-task internal coding benchmark, it delivered a 13% resolution lift, including four tasks neither Opus 4.6 nor Sonnet 4.6 could solve.

The gains are concentrated in specific areas where Opus 4.6 had real production limitations:

- Multi-file edits: Opus 4.7 handles changes spanning multiple files more coherently. Opus 4.6 sometimes lost track of context across files in long sessions.

- Test generation: When asked to write tests alongside implementation code, Opus 4.7 produces tests that are more likely to catch the edge cases they're meant to catch.

- Debugging with incomplete information: Given a stack trace and limited context, Opus 4.7 is better at identifying root causes rather than guessing at surface-level symptoms.

The behavioral shift underneath these numbers is what matters most operationally. Opus 4.7 catches its own logical faults during the planning phase and verifies outputs before reporting back. Opus 4.6 did not do this reliably. Independent testing from enterprise engineering teams confirms the production coding quality gap is real and consistent.

Verdict: If coding agents, code review, or multi-file engineering work is your primary use case, the upgrade is worth it on this difference alone.

2. Vision Resolution

Opus 4.6 accepted images up to 1,568px at 1.15 megapixels. Opus 4.7 accepts images up to 2,576px at 3.75 megapixels. That is more than three times the pixel count.

Beyond resolution, two technical changes matter for anyone doing computer use:

- Coordinate mapping: Opus 4.6 coordinates did not map 1:1 with actual pixels, causing missed clicks and misread layouts. Opus 4.7 coordinates are 1:1 with actual pixels, removing the scale-factor math entirely.

- Visual acuity: XBOW saw this jump from 54.5% on Opus 4.6 to 98.5% on Opus 4.7. Their single biggest Opus pain point, as they described it, effectively disappeared.

On OSWorld-Verified, which tests computer use in a live operating system, Opus 4.7 scores 78.0%, up from 72.7%.

Verdict: For anyone using Claude for computer use, screenshot reading, diagram analysis, or document processing, Opus 4.6 and Opus 4.7 are functionally different models on vision tasks.

3. Instruction Following

Opus 4.6 interpreted instructions loosely. It filled in gaps, generalized from one item to another, and applied reasonable inference to ambiguous requests. For many users, this felt helpful.

Opus 4.7 takes instructions literally. What you write is what it does. It will not silently generalize an instruction from one item to another, and it will not infer requests you did not explicitly make.

This is a genuine strength for standardized enterprise workflows where predictability and auditability matter. It is a migration risk for any team whose prompts were written with 4.6's interpretive behavior in mind. Prompts using soft language like "consider" or "you might," or bullet lists framed as suggestions rather than requirements, will behave differently on 4.7 without any code changes.

Verdict: Teams with tightly written, purpose-built prompts benefit immediately. Teams with looser, conversational prompts need to audit before switching.

4. Agentic Reliability and Tool Use

Opus 4.6 had noted loop issues on some production deployments. Agents would stall, loop indefinitely on edge cases, or stop cold when tool calls failed.

Opus 4.7 addresses this directly. It pushes through tool failures that stopped Opus 4.6, executes through ambiguous states rather than stopping for clarification, and is the first Claude model to pass implicit-need tests.

On MCP-Atlas, the closest thing to a real production agent benchmark, Opus 4.7 scores 77.3% against Opus 4.6's 75.8%. On complex multi-step workflows, it delivered a 14% lift over Opus 4.6 at fewer tokens and a third of the tool errors.

For context on where both models sit against the broader competitive landscape, the frontier model comparison covers how Claude stacks up against GPT and Gemini on agentic benchmarks in detail.

Verdict: For long-running agents in production, the reliability improvements reduce the maintenance overhead that makes complex agentic workflows expensive to operate.

5. Memory Across Sessions

Opus 4.6 did not reliably persist or reuse notes across multi-session work. Agents running tasks over multiple sessions effectively started fresh each time, requiring context to be re-established at the beginning of every run.

Opus 4.7 writes to and reads from file system-based memory more reliably. When an agent maintains a scratchpad or notes file between sessions, Opus 4.7 uses those notes to reduce cold-start context overhead on subsequent tasks. The research-agent benchmark results reflect this directly:

- General Finance module (the largest of six): 0.813 vs. 0.767 on Opus 4.6

- Overall consistency: Top-ranked across all six modules for sustained long-context performance

- Deductive logic: Solid results in an area where Opus 4.6 had notable gaps

Verdict: For multi-day engineering tasks or long-horizon research workflows that span multiple sessions, this removes a friction point Opus 4.6 never fully solved.

6. New Controls – xhigh Effort and Task Budgets

Neither of these existed in Opus 4.6.

The xhigh effort level sits between high and max, giving developers finer control over the reasoning-cost tradeoff. Claude Code now defaults to xhigh for all plans. For most coding and agentic use cases, xhigh provides more thinking depth than high without the full token cost of max.

Task budgets, currently in public beta, let you set an advisory token cap across an entire agentic loop rather than per request. Opus 4.6 had no equivalent. Without a task budget, a long-running agent can consume significantly more tokens than the task required. With one, the model self-moderates toward completion within the budget.

The practical cost implication: early-access testing found that low-effort Opus 4.7 matches medium-effort Opus 4.6 on quality. The same completed work uses fewer tokens at a cheaper effort tier, which partially offsets the new tokenizer's higher per-token count.

Verdict: These controls make Opus 4.7 more manageable at scale than Opus 4.6 was, particularly for teams running high-volume or long-running agentic workloads.

7. Breaking API Changes

This is the one difference that requires deliberate migration work rather than a model ID swap.

Three things that worked in Opus 4.6 return errors in Opus 4.7:

- Extended thinking budgets removed. Setting

thinking: {"type": "enabled", "budget_tokens": N}returns a 400 error. Adaptive thinking is now the only supported thinking-on mode and must be explicitly enabled withthinking: {"type": "adaptive"}. - Sampling parameters removed. Setting

temperature,top_p, ortop_kto any non-default value returns a 400 error. Any pipeline using these parameters for determinism or creativity control needs code changes before switching. - Thinking content omitted by default. Thinking blocks appear in the response stream but the

thinkingfield is empty unless you explicitly setdisplay: "summarized". Products that streamed reasoning to users will show a blank until this is configured.

The new tokenizer compounds the migration consideration. The same input can map to 1.0 to 1.35x more tokens on Opus 4.7 than on Opus 4.6, varying by content type. Static token budgets built around 4.6 need re-measuring.

Verdict: None of these are optional. Every team migrating from Opus 4.6 needs to audit for these three changes before flipping the model flag in production.

Pricing

Per-token pricing is identical across both models: $5 per million input tokens and $25 per million output tokens, available across the Anthropic API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry.

The cost story lives at the task level, not the token level. To make the tokenizer impact concrete: a team running 1,000 coding tasks per month on Opus 4.6 at an average of 10,000 tokens per task spends $50 on input. On Opus 4.7 at a 1.25x tokenizer multiplier, that same traffic costs $62.50, which is a 25% increase before any efficiency gains are factored in. For teams at higher volume, that gap compounds quickly.

The offset is real but requires deliberate configuration. Low-effort Opus 4.7 matches medium-effort Opus 4.6 on quality, meaning teams that drop their effort setting by one tier can recover a meaningful portion of the tokenizer increase. Task budgets cap agentic loop spend in a way that had no equivalent on 4.6.

The net effect varies by workload. Measure on your specific traffic before assuming a fixed cost increase. The tokenizer impact is notably larger for multilingual and structured content than for standard English prose.

Should You Switch?

The honest answer is: it depends on what you are running and how your prompts are written. The benchmark wins are real, but the breaking changes mean the upgrade is not risk-free without preparation. Here is how to think about your specific situation.

Switch now if:

- You run production coding agents, automated code review, or multi-file engineering workflows

- You use Claude for computer use, screenshot analysis, or any vision-heavy workflow

- Your prompts are tightly written with explicit instructions and defined workflows

- You are building multi-session agents that depend on persistent memory across runs

Switch after prompt review if:

- Your prompts use soft, interpretive language that Opus 4.6 was flexible about

- Any pipeline in your stack uses

temperature,top_p, ortop_k - You built around extended thinking budgets and need time to migrate to adaptive thinking

Stick with Opus 4.6 temporarily if:

- Web research and BrowseComp-style tasks are your primary use case. Opus 4.6 scores 84.0% vs 4.7's 79.3%, a real regression worth factoring in.

- You have a compliance-approved Opus 4.6 deployment that has not been validated against Opus 4.7's new cybersecurity safeguards under Project Glasswing. The automated detection and blocking behavior is new and may produce unexpected refusals in workflows that passed on 4.6.

- You are mid-deployment and cannot absorb a prompt audit right now.

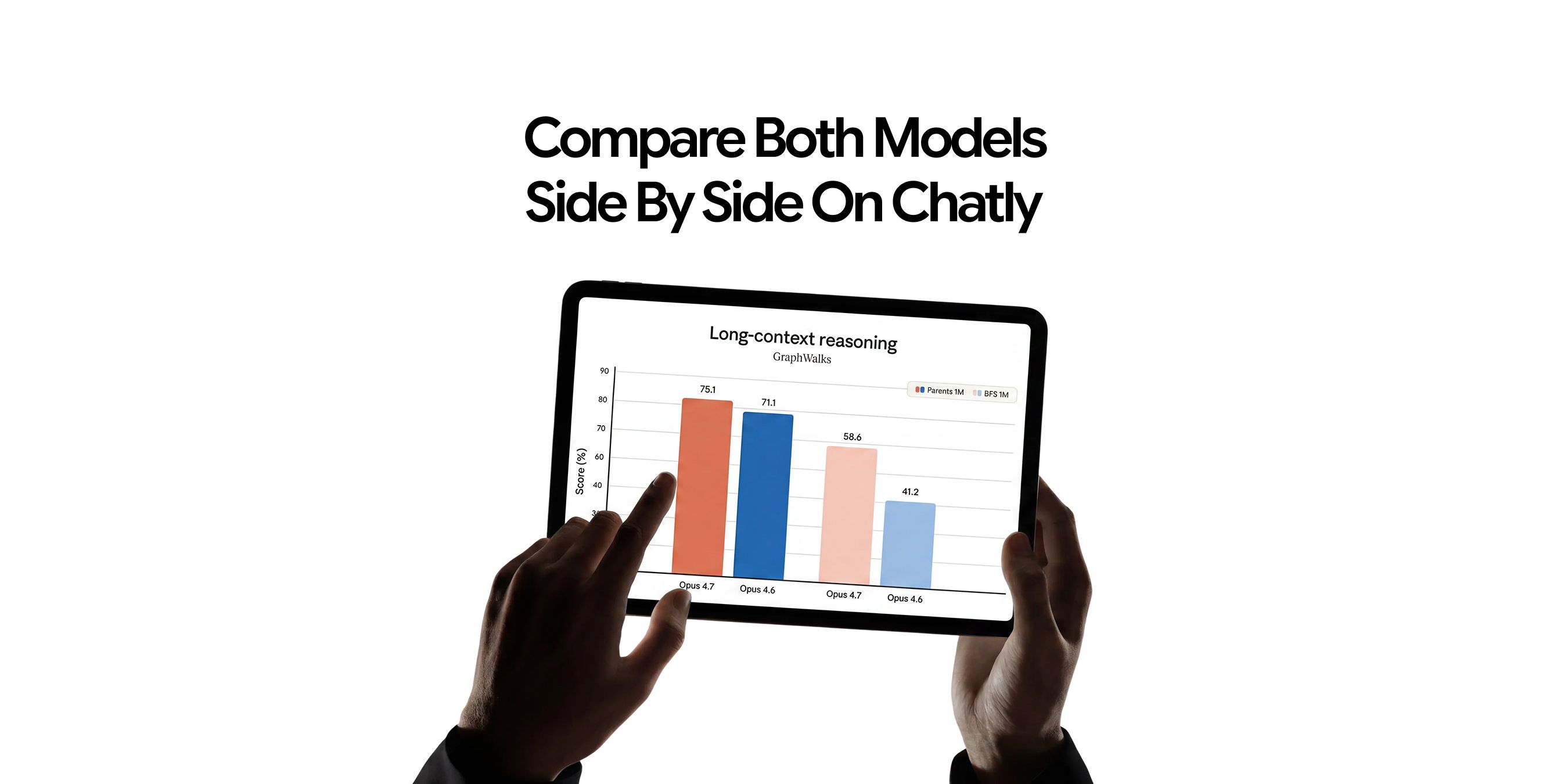

The lowest-friction way to test Opus 4.7 against your actual workflows before committing to a full API migration is through Chatly, where both models are accessible without any API configuration. If you want to try the model without API setup first, free access options are also available.

Start Using Claude Opus 4.5 Today!

Opus 4.7 is a better model than Opus 4.6 on almost every benchmark that matters for production use, at the same price. The coding gains are real, the vision upgrade is significant, and the agentic reliability improvements reduce the maintenance overhead that makes autonomous workflows expensive to run at scale.

The upgrade is not frictionless. The breaking API changes are real, the new tokenizer affects cost calculations, and literal instruction following will produce different results from prompts you did not deliberately change. Plan for that work before flipping the flag.

For teams doing complex coding, agentic work, or vision-heavy workflows, the answer is yes.

Frequently Asked Question

Still need more information? Find answers to internet's most burning questions.

More topics you may like

11 Best ChatGPT Alternatives in 2026 (Tested, Compared & Priced)

Muhammad Bin Habib

Claude Opus 4.5: The Definitive Guide to Features, Use Cases, Pricing

Faisal Saeed

Claude Haiku 4.5 vs Claude Sonnet 4.5: The Ultimate Comparison Guide

Faisal Saeed

Cost Efficiency in Claude Opus 4.5: Understanding Tokens, Effort Levels & When It’s Worth It

Faisal Saeed

9 Best AI Tools for Marketers in 2026

Umaima Shah