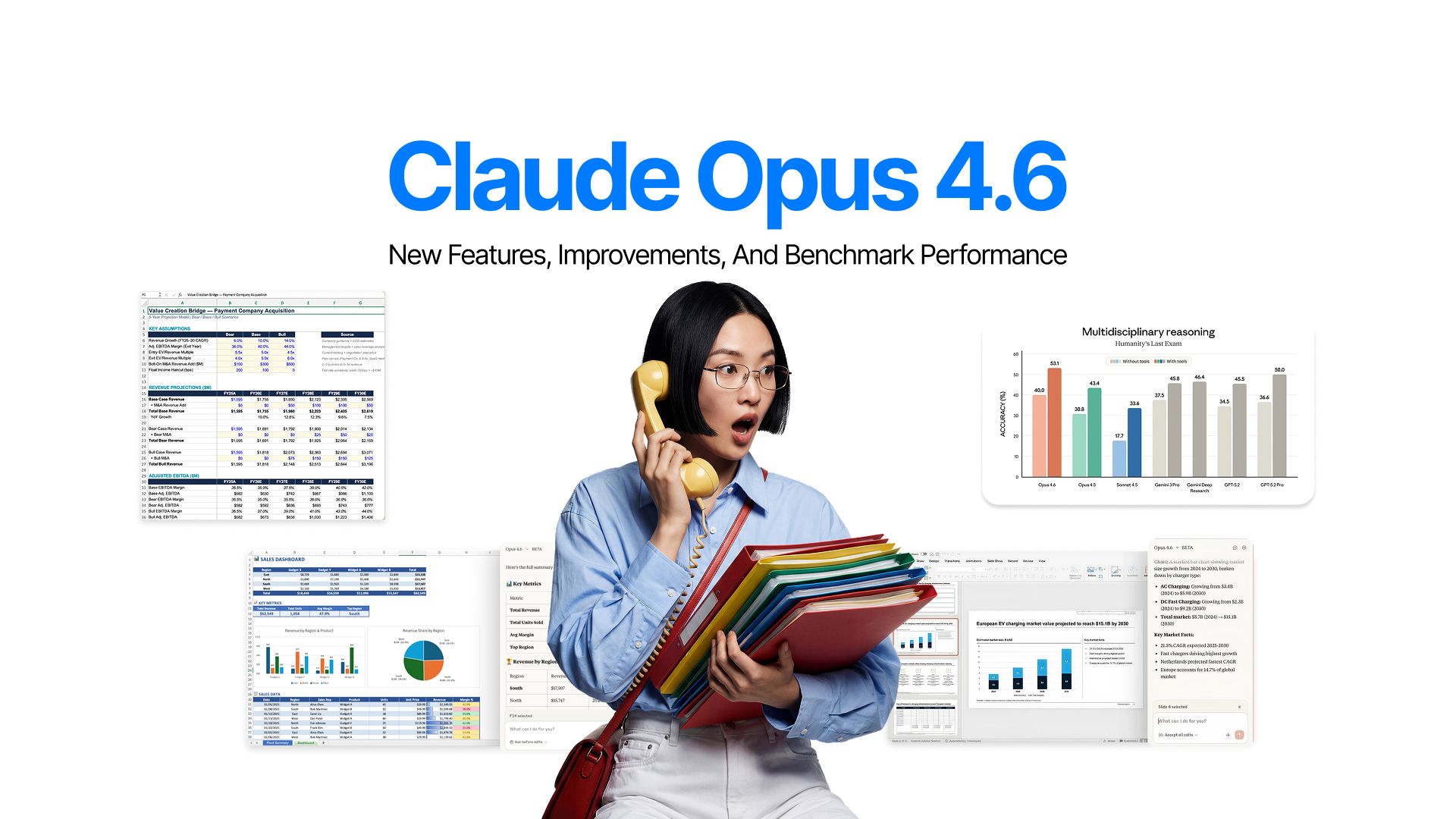

Claude Opus 4.6: New Features, Improvements, and Benchmark Performance

A few days ago, we published a blog about the expected release of GPT-5.3 from OpenAI and all the rumored features and improvements that are being discussed online.

Apart from GPT-5.3, people were also expecting news about Sonnet 5 “Fennec” and even newer Grok models. But there was little to no discussion about any potential Opus upgrade, since it came out just a couple of months ago on November 24, 2025.

However, that did not stop Anthropic from releasing a surprise upgrade.

On February 5, 2026, Anthropic released Claude Opus 4.6. And as it usually goes on Reddit, people went crazy and got on their computers to test the model..

What makes this release noteworthy isn't just the benchmark scores. According to Anthropic, strong code on this model is able to review its own work and catch every minor mistake and fix it before providing the final results. That’s impressive.

Early testers report Claude reviewing its own code with the critical eye of a senior engineer.

We will leave the testing and judgement to you. But let us tell you about every new feature and improvement Opus 4.6 boasts.

What's New in Claude Opus 4.6

The biggest improvement in Opus 4.6 is something that people have come to expect of Claude models: strong coding capabilities. But this time, it will be even easier for you. You do not need to review its code over and over because Opus 4.6 will do that on its own.

The model introduces adaptive thinking mode, replacing the binary on-off toggle. Claude can now dynamically decide when and how deeply to think about a problem, with four effort levels from "low" to "max."

Context handling received a massive upgrade:

- Opus 4.6 is the first Opus-class model with a 1 million token context window in beta.

- Combined with the new 128K output token limit (double the previous 64K), it can consume entire large codebases without hitting limits.

- Context compaction enables effectively infinite conversations by automatically summarizing earlier parts when approaching the window limit.

Technical Specifications and Features

The engineering details reveal why Opus 4.6 represents such a leap forward in practical AI capabilities, particularly for developers and knowledge workers tackling complex, multi-step problems.

1. Core Specifications

The model supports up to 128K output tokens. Doubling previous capacity and enabling longer thinking budgets without chaining multiple requests. Knowledge cutoff is January 2025.

2. API and Platform Features

Adaptive thinking evaluates each problem and decides how much cognitive effort to apply. Instead of binary "thinking on/off," Opus 4.6 applies contextual intelligence.

The effort parameter offers four levels:

- Low

- Medium

- High (default)

- Max

This fine-grained control lets developers optimize the intelligence-speed-cost tradeoff. If Opus 4.6 overthinks straightforward tasks, dialing down to medium reduces latency and cost.

Context compaction solves hitting the context window mid-conversation. When approaching the threshold, the API automatically summarizes earlier parts while preserving critical information.

Data residency controls let enterprises specify inference location via inference_geo parameter ("global" or "us"). US-only inference costs 1.1× standard pricing for data sovereignty compliance.

3. Breaking Changes and Deprecations

Prefilling assistant messages is no longer supported as the requests return 400 errors. Use structured outputs, system prompts, or output_config.format instead.

The thinking: {type: "enabled", budget_tokens: N} syntax is deprecated. Migrate to thinking: {type: "adaptive"} with the effort parameter for future compatibility.

Benchmarks and Performance Analysis

Benchmark results reveal Opus 4.6's capabilities across coding, reasoning, and knowledge work. And to think we still have Sonnet 5 to come. Impressive, right?

1. Industry-Leading Results

There are major improvements across the board. You name the category, Opus 4.6 outperforms its rivals in most of the,.

a. Terminal-Bench 2.0

- Opus 4.6: 65.4%

- Gemini 3 Pro: 56.2%

- GPT-5.2: 64.7%

This benchmark tests agentic coding in realistic system environments with multi-step autonomous tasks.

b. GDPval-AA

It measures economically valuable knowledge work across finance, legal, and professional domains.

- Opus 4.6: 1606

- Gemini 3 Pro: 1195

- GPT-5.2: 1462

c. Humanity's Last Exam (with tool use)

This benchmark tests expert-level reasoning across multiple disciplines. Opus 4.6 leads all frontier models, demonstrating reasoning improvements extend beyond coding to any complex analytical task.

- Opus 4.6: 53.1%

- Gemini 3 Pro: 45.8%

- GPT-5.2: 50.0%

d. SWE-bench Verified

This showed another area of Opus 4.6 dominance. BrowseComp proved Opus 4.6 best at locating hard-to-find online information which is critical for research and synthesis.

- Opus 4.6: 80.8% (Opus 4.5 still dominates with 80.9%)

- Gemini 3 Pro: 76.2%

- GPT-5.2: 80.0%

2. Long-Context Performance

MRCR v2 (8-needle, 1M tokens) scored Opus 4.6 at 76% versus Sonnet 4.5's 18.5%. This is a fundamental capability shift in handling buried information.

"Context rot" (performance degradation in long conversations) was a major problem in previous AI assistants. Opus 4.6's architecture directly addresses this, maintaining peak performance across vastly more context with fewer hallucinations and better information retrieval.

3. Specialized Domain Performance

Life sciences tests (computational biology, structural biology, organic chemistry, phylogenetics) showed nearly 2× improvement over Opus 4.5.

BigLaw Bench scored 90.2% with 40% perfect scores and 84% above 0.8 quality. Legal reasoning demands precision and detail synthesis which is exactly where Opus 4.6's improvements shine.

Cybersecurity evaluations proved the model finds real vulnerabilities in actual codebases. Anthropic discovered 500+ zero-day vulnerabilities in open-source software and responsibly disclosed them.

Claude Opus 4.6 vs Claude Opus 4.5 Comparison

Comparing Opus 4.6 against its predecessor clarifies when upgrading makes sense and what concrete improvements you'll see.

1. Performance Improvements

Coding quality shows the most dramatic improvement.

a. Terminal-Bench 2.0

- Opus 4.6: 65.4%

- Opus 4.5: 59.8%

b. SWE-bench Verified

- Opus 4.6: 80.8%

- Opus 4.5: 80.9%

Opus 4.6 plans better upfront, breaking problems into logical steps before implementation. When mistakes happen, it identifies and corrects them with minimal human intervention.

Context handling improved 4× on long-context retrieval. This means maintaining awareness of architectural patterns, naming conventions, and dependencies across tens of thousands of lines without losing critical details.

Financial analysis tasks showed 5% improvements. For professionals using Claude for market data or financial insights, this represents the difference between useful assistance and reliable analysis.

Agentic tasks maintained focus through hundreds of tool calls and multi-hour sessions where Opus 4.5 would drift or lose track of goals.

2. New Capabilities

The 1 million token context window fundamentally changes what's possible. Upload entire documentation sets, massive datasets, or comprehensive research libraries for holistic analysis rather than chunking.

Standard Claude Opus 4.5 offered 200K tokens.

Output tokens doubled from 64K to 128K, removing frustrating mid-response boundaries. Combined with higher-quality thinking, Opus 4.6 tackles more ambitious single-turn tasks.

Agent Teams in Claude Code enables multiple specialized agents working in parallel. Early access users reported 16-agent teams building C compilers. This requires careful coordination across lexing, parsing, semantic analysis, and code generation.

Real-World Applications and Use Cases

Benchmark improvements translate into concrete capabilities already transforming how developers and knowledge workers tackle challenging tasks.

1. Coding and Development

Early access partners report giving Opus 4.6 task sequences across their entire stack and letting it run autonomously. Something impossible with previous models.

Improved judgment means you do not have to micromanage every response..

SentinelOne's Chief AI Officer reported the model handled multi-million-line migrations "like a senior engineer."

Multi-step debugging showcases self-correction.

Rather than hoping fixes work, Opus 4.6 reviews its changes, identifies potential issues, and refines solutions before presenting them.

2. Enterprise Knowledge Work

Financial analysis requires synthesizing multi-source information and drawing defensible conclusions.

Box's Head of AI reported 10% performance lifts reaching 68% versus 58% baseline. All this comes with near-perfect technical domain scores.

Legal document analysis benefits from improved long-context reasoning. The model holds precedents, contractual clauses, and regulatory requirements in context while analyzing new documents.

Thomson Reuters emphasized "meaningful leaps in long-context performance," noting consistency improvements strengthen expert-grade systems professionals can trust. For knowledge work at scale, consistency matters as much as peak capability.

3. Cybersecurity Applications

NBIM security researchers ran 40 investigations comparing Opus 4.6 against Claude 4.5 models in blind rankings. Opus 4.6 won 38 out of 40 times—95% success rate. Each investigation ran end-to-end with up to 9 subagents and 100+ tool calls.

Zero-day vulnerability discovery demonstrates both technical skill and responsible deployment. Using Opus 4.6 to find and disclose vulnerabilities in open-source software helps level the playing field between attackers and defenders.

Product Ecosystem Updates

Anthropic built an ecosystem of tools and integrations letting Opus 4.6's capabilities shine across different contexts.

1. Claude Code Enhancements

Agent Teams orchestrates multiple agents working in parallel.

Rather than linear single-agent work, independent subtasks run simultaneously (one reviewing documentation, another implementing features, a third writing tests) while maintaining overall coherence.

Background execution lets you start complex coding tasks and switch contexts without interruption. This "set and forget" capability proves crucial for multi-hour tasks with coordinating agents.

2. Office Integration

Claude in Excel received substantial upgrades for complex spreadsheet tasks. The model ingests unstructured data and infers correct structure without explicit guidance, plans before acting, and handles multi-step transformations previous versions would break apart.

Claude in PowerPoint launched in a research preview for presentation creation. The model reads layouts, fonts, and slide masters to maintain brand consistency. It makes it simple to build from templates or generate full decks from descriptions.

Combined with Excel, you can process data then visualize it coherently.

Getting Started with Claude Opus 4.6

Understanding access options and best practices helps extract maximum value from Opus 4.6's capabilities.

1. Access Options

Consumer users access Opus 4.6 through claude.ai on Pro ($20/month), Max ($40/month), Team, or Enterprise plans. The web interface provides full features including adaptive thinking, though without all granular API controls.

API developers use model ID claude-opus-4-6 through Anthropic's API, AWS Bedrock, Google Cloud Vertex AI, or Microsoft Foundry. This provides complete control over thinking modes, effort levels, context compaction, and advanced features at $5/$25 per million input/output tokens.

2. Best Practices

Choose Opus 4.6 when task complexity, accuracy requirements, or context length demand the highest capability. For straightforward tasks, Sonnet 4.5 or Haiku 4.5 offer better speed and cost efficiency.

Start with the default "high" effort, then dial down to "medium" or "low" if overthinking occurs on simpler tasks. Reserve "max" for genuinely difficult problems needing peak performance regardless of cost or latency.

Use thinking: {type: "adaptive"} by default—letting the model decide when deep reasoning helps. This eliminates binary thinking toggle guesswork while delivering better cross-task results.

Set context compaction thresholds based on typical conversation length. 50K tokens works well for most applications, preserving critical information while clearing space for continued progress.

Conclusion

Claude Opus 4.6 represents genuine advancement in AI capabilities, particularly for coding, knowledge work, and long-context reasoning.

For developers building agentic systems, researchers working with complex information, and knowledge workers tackling analytical tasks, Opus 4.6 delivers meaningful improvements. Enhanced self-correction, better long-context handling, and improved judgment make it genuinely more trustworthy for autonomous work.

Mixed community reception highlights important AI development tensions. Optimizing for every use case simultaneously may be impossible. Technical reasoning improvements might trade off with creative writing style, at least short-term.

Try Claude Opus 4.6 through the API or on Claude to experience improvements firsthand. The best way to understand how it compares is hands-on experimentation with your specific workflows.

Frequently Asked Question

Dive deeper into Claude Opus 4.6's extraordinary capabilities and what people have to say about them.

More topics you may like

Claude Opus 4.5: The Definitive Guide to Features, Use Cases, Pricing

Faisal Saeed

Claude Haiku 4.5 vs Claude Sonnet 4.5: The Ultimate Comparison Guide

Faisal Saeed

Cost Efficiency in Claude Opus 4.5: Understanding Tokens, Effort Levels & When It’s Worth It

Faisal Saeed

Gemini 3 Flash vs Gemini 3 Pro: Key Performance Differences

Faisal Saeed

Gemini 3 Pro Overview: Features, Pricing, and Use Cases

Faisal Saeed