GPT-5.4: OpenAI's First Truly Unified Frontier Model

For the past year, using OpenAI's models well meant knowing which one to reach for. GPT-5.2 for writing and reasoning. GPT-5.3 Codex for code. Both were excellent at their jobs, and neither was built to do both at once.

That tradeoff is now gone.

GPT-5.4, released March 5, 2026, merges the coding precision of GPT-5.3 Codex with the reasoning depth of GPT-5.2, adds a 1 million token context window and native computer use, and ships as a single model. No switching. No compromising.

There are two versions:

- GPT-5.4 Thinking for advanced reasoning tasks

- GPT-5.4 Pro for high-performance research and enterprise use

Thinking is available to all paid ChatGPT subscribers. Pro remains gated to ChatGPT Pro and Enterprise accounts.

This post breaks down what actually changed, what the benchmarks mean in practice, and who should be paying close attention.

The Unification Story

To understand why GPT-5.4 matters, it helps to understand the problem it solves.

OpenAI spent much of 2025 shipping specialist models.

- GPT-5.2 was their reasoning and writing flagship

- GPT-5.3 Codex was engineered from the ground up for software development

Both were strong in isolation, but neither covered the other's ground.

This created real friction for teams trying to build with AI. Developers who needed both coding help and smart document generation had to manage two models and two prompting styles, and accept the gaps in between.

Anthropic had already solved this with Claude Opus 4.6. Opus combined world knowledge, coding quality, and a consistent personality into one model. GPT-5.4 is OpenAI's direct answer to that strategy.

The broader signal is worth noting. Both Anthropic and OpenAI are converging on the same thesis: the future of frontier AI is a unified model that handles the full surface area of knowledge work, not a collection of specialists. GPT-5.4 is OpenAI's clearest commitment to that direction yet.

What Actually Changed: Five Capabilities Worth Understanding

These are not incremental tweaks. Each one closes a gap that existed in every previous OpenAI model.

Native Computer Use

Previous computer-use workflows required spinning up a separate environment and routing tasks through a different model. GPT-5.4 removes that layer entirely. It directly issues mouse clicks, keyboard inputs, and browser commands against a live operating system.

In practice, this means the model can open Gmail, sort your inbox, draft replies, schedule meetings, and send emails without a single line of integration code. Token use on computer-use tasks has dropped by up to 66% compared to predecessors.

1 Million Token Context

A million tokens means the model can ingest an entire codebase, a full legal document archive, or months of communication history in a single request. Analysis that once required chunking and multiple passes now happens in one.
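Before sending a whole codebase or archive in one request, it helps to check roughly whether it fits. The sketch below is a back-of-the-envelope estimate only. It assumes about 4 characters per token, a common rule of thumb rather than the model's actual tokenizer, and the `estimate_tokens` and `fits_in_context` helpers and the output reserve are hypothetical names and values chosen for illustration.

```python
import math

# Assumption: ~4 characters per token is a rough rule of thumb for English
# text and code. Real tokenizers vary, so treat the result as an estimate.
CHARS_PER_TOKEN = 4
CONTEXT_LIMIT = 1_000_000  # context window size stated in this post


def estimate_tokens(text: str, chars_per_token: int = CHARS_PER_TOKEN) -> int:
    """Return a rough token estimate for a piece of text."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(documents: list[str],
                    reserve_for_output: int = 50_000,
                    limit: int = CONTEXT_LIMIT) -> bool:
    """Check whether all documents fit in one request with room left for the reply."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserve_for_output <= limit
```

If the check fails, chunking is still needed. If it passes with little headroom, counting with a real tokenizer is the safer next step.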

Upfront Thinking Plans and Mid-Task Steering

Before executing a complex task, GPT-5.4 Thinking lays out an explicit plan. You can review it, redirect it, and adjust before the model starts burning tokens going the wrong direction.

More useful still: you can inject new instructions mid-task. Ask for a 10-source research report, decide midway you need 15 sources, and say so. The model incorporates the change without restarting.

Token Efficiency

GPT-5.4 completes agentic workflows with significantly fewer tool calls. The slightly higher per-token price becomes less relevant in practice because you are spending fewer tokens per completed task overall.

Hallucination Reduction

Individual factual claims are 33% less likely to be incorrect than with GPT-5.2, and full responses are 18% less likely to contain any errors. For knowledge work where accuracy compounds, this is a meaningful shift.
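To see why per-claim accuracy compounds, consider a document built from many claims. The sketch below assumes errors are independent across claims and uses a hypothetical 5% baseline error rate. Neither assumption comes from OpenAI, so the output illustrates the shape of the effect and does not reproduce the published 18% figure.

```python
def p_all_correct(claim_error_rate: float, num_claims: int) -> float:
    """Probability that every claim is correct, assuming independent errors."""
    return (1.0 - claim_error_rate) ** num_claims


baseline_rate = 0.05                         # hypothetical per-claim error rate
reduced_rate = baseline_rate * (1 - 0.33)    # 33% relative reduction, per the post

# Compare the chance of a fully correct document at different lengths.
for n in (1, 10, 50):
    before = p_all_correct(baseline_rate, n)
    after = p_all_correct(reduced_rate, n)
    print(f"{n:>3} claims: baseline {before:.3f}, reduced {after:.3f}")
```

Under these assumptions, the gap between the two columns widens as the number of claims grows, which is the sense in which accuracy compounds.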

Benchmark Performance

Benchmarks from AI companies require context. Companies choose which evaluations to publish, and the numbers rarely tell the complete story. That said, three results from this launch deserve a closer look.

GDPval

This is OpenAI's in-house benchmark, testing agents across 44 professions in the nine industries that contribute most to US GDP. It measures whether a model can complete tasks that move real work forward, not just answer trivia.

GPT-5.4 Thinking scored 83%, up sharply from 70.9% in GPT-5.2 and five points ahead of Anthropic's Opus 4.6 at 78%. Notably, the Thinking version outscored the Pro version here, which speaks to how well the standard model handles real-world task completion.

OSWorld

OSWorld gives models a live operating system and measures whether they can use it effectively. GPT-5.4 hits 75% accuracy with just 15 tool calls. GPT-5.2 topped out near 50% and needed nearly three times as many calls to get there. Fewer calls means cheaper, faster, and more reliable automation.

FrontierMath

FrontierMath contains problems that regularly stump expert mathematicians. A score above 10% was considered impressive a year ago. GPT-5.4 Pro's 38% is the highest ever recorded on this evaluation.

The honest caveat: Anthropic and Google each decline to publish scores on certain benchmarks, making direct comparisons incomplete. Treat these numbers as directional signals, not final verdicts.

Real-World Use Cases

The benchmark scores describe capability. The use cases describe where that capability shows up in actual work.

Knowledge Workers

GPT-5.4 can take a research topic, run web search across multiple sources simultaneously, and produce a fully formatted 15-slide PowerPoint presentation or a formula-rich Excel workbook from a single natural language prompt. A workflow that once took hours now takes minutes.

Teams in finance, consulting, and operations will see the most immediate ROI. The model respects output formatting, generates working spreadsheet formulas, and cites sources inline.

Developers

GPT-5.4 matches GPT-5.3 Codex on software engineering benchmarks while retaining the broader reasoning of a general model. It can one-shot complex applications, test its own code using computer use in a live environment, and iterate without requiring human review at each step.

The context switch cost between "coding model" and "thinking model" is eliminated. One model handles architecture, implementation, testing, and documentation.

Agentic Workflow Automation

On pricing:

- GPT-5.4 Thinking starts at $2.50 per million input tokens and $15 per million output tokens.

- GPT-5.4 Pro runs at $30 per million input and $180 per million output tokens.

Both are higher than their predecessors on paper, but the efficiency gains on tool-heavy tasks mean total costs frequently come in lower on a per-task basis.

If your workflows are agentic and tool-call-intensive, benchmark your actual spend before assuming the upgrade is more expensive.
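One way to run that comparison is to price each completed task rather than each token. In the sketch below, the GPT-5.4 Thinking prices come from this post. The predecessor prices and all token counts are hypothetical placeholders, so substitute figures from your own logs and billing data.

```python
def task_cost(input_tokens: int, output_tokens: int,
              input_price_per_m: float, output_price_per_m: float) -> float:
    """Return the dollar cost of one task given per-million-token prices."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


# GPT-5.4 Thinking prices as stated in this post.
NEW_INPUT_PRICE, NEW_OUTPUT_PRICE = 2.50, 15.00

# Hypothetical predecessor prices, for illustration only.
OLD_INPUT_PRICE, OLD_OUTPUT_PRICE = 2.00, 12.00

# Hypothetical usage: the older model spends twice the tokens on the same task.
old_cost = task_cost(400_000, 40_000, OLD_INPUT_PRICE, OLD_OUTPUT_PRICE)
new_cost = task_cost(200_000, 20_000, NEW_INPUT_PRICE, NEW_OUTPUT_PRICE)

print(f"old: ${old_cost:.2f} per task, new: ${new_cost:.2f} per task")
```

With these placeholder numbers, the model with the higher per-token price is cheaper per task. The conclusion reverses if the token savings on your workload are small, which is why measuring real usage matters.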

GPT-5.4 vs. The Competition

GPT-5.4 leads the current field on GDPval, OSWorld computer use, and advanced mathematics. On those three dimensions, no model with published scores ranks higher.

Where it still lags:

- Front-end design quality remains behind Opus 4.6 and Gemini 3.1 Pro. Output tends to be functional and well-structured, but lacks the aesthetic judgment the best Anthropic and Google models bring to UI work.

- Conversational writing tone requires more prompting to get right. Claude and Gemini produce natural, non-generic writing out of the box. GPT-5.4 benefits from specific style guidance before it matches that register.

- Task completion in agentic environments occasionally stalls before finishing long workflows. OpenAI has acknowledged this and is actively working on it.

One thing worth noting on the prompting side: GPT-5.4 responds differently to instructions than Opus 4.6. The internal reasoning architecture is distinct, and prompts optimized for Claude will not transfer cleanly. If you are switching models inside an agentic stack, budget time to rewrite your system prompts for the new model's behavior.

The practical takeaway is clear. No single model wins every category. GPT-5.4 is the strongest general-purpose choice for knowledge work, coding, and agentic automation. For front-end development or content requiring a strong creative voice, Opus 4.6 is still worth reaching for.

Conclusion

Something meaningful has shifted in how these models are being built and shipped.

Both Anthropic and OpenAI have refined their pre-training pipelines to the point where new frontier models arrive on a near-monthly cadence. The specialist model era is effectively over. The unified model era has started.

GPT-5.4 is the most capable general-purpose model available today on the benchmarks that matter most for real work. Whether that holds for three months or three weeks is genuinely uncertain given the current pace of releases.

For product and engineering teams, the more important question is not which model is currently ahead. It is whether your infrastructure can adapt quickly when the answer changes. The teams that are winning with AI right now are not betting on one model. They are building systems that can swap models in and out as the landscape shifts.
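A minimal sketch of what swappable infrastructure can look like: application code talks to a thin router, and each model sits behind the same function signature. The `ModelRouter` class, the backend names, and the stub functions are all hypothetical. Real backends would wrap each provider's SDK, which is not shown here.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# A backend is any function that takes a prompt and returns text.
Backend = Callable[[str], str]


@dataclass
class ModelRouter:
    """Routes prompts to whichever registered backend is currently active."""
    backends: Dict[str, Backend] = field(default_factory=dict)
    active: str = ""

    def register(self, name: str, backend: Backend) -> None:
        self.backends[name] = backend
        if not self.active:  # the first registered backend becomes the default
            self.active = name

    def use(self, name: str) -> None:
        if name not in self.backends:
            raise KeyError(f"unknown backend: {name}")
        self.active = name

    def complete(self, prompt: str) -> str:
        return self.backends[self.active](prompt)


# Stub backends stand in for real provider calls (hypothetical).
def stub_gpt(prompt: str) -> str:
    return f"[gpt-5.4 stub] {prompt}"


def stub_opus(prompt: str) -> str:
    return f"[opus-4.6 stub] {prompt}"


router = ModelRouter()
router.register("gpt-5.4", stub_gpt)
router.register("opus-4.6", stub_opus)
```

Application code calls `router.complete(...)` and never names a model directly, so switching is a single `router.use("opus-4.6")` call. Because prompts do not transfer cleanly between models, a fuller version would likely keep a system prompt per backend as well.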

GPT-5.4 is a strong signal that it is time to reassess workflows built around 2024-era model assumptions. The tools have moved. The baseline has moved. The only question is whether your process has moved with them.


More topics you may like

GPT-5.1 vs GPT-5: Key Differences and Improvements

Faisal Saeed

GPT-5.2 Is Here: What Changed, Why It Matters, and Who Should Care

Faisal Saeed

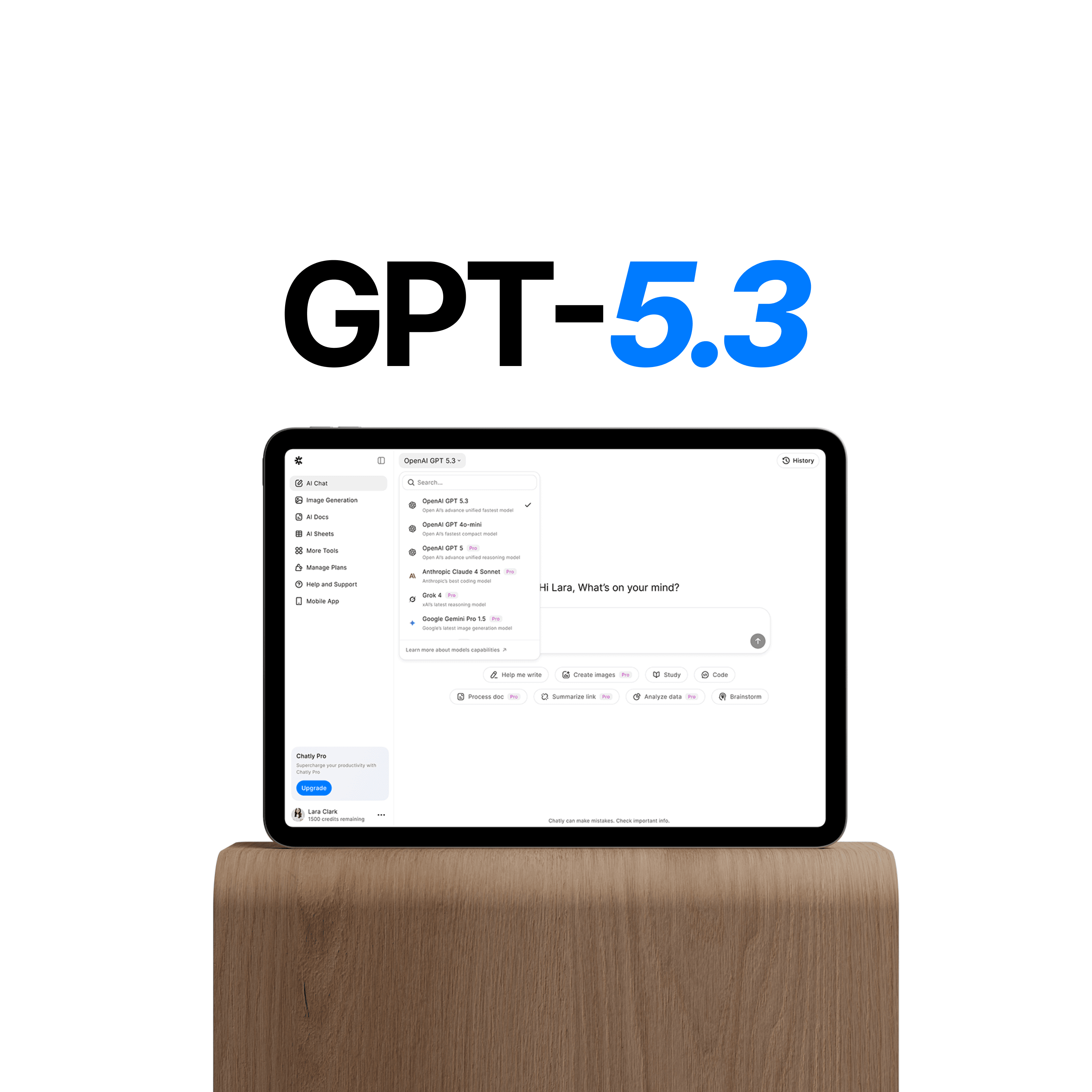

GPT-5.3 ("Garlic") Release Timeline & Expected Features: What We Know So Far

Daniel Mercer

Gemini 3.1 Pro: What It Is, What Changed, and What It Means for You

Maya Collins

Claude Opus 4.6: New Features, Improvements, and Benchmark Performance

Elena Foster