How to Turn Raw User Feedback into a Product Roadmap with AI?

Product managers do not have a feedback problem. They have a processing problem. Interviews, support tickets, NPS responses, and app store reviews exist in abundance; what is missing is a reliable, repeatable way to turn all of it into roadmap decisions without losing a week to manual analysis.

By the end of this guide, you will have a step-by-step AI workflow that takes raw, unstructured feedback and produces a prioritised, timeline-structured product roadmap you can walk into a stakeholder meeting with.

From Raw Feedback to Roadmap: An AI-Powered Workflow for Product Managers

User feedback arrives constantly through interviews, support tickets, surveys, and reviews, but it is unstructured, scattered, and time-consuming to process manually. The real challenge in product discovery is not collecting feedback but converting it into clear roadmap decisions that teams can align around and that stakeholders can defend. The workflow below runs in two phases:

- The first handles feedback preparation: centralising, cleaning, clustering, and translating raw input into validated product opportunities.

- The second is roadmap construction: structuring those opportunities into a defensible, timeline-driven plan with reasoning that holds up when priorities get questioned.

Each step builds on the previous one, so running them in sequence produces cleaner output at every stage.

Step 1: Collect and Centralise Feedback

The first step is bringing feedback from every channel into one place before any analysis begins. Feedback scattered across Slack threads, Notion docs, and spreadsheets cannot be clustered consistently because each source carries a different format and level of detail.

The channels worth pulling from typically include:

- Support tickets and customer service conversations

- User interview notes and research transcripts

- NPS open-text responses and survey comments

- App store reviews and public review platforms

- In-product feedback and feature request submissions

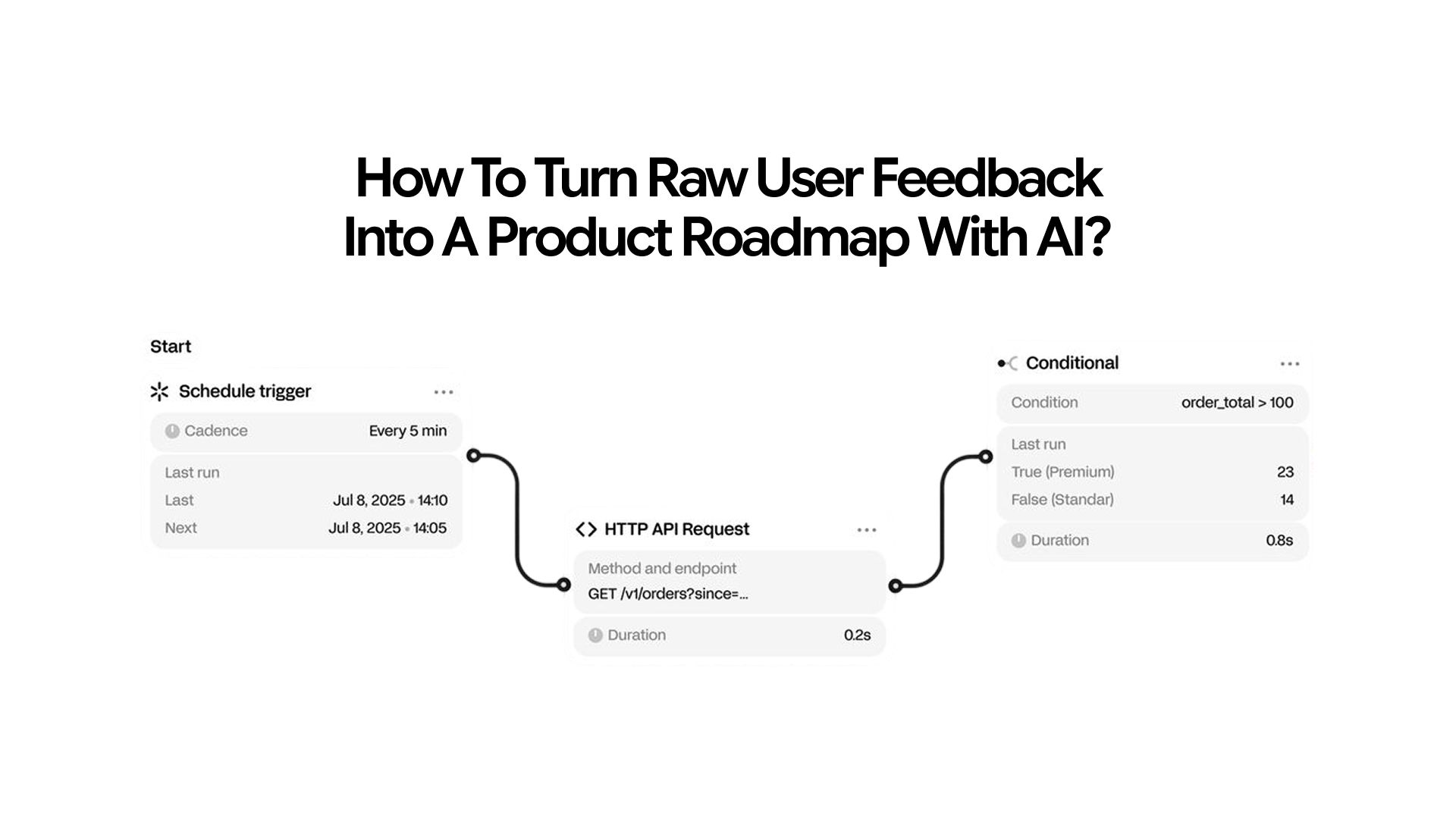

Traditionally, teams had to export feedback manually from each tool and paste it into a single document, which was slow and repetitive. Many AI assistants now support connectors and MCP (Model Context Protocol) integrations that remove much of this friction:

- Connect AI directly to tools like Slack, Notion, Intercom, and Linear

- Pull feedback from all tools using a single prompt instead of manual exports

- Eliminate the need to switch between multiple platforms

- Turn a multi-hour collection process into an automated or near-instant workflow

Once consolidated, paste the raw feedback into AI and use this prompt to clean it before any clustering begins:

"Here is a batch of raw user feedback: [paste]. Remove duplicate entries, flag any that are too vague to act on, and rewrite each unique piece as a single clear sentence capturing what the user is asking for or complaining about."
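Before sending a large batch to the AI, a cheap deterministic pre-pass can strip exact duplicates so the model spends its context on unique items. This is a minimal sketch, assuming feedback is available as a list of strings; the four-word vagueness threshold is an illustrative assumption, and reworded near-duplicates are still left for the prompt above to catch.

```python
import re

# Assumption: entries with fewer than this many words are flagged as too vague.
# The value is illustrative, not a standard.
MIN_WORDS = 4

def normalise(text: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace for comparison."""
    text = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(text.split())

def prepare_feedback(entries: list[str]) -> tuple[list[str], list[str]]:
    """Drop exact duplicates (after normalisation) and split out vague entries."""
    seen: set[str] = set()
    usable: list[str] = []
    vague: list[str] = []
    for entry in entries:
        key = normalise(entry)
        if not key or key in seen:
            continue  # empty, or a duplicate of an earlier entry
        seen.add(key)
        if len(key.split()) < MIN_WORDS:
            vague.append(entry.strip())
        else:
            usable.append(entry.strip())
    return usable, vague

# Hypothetical raw feedback
raw = [
    "Export to CSV is broken on large reports!",
    "export to csv is broken on large reports",
    "Bad app.",
    "Onboarding takes too long before I see any value",
]
usable, vague = prepare_feedback(raw)
print(usable)
print(vague)
```

The first copy of each entry is kept with its original wording, so the AI still sees what the user actually wrote.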

A summary generator works well here for compressing long batches of interview notes or survey responses into concise, scannable points. If feedback lives in uploaded documents or PDFs, the Chat PDF feature lets you query it directly without copying everything into a prompt.

Step 2: Cluster Feedback into Themes

With clean summaries in hand, the next step is grouping them by the underlying issue each one describes. This thematic clustering is where patterns in user research become visible across the full dataset rather than in individual responses.

- Paste summaries into AI and ask it to cluster them into themes

- For each theme, include a short label, the number of feedback items, and 2–3 representative examples

Before moving on, validate the output carefully.

- First, confirm that AI has separated feature requests from real problems, since a requested feature is not always the actual underlying need.

- Second, review the representative examples for each theme yourself, because AI often groups items based on surface similarity and can mix different problems that look related.
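One way to make the AI's counts checkable is to ask it to return one theme label per summary instead of only a finished cluster list, then compute the counts and representative examples yourself. This is a minimal sketch, assuming the labels come back as (theme, summary) pairs; the theme names and summaries are hypothetical.

```python
from collections import defaultdict

def summarise_themes(labelled: list[tuple[str, str]], n_examples: int = 3) -> list[dict]:
    """Group (theme, summary) pairs into a label, an item count, and up to
    n_examples representative summaries, largest theme first."""
    groups: dict[str, list[str]] = defaultdict(list)
    for theme, summary in labelled:
        groups[theme].append(summary)
    themes = [
        {"label": theme, "count": len(items), "examples": items[:n_examples]}
        for theme, items in groups.items()
    ]
    # sorted() is stable, so themes with equal counts keep first-seen order
    return sorted(themes, key=lambda t: t["count"], reverse=True)

# Hypothetical AI output: one theme label per cleaned summary
labelled = [
    ("Onboarding friction", "Setup took me over an hour"),
    ("Export reliability", "CSV export fails on large reports"),
    ("Onboarding friction", "I did not know what to do after signing up"),
    ("Onboarding friction", "Too many steps before the first dashboard"),
    ("Onboarding friction", "The tutorial skips the step I needed"),
]
for theme in summarise_themes(labelled):
    print(theme["label"], theme["count"], theme["examples"])
```

If these recomputed counts differ from the ones in the AI's cluster summary, that is a signal to reread the examples before moving on.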

Teams that use a dedicated user research tool such as Dovetail can run this clustering natively within the platform; teams without one can run the same step in an AI assistant from a document.

For more details about how AI chat helps in feedback collection, read: How AI Chat Helps with Survey & Feedback Collection

Step 3: Translate Themes into Product Opportunities

Once themes are validated, the next step is translating each one from a problem description into a product opportunity. A theme describes what users are struggling with, and a product opportunity reframes that struggle into something specific enough for a team to scope and build against.

- "Users are dropping off during onboarding" becomes "a redesigned onboarding flow that reduces time to first value"

- "Users cannot find the export feature" becomes "a navigation restructure or in-app search improvement"

- "Reports take too long to generate" becomes "background processing with email delivery or progress indicators"

Ask AI to make that translation across all validated themes:

"Here are the top themes from our user feedback: [list]. For each theme, identify the underlying user need it represents, suggest one or two potential product responses, and flag whether this looks like a widespread need or an edge case based on the frequency data."

The edge case flag matters because a theme mentioned in three pieces of feedback out of five hundred is a data point worth noting, not a product priority. AI surfaces which themes have enough signal to warrant serious consideration, but the judgment about what that signal actually means for your product still belongs to the PM.
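The arithmetic behind the edge-case flag is simple enough to check by hand: three mentions out of five hundred is a 0.6% share. This is a sketch of the same check, assuming a hypothetical 2% threshold; each team should set its own cut-off.

```python
def classify_signal(count: int, total: int, threshold: float = 0.02) -> str:
    """Label a theme by its share of all feedback items.

    Assumption: the 2% default is an illustrative cut-off, not an industry standard.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    share = count / total
    return "widespread" if share >= threshold else "edge case"

print(f"{3 / 500:.1%}")          # 0.6%
print(classify_signal(3, 500))   # edge case
print(classify_signal(40, 500))  # widespread
```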

For adding market context to a specific opportunity, such as whether a pain point is industry-wide or specific to your user segment, the AI search engine retrieves current information without leaving the workflow.

Step 4: Prioritise with a Scoring Framework

With a validated list of product opportunities, the next step is scoring them against a structured framework. RICE (Reach, Impact, Confidence, Effort) and ICE (Impact, Confidence, Ease) both provide the structure needed to compare ideas consistently and keep gut feel from overriding the process.

AI should not supply the estimates, since those need to come from people who know the users and the business, but it can apply the framework consistently once you feed it the inputs.

Run RICE as an interactive session rather than a one-shot prompt. This forces you to think through each dimension individually instead of estimating all four at once:

"Help me score the following product opportunities using the RICE framework. For each one, ask me for Reach, Impact, Confidence, and Effort. After I answer, calculate the RICE score and rank the opportunities."

Once scored, ask AI to flag high-impact, lower-effort items as near-term candidates and identify any opportunity where the estimated effort feels mismatched with the size of the problem it addresses.
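Knowing the arithmetic the AI applies lets you spot-check its ranking. In the commonly used formulation, RICE is Reach × Impact × Confidence ÷ Effort, and ICE (in one common variant) multiplies Impact, Confidence, and Ease. This is a minimal sketch: the impact scale (3 down to 0.25), the person-month effort unit, and every opportunity estimate below are illustrative assumptions, not data from this guide.

```python
from dataclasses import dataclass

@dataclass
class Opportunity:
    name: str
    reach: float       # users affected per quarter (your estimate)
    impact: float      # e.g. 3 = massive, 2 = high, 1 = medium, 0.5 = low, 0.25 = minimal
    confidence: float  # 0.0 to 1.0, e.g. 0.8 for 80%
    effort: float      # person-months

    @property
    def rice(self) -> float:
        """RICE = Reach x Impact x Confidence / Effort."""
        return self.reach * self.impact * self.confidence / self.effort

def ice(impact: float, confidence: float, ease: float) -> float:
    """ICE = Impact x Confidence x Ease, each typically scored 1 to 10."""
    return impact * confidence * ease

def rank(opps: list[Opportunity]) -> list[tuple[str, float]]:
    """Return (name, RICE score rounded to one decimal), highest score first."""
    ordered = sorted(opps, key=lambda o: o.rice, reverse=True)
    return [(o.name, round(o.rice, 1)) for o in ordered]

# Hypothetical estimates, for illustration only
opps = [
    Opportunity("Redesigned onboarding flow", reach=2000, impact=2, confidence=0.8, effort=4),
    Opportunity("In-app search for export", reach=600, impact=1, confidence=1.0, effort=1),
    Opportunity("Background report generation", reach=500, impact=2, confidence=0.5, effort=3),
]
print(rank(opps))
```

Dividing by effort is what lets a small, well-understood fix outrank a larger feature with higher reach, which is exactly the tradeoff worth discussing rather than accepting blindly.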

It is worth noting what RICE scores do not account for:

- Strategic alignment with company-level goals

- Technical dependencies between items

- Current team capacity

The scores create structure and surface tradeoffs, but they inform the conversation rather than replace it. The AI for product managers guide covers RICE scoring and common prioritisation mistakes in more depth.

Step 5: Structure the Roadmap Timeline

With scored opportunities in hand, the next step is organising them into a timeline that reflects both priority and readiness. Use this prompt to generate the initial structure:

"Here are our prioritised product opportunities with RICE scores: [list]. Organise them into short-term (next quarter), mid-term (next two quarters), and long-term (beyond six months) based on the scores, dependencies, and strategic fit. For each bucket, write a one-paragraph rationale explaining the sequencing."

The three buckets work as follows:

- Short-term (next quarter) covers high-confidence, high-impact items that are well-scoped, free of major dependencies, and ready to move into planning. These are typically the opportunities that scored highest on RICE and represent the clearest signal from the feedback dataset.

- Mid-term (next two quarters) covers validated opportunities that need further scoping, design exploration, or dependency resolution before they can move forward. They have a strong signal but are not yet ready for resourcing decisions.

- Long-term (beyond six months) covers strategic bets and lower-confidence opportunities worth monitoring but not yet worth committing engineering time to. These often represent themes with a strong qualitative signal but insufficient frequency data to prioritise now.
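Writing the bucket rules down explicitly makes it clear why each item landed where it did, and gives you something to compare the AI's placement against. This is a sketch with hypothetical thresholds (a RICE score of 500 and a 50% confidence floor) and a single hypothetical `ready` flag standing in for "well-scoped and free of major dependencies"; tune both to your own scoring scale.

```python
# Illustrative thresholds; set these against your own RICE scale.
HIGH_SCORE = 500
MIN_CONFIDENCE = 0.5

def assign_bucket(rice: float, confidence: float, ready: bool) -> str:
    """Map one scored opportunity to a roadmap bucket.

    ready is a hypothetical flag meaning "well-scoped and free of major
    dependencies"; it stands in for the judgement described above.
    """
    if confidence < MIN_CONFIDENCE:
        return "long-term"   # low-confidence bets: monitor, do not commit yet
    if rice >= HIGH_SCORE and ready:
        return "short-term"  # high score and ready for planning
    return "mid-term"        # validated but needs scoping or unblocking

# Hypothetical inputs: (RICE score, confidence, ready)
scored = {
    "Redesigned onboarding flow": (800.0, 0.8, False),  # waiting on a dependency
    "In-app search for export": (600.0, 1.0, True),
    "Background report generation": (166.7, 0.5, True),
    "AI-generated report summaries": (300.0, 0.3, True),
}
buckets = {name: assign_bucket(*values) for name, values in scored.items()}
print(buckets)
```

A rule like this is only a first pass: strategic fit is not among its inputs, so expect to move some items by hand and record the reason in the written rationale.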

Step 6: Write the Rationale for Every Sequencing Decision

A list of items with delivery dates can always be challenged, but a roadmap that explains why item A comes before item B is significantly harder to argue against. This is the step many product teams skip, and skipping it is a common reason roadmaps fall apart in stakeholder reviews.

For each bucket, the rationale should address four things:

- How many users raised the issue, and how severely did they describe it?

- Does the opportunity advance a company-level goal or OKR?

- Are there dependencies that must ship before this item?

- Is the estimated build effort proportionate to the scale of the problem being solved?

Ask AI to draft the rationale once the structure is in place:

"Here is our roadmap structure with RICE scores and bucket placement: [paste]. For each bucket, write a one-paragraph rationale that covers signal strength, strategic fit, dependencies, and effort-to-impact ratio."

The written rationale is also what makes the roadmap useful as a reference document long after the planning meeting ends. When a priority gets questioned three months later, the reasoning is already on record.

Step 7: Produce Cross-Audience Versions

A roadmap presented to engineering looks different from one presented to a board or a customer success team. The underlying priorities are the same, but the framing and level of technical detail need to change depending on who is in the room. Once the base roadmap and rationale are set, ask AI to adapt it:

"Here is our product roadmap with rationale: [paste]. Write two versions, one for an engineering audience that includes dependency logic and sequencing reasoning, and one for a non-technical stakeholder audience that focuses on user impact and business outcomes."

Step 8: Treat the Roadmap as a Living Document

A roadmap built from user feedback is not a delivery contract. It is a structured hypothesis about what to build next, and it should be revisited as new information comes in.

Revisit the roadmap when a major feature ships and new feedback comes in response to it, when a new support ticket category starts growing quickly, or when a planning cycle is approaching and priorities need to be revalidated.
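The "ticket category starts growing quickly" trigger can be checked mechanically between reviews. This is a minimal sketch, assuming you can export ticket counts per category for two consecutive periods; the category names and the +50% growth and 10-ticket minimum thresholds are hypothetical.

```python
def growing_categories(previous: dict[str, int], current: dict[str, int],
                       min_growth: float = 0.5, min_count: int = 10) -> list[str]:
    """Return ticket categories whose volume grew by at least min_growth
    (0.5 means +50%) from the previous period to the current one.

    Assumptions: both thresholds are illustrative; a category with no tickets
    last period counts as growing once it reaches min_count tickets.
    """
    flagged = []
    for category, now in current.items():
        before = previous.get(category, 0)
        if now < min_count:
            continue  # too few tickets to read anything into
        if before == 0 or (now - before) / before >= min_growth:
            flagged.append(category)
    return sorted(flagged)

# Hypothetical monthly ticket counts per category
last_month = {"billing": 40, "export": 12, "login": 30}
this_month = {"billing": 42, "export": 25, "login": 31, "sso": 14}
print(growing_categories(last_month, this_month))  # ['export', 'sso']
```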

A monthly or quarterly cadence works for most teams. For building prompt templates you can reuse across each step of this workflow, the system prompts guide covers how to structure prompts that produce consistent output across sessions.
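One way to keep prompts consistent across monthly or quarterly runs is to store each step's prompt as a template and fill in only the data. This is a minimal sketch using Python's standard `string.Template`; the template text reuses the Step 3 prompt from this guide, while the `build_prompt` helper and the theme counts are hypothetical.

```python
from string import Template

# Reusable template for the Step 3 prompt; $themes is filled on each run.
OPPORTUNITY_PROMPT = Template(
    "Here are the top themes from our user feedback: $themes. "
    "For each theme, identify the underlying user need it represents, "
    "suggest one or two potential product responses, and flag whether this "
    "looks like a widespread need or an edge case based on the frequency data."
)

def build_prompt(themes: list[tuple[str, int]]) -> str:
    """Render themes as 'label (n items)' and substitute them into the template."""
    listing = "; ".join(f"{label} ({count} items)" for label, count in themes)
    return OPPORTUNITY_PROMPT.substitute(themes=listing)

print(build_prompt([("Onboarding friction", 120), ("Export reliability", 45)]))
```

`Template.substitute` raises a `KeyError` if a placeholder is left unfilled, which catches a forgotten input before the prompt is sent.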

Common Mistakes to Avoid

Even with AI speeding up analysis, most breakdowns happen in how the workflow is used rather than in the tools themselves:

- Skipping centralisation. Fragmented, multi-source feedback produces inconsistent clustering. Bringing everything into one place before running any AI analysis takes extra time but produces cleaner results at every step downstream.

- Accepting AI theme labels without reading the examples. AI groups feedback by surface-level similarity and occasionally clusters items that look related but reflect different problems. Reading the representative examples is what catches those misclassifications before they reach the roadmap.

- Treating AI opportunity framing as decisions. When AI translates a theme into a product opportunity, it is generating a hypothesis. Validating whether it addresses the real underlying need still requires user conversation and product judgment.

- Using RICE scores as the final answer. Scores create structure, but they do not account for strategic alignment, technical feasibility, or team capacity. The scores inform the conversation rather than replace it.

- Building a roadmap without written rationale. A prioritised list without reasoning is just a list. The rationale is what makes the roadmap defensible in reviews and useful as a reference when priorities are later questioned.

Conclusion

Turning user feedback into a roadmap is less about collecting more data and more about creating a clear path from noise to decisions. AI speeds up the hard parts, such as summarising, grouping, and structuring information, but the value still comes from how product teams interpret and refine that output.

When feedback is consistently processed into themes, opportunities, and prioritised items with clear reasoning, roadmap decisions become easier to explain, defend, and execute.
