Moonshot AI Introduces Kimi K2.5: A Worthy Successor to Kimi K2?

It seems like yesterday when everyone was gushing over Kimi K2. I remember people calling it all sorts of things. The Deepseek moment. The turning point for open-source AI from China.

While some praised its affordability and features, there were some who complained about speed and smaller context window. Hence, a follow-up was imminent.

And that just happened.

Yesterday, Moonshot AI dropped Kimi K2.5, and the benchmarks are turning heads across the developer community. If you think Kimi K2 or its Thinking variant were remarkable, wait till you find out what Kimi K2.5 brings to the table.

So, let's unpack this new AI giant and have a look under the hood to see what makes this new model so effective and impressive.

What Makes Kimi K2.5 Different

Kimi K2.5 represents Moonshot AI's most ambitious release yet, achieving "open-source SoTA performance" across agents, coding, and visual understanding. It's built from the ground up as a native multimodal system, not a text model with vision bolted on.

More importantly, it delivers this performance at 16-25x lower cost than Western competitors.

The model sits within the Kimi family just like Claude’s Opus 4.5, Sonnet 4.5, and Haiku 4.5 variants. K2.5 targets the sweet spot between raw power and practical deployment. It is smart enough for complex tasks but efficient enough for production use.

The Core Capabilities You Need to Know

Kimi K2.5 comes jam-packed with new and improved features and capabilities. Understanding them is crucial for evaluating whether K2.5 fits your use case. Each represents a significant leap from its predecessor.

1. Native Multimodal Architecture

K2.5 processes images and video as naturally as text, supporting images in png, jpeg, webp, and gif formats. Video support extends to mp4, mpeg, mov, avi, flv, mpg, webm, wmv, and 3gpp. This delivers deep visual understanding for analyzing layouts, extracting chart data, and comprehending video narratives.

The model handles resolution up to 4K for images and 2K for video. Anything beyond these thresholds wastes processing time without improving accuracy.

Key visual capabilities:

- Dynamic token calculation: Processing costs scale with content complexity

- Context-aware analysis: Understanding spatial relationships beyond object detection

- Multi-frame video comprehension: Keyframe extraction ensures temporal understanding

- Document intelligence: Extract structured data from invoices, forms, and reports

2. Breakthrough Coding Performance

Kimi K2.5 scored 76.8% on SWE-bench Verified and 73.0% on SWE-bench Multilingual. These scores, while trailing by 3-4%, compete directly with GPT-5.2 and Claude Opus 4.5.

The breakthrough centers on frontend development, where K2.5 generates production-ready code with complex effects like dynamic layouts, scroll animations, and responsive breakpoints.

The model excels at full-stack scenarios, maintaining context across backend logic, API design, database schemas, and frontend implementation. When debugging, K2.5 traces errors across multiple files and suggests fixes accounting for the entire system architecture.

3. Ultra-Long Context Window

The biggest complaint about Kimi K2 was its context window. Moonshot AI fixed it.

K2.5 provides 256K tokens of context which is roughly 192,000 words or 384 pages of single-spaced text. This capacity handles about 200MB of text content per request.

So, you do not have to divide your tasks into chunks anymore. Long context eliminates chunking and retrieval strategies that introduce latency and accuracy issues. You can process entire codebases, multi-chapter documents, or comprehensive datasets in a single prompt.

4. Advanced Reasoning with Thinking Mode

Moonshot AI’s latest model supports two operational modes controlled via the thinking parameter.

- Standard mode optimizes for speed and cost.

- Thinking mode enables multi-step reasoning for complex problems.

Thinking mode breaks down challenges, evaluates intermediate results, and adjusts approaches before final answers. It's crucial for mathematical proofs, complex logic, architectural decisions, and multi-step problem decomposition. The model can invoke tools multiple times, analyze outputs, chain operations, and backtrack when needed.

The trade-off is computational cost and response time. For simple queries like text reformatting, disable thinking mode to minimize costs.

5. Agent Orchestration and Subagent Swarms

K2.5 can dynamically create specialized subagents, assign tasks, and synthesize outputs. The orchestrator analyzes complex tasks, identifies required specializations (AI Researcher, Physics Researcher, Fact Checker, Web Developer), creates subagents, distributes work, and aggregates results.

Agent orchestration enables:

- Research synthesis: Deploy specialized agents for different domains, then merge insights

- Multi-step verification: Fact-checking agents validate content generation claims

- Parallel task execution: Agents work simultaneously on independent subtasks

- Iterative refinement: Agents critique each other's work and iterate toward higher quality

Benchmark Performance Analysis

K2.5's benchmarks demonstrate competitive performance across categories. Let's examine results versus GPT-5.2, Claude Opus 4.5, and Gemini 3 Pro.

Agent Tasks

Kimi K2.5 outscored other AI models in most of the categories that matter.

- K2.5 scored 50.2% on Humanity's Last Exam versus competitors' 43.2-45.8% which is a significant advantage.

- On BrowseComp, K2.5 hit 74.9% against 57.8-65.8%, a commanding lead being 8% clear on the nearest competitor.

- DeepSearchQA shows K2.5 at 77.1% compared to 63.2-76.1% for OpenAI,Claude, and Gemini’s best models.

These are meaningful gaps translating to real-world capability differences. K2.5's consistent lead across agent benchmarks suggests fundamental advantages in multi-step reasoning and tool use.

Competitive Coding Performance

Kimi K2.5 comes marginally short when it comes to coding. However, when you consider the price difference between the models, the difference does not sound too big.

SWE-bench Verified:

- K2.5 76.8%

- OpenAI: 80.0%

- Claude: 80.9%

- Gemini: 76.2%

SWE-bench Multilingual:

- K2.5: 73.0%

- OpenAI: 72%

- Claude: 77.5%

- Gemini: 65%

The takeaway is parity rather than dominance. K2.5 competes at the highest level without defining a new ceiling.

For most workflows, these differences won't materially impact productivity. The more relevant question is cost per task, where K2.5's pricing advantage becomes decisive.

Vision Capabilities

In terms of image and video understanding, Kimi K2.5 significantly outperforms its predecessors and now offers capabilities as good as, if not better than, top AI models.

In terms of image:

-

MMMU Pro

a. K2.5: 78.5%

b. OpenAI: 79.5%

c. Claude: 74%

d. Gemini: 81% -

MathVision

a. K2.5: 84.2%

b. OpenAI: 83%

c. Claude: 77.1%

d. Gemini: 86.1% -

OmniDocBench

a. K2.5: 88.8%

b. OpenAI: 85.7%

c. Claude: 87.7%

d. Gemini: 88.5%

In terms of video:

-

VideoMMU

a. K2.5: 86.6%

b. OpenAI: 85.9%

c. Claude: 84.4%

d. Gemini: 87.6% -

LongVideoBench

a. K2.5 79.8%

b. OpenAI: 79.5%

c. Claude: 74%

d. Gemini: 81%

The pattern shows consistent high performance without dominating every category. This suggests well-balanced multimodal architecture rather than benchmark-specific optimization.

Kimi K2.5 vs K2

Understanding the jump from K2 to K2.5 requires looking beyond version numbers. The architectural changes represent months of fundamental research, not just parameter tuning.

Architecture Improvements

K2.5 features a redesigned multimodal encoder that processes visual information more efficiently. The Kimi K2 model treated images as compressed token sequences, which worked but created bottlenecks in complex visual reasoning.

K2.5's native multimodal architecture eliminates this compression step, allowing the model to maintain higher-fidelity visual representations throughout inference.

The context window remains at 256K tokens, but K2.5 handles this capacity more efficiently. Internal benchmarks show 23% faster processing on long-context tasks compared to K2. This improvement comes from architectural changes in attention mechanisms, not just hardware optimization.

New Capabilities

Agent orchestration is entirely new to K2.5. K2 could use tools and follow multi-step procedures, but it couldn't dynamically create specialized subagents. This capability opens up qualitatively different use cases. For example, workflows that require parallel specialized processing weren't feasible before. They are now.

The thinking mode toggle is another addition. K2 always operated in a single mode, which meant you paid for deep reasoning even on simple tasks. Kimi k2 Thinking came months later in November.

K2.5 offers both modes instantly. Its dual-mode operation lets you optimize for speed or depth depending on your needs.

Video understanding received significant upgrades. K2 could process video but struggled with long-form content and temporal relationships. K2.5's improved keyframe extraction and temporal modeling make it substantially better at following narratives and understanding sequences.

Performance Gains

Across the benchmark suite, K2.5 shows 12-18% improvements over K2 on agent tasks. Coding benchmarks improved 8-15%, with the largest gains in frontend development and UI generation.

Vision tasks saw 10-14% improvements, particularly on document understanding and video analysis.

A 10% improvement in code generation might mean the difference between generating code that needs light editing versus substantial refactoring. In agent tasks, 15% better performance often separates reliable automation from systems that require constant human intervention.

Technical Implementation: Getting Started

The API is OpenAI-compatible, minimizing switching costs. Let's walk through practical implementation details.

API Setup and Authentication

Install OpenAI SDK: pip install --upgrade 'openai>=1.0'.

Verify: python -c 'import openai; print("version =",openai.__version__)'.

Set API key: export MOONSHOT_API_KEY='your-key-here'.

Initialize client pointing to https://api.moonshot.ai/v1.

The SDK handles everything else identically. Test with simple completion before production workloads.

Working with Images

Image processing requires base64 encoding.

- Read the image

- Encode with

base64.b64encode() - construct:

data:image/[extension];base64,[encoded-data]. - Pass a list to

contentfield. Oneimage_urlpart, onetextpart.

Critical image constraints:

- Resolution ceiling: 4K maximum (4096×2160)

- Formats: png, jpeg, webp, gif only

- No URL support: Must use base64, can't pass HTTP URLs

- Request body limit: 100MB total size

Working with Video

Video follows image patterns with one critical difference—large files require file upload API instead of base64. The 100MB limit makes inline encoding impractical for most video.

For smaller videos, use: data:video/[extension];base64,[encoded-data]. Content structure mirrors image requests.

Video best practices:

- Resolution target: 2K maximum (2048×1080)

- Upload for reuse: Use file upload for repeated references

- Token estimation: Check consumption before processing

- Format support: mp4, mpeg, mov, avi, flv, mpg, webm, wmv, 3gpp

API Parameters

K2.5 uses mostly standard parameters with several fixed values.

max_tokensdefaults to 32,768.- Temperature and sampling are locked. Thinking mode uses

temperature=1.0andtop_p=0.95, non-thinking usestemperature=0.6.

The thinking parameter controls reasoning: {"type": "enabled"} or {"type": "disabled"}. Default is enabled. Other fixed parameters: n=1, presence_penalty=0.0, frequency_penalty=0.0.

Pricing and Cost Optimization

K2.5's pricing differs dramatically from Western competitors.

- Input costs $0.60 per million tokens

- Output $3.00 per million

- Cache hits only cost $0.10 per million

The 256K context window costs roughly $153 at cache-miss rates, or $25 with cache hits. Maximum output runs about $96. A typical full-context conversation might cost $200-250 without caching, $75-100 with optimization.

Structure prompts to maximize caching by repeating common elements. System instructions, reference documents, and code bases should appear identically across requests. Moonshot's caching recognizes repeated content regardless of position.

Use token estimation API before expensive requests. Selectively enable thinking mode based on complexity. Batch similar requests to maximize cache hit rates.

Real-World Use Cases

Let's ground this in practical applications where K2.5 delivers measurable value.

Software Development

K2.5 excels in full-stack scenarios. Feed it requirements and architecture diagrams, get database schemas, API endpoints, and frontend components. Code review benefits from 256K context as you can now upload entire repositories to trace bugs across files.

Frontend development is particularly strong. Describe dashboard layouts, specify component libraries, get production-ready code with responsive design and accessibility. Complex animations come out correctly more often than competitors.

Document and Video Processing

Long-form document analysis leverages ultra-long context. Upload contracts, research papers, or specifications for targeted questions requiring synthesis across hundreds of pages. K2.5 extracts sections, identifies contradictions, and maintains awareness of distant context.

Video content analysis enables new workflows. Upload product demos for structured documentation. Process training videos for searchable transcripts with semantic understanding. Analyze user research sessions for insights and pain points.

Research and Analysis

Agent orchestration makes complex research practical. Assign subagents to gather data, verify facts, analyze trends, and synthesize findings. The orchestrator ensures consistency and handles conflicts between sources.

Academic research benefits from multi-domain synthesis. Process complete papers from different fields, identify common themes, and propose novel connections. Long context eliminates working from abstracts only.

Enterprise Automation

Document processing automates tedious workflows. Extract data from invoices, purchase orders, and receipts regardless of format variations. K2.5's vision handles scanned documents and digital PDFs with equal accuracy.

Customer support uses agent swarms effectively. One agent analyzes queries, another searches knowledge bases, a third drafts responses, a fourth checks policy compliance. The result is accurate, on-brand support with less human intervention.

Data analysis combines code generation and reasoning. Upload datasets, describe goals, and K2.5 writes analysis code, executes it, interprets results, and generates visualizations. For exploratory analysis, this dramatically reduces iteration time.

Limitations and Considerations

K2.5 is strong but it’s not, by any stretch of imagination, perfect. It has genuine constraints you should understand before production deployments.

Fixed Parameter Constraints

Locked parameters (temperature, top_p, n, penalties) limit fine-tuning for specific use cases. Single completion limitation (n=1) means multiple API calls for comparing variations. No streaming support for vision requests creates noticeable latency.

Technical Limitations

100MB request body limit constrains video processing. Base64-only image support requires downloading and encoding before requests. No direct URL support adds complexity compared to URL-based systems.

When K2.5 Might Not Fit

Real-time applications with sub-second latency requirements struggle with processing time, especially for vision and thinking mode. Highly specialized domain tasks might benefit from fine-tuned smaller models. Cost-sensitive, high-volume text generation needs careful ROI analysis at $3 per million output tokens.

Conclusion

Kimi K2.5 is a combination of native multimodal architecture, agent orchestration, and 256K context that creates genuinely novel capabilities beyond incremental improvements.

Frequently Asked Question

Need more information? Here are some queries from fellow AI enthusiasts about Kimi K2.5 and it's features.

More topics you may like

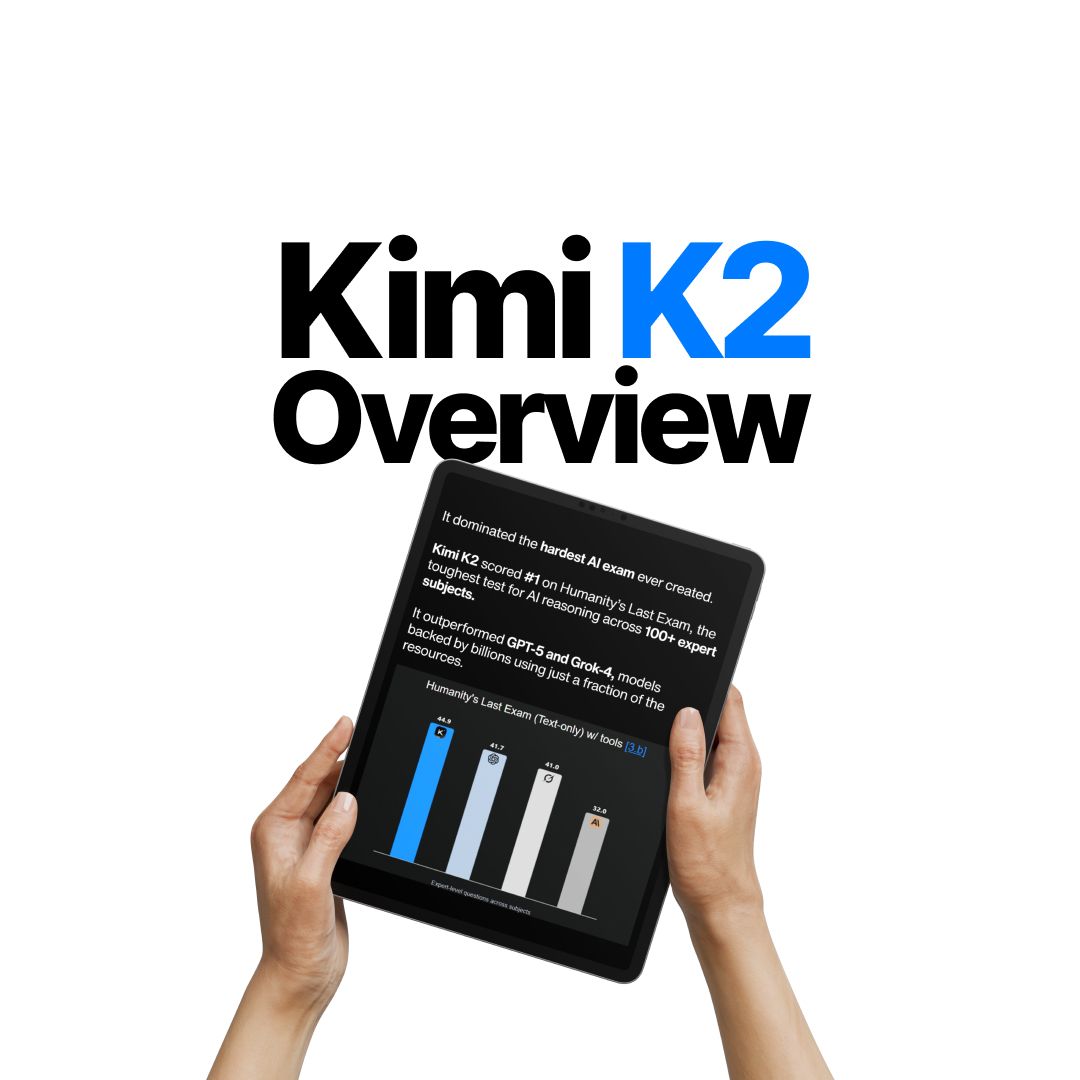

Kimi K2 Overview: Complete Guide to the Open-Source AI That Beats GPT-4.1 & Claude

Faisal Saeed

GPT-5.1 Pricing Explained: How Much Does It Cost?

Faisal Saeed

GPT-5.2 Is Here: What Changed, Why It Matters, and Who Should Care

Faisal Saeed

Gemini 3 Pro Overview: Features, Pricing, and Use Cases

Faisal Saeed

Claude Opus 4.5: The Definitive Guide to Features, Use Cases, Pricing

Faisal Saeed