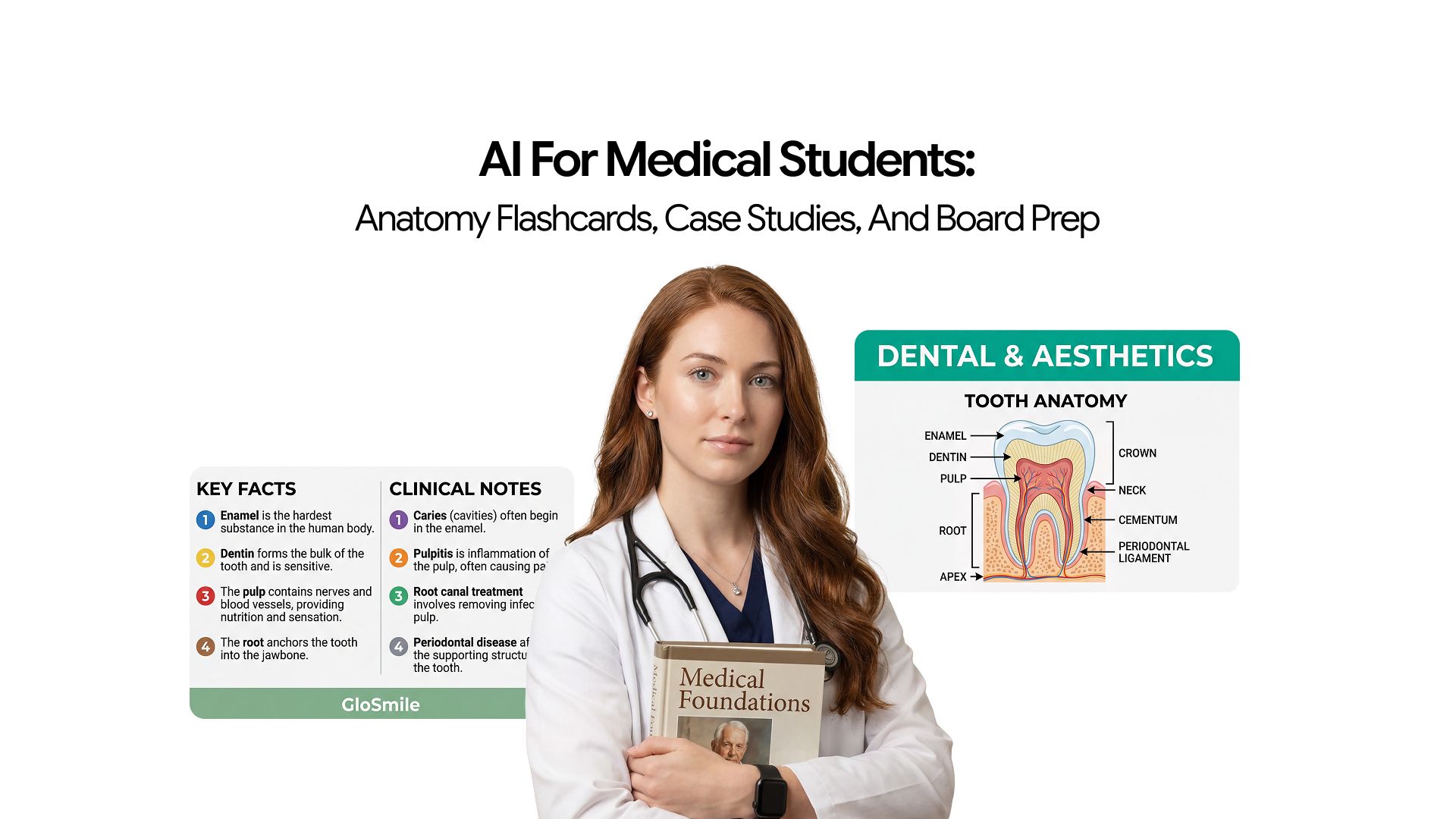

AI for Medical Students: Anatomy Flashcards, Case Studies, and Board Prep

The second year of med school is intense. You have Anki cards, case work, and boards coming up fast. The workload is high and time is limited. AI will not study for you, but it can take care of the low-value, time-heavy tasks that slow you down.

In this guide, you will see how AI helps medical students build anatomy flashcards, break down case studies, and prepare smarter for board exams.

How AI Helps Medical Students

AI helps reduce operational workload in three key areas:

- Building anatomy flashcards

- Working through clinical cases

- Preparing for board exams

It does not replace textbooks, question banks, or supervised clinical training. Instead, it cuts down the time spent turning raw material into study-ready formats, which is where most time is lost before actual learning even begins.

Any AI-generated medical content must always be verified against reliable, authoritative sources before use.

AI for Anatomy Flashcards

Anatomy flashcards are the first major test of whether a student’s study system can scale. The brachial plexus, cranial foramina, femoral triangle, and inguinal canal are all manageable in isolation. Once they are combined with every nerve, vessel, muscle origin, and insertion across all regions of the body, the real challenge is not difficulty but scale.

The key issue is that:

- Each concept is simple on its own

- The total volume of anatomy content is extremely large

- Retention across the entire body becomes the main obstacle

Active recall and spaced repetition are effective methods for high-volume memorisation. However, the bottleneck is not awareness of these methods. It is the lack of enough well-structured, clinically relevant flashcards to make them work effectively.

In practice:

- Building flashcards manually from lecture slides takes a significant amount of time

- Building the cards often takes almost as long as studying the content itself

This is where the scale problem becomes most visible.

Converting Content into Recall Format

AI converts textbook passages and lecture notes directly into question-and-answer pairs. It is fast enough that cards can be generated on the same day as the lecture, while the material is still fresh enough for you to catch errors in the output.

The clinical correlation instruction is what separates useful anatomy cards from cards that test recall in the wrong direction.

A card asking "What is the nerve supply of the deltoid?" tests isolated recall. A card asking "a patient cannot abduct their arm beyond 15 degrees after a shoulder dislocation — which nerve is involved and why" tests applied recall. Board questions are the second kind.

Prompt for clinically relevant anatomy flashcards:

"Convert these anatomy notes into Q&A flashcard pairs. For each structure, create separate cards for: location and boundaries, key relationships to adjacent structures, nerve supply, blood supply, and one clinical correlation connecting this anatomy to a patient presentation or injury pattern. [paste notes]"
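Once the AI returns its cards, they still have to reach Anki. The following is a minimal sketch, assuming you also ask the AI to format each card as a `Q:` line followed by an `A:` line. That layout, the function names, and the two sample cards are assumptions made for illustration. The sketch parses the pairs and produces tab-separated text, a format Anki can import from a plain text file.

```python
import csv
import io

def parse_cards(ai_output: str) -> list[tuple[str, str]]:
    """Parse 'Q: ...' / 'A: ...' line pairs into (question, answer) tuples.

    Assumes the AI was told to use exactly this layout. Lines that do not
    fit the pattern are skipped rather than guessed at.
    """
    cards = []
    question = None
    for raw in ai_output.splitlines():
        line = raw.strip()
        if line.startswith("Q:"):
            question = line[2:].strip()
        elif line.startswith("A:") and question:
            cards.append((question, line[2:].strip()))
            question = None
    return cards

def to_anki_tsv(cards: list[tuple[str, str]]) -> str:
    """Return one card per row as: front <TAB> back."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, delimiter="\t", lineterminator="\n")
    writer.writerows(cards)
    return buffer.getvalue()

# Illustrative sample only; real output must be checked against your sources.
sample = """Q: Which nerve is at risk in a surgical neck fracture of the humerus?
A: Axillary nerve
Q: Which muscle initiates arm abduction?
A: Supraspinatus
"""

cards = parse_cards(sample)
tsv_text = to_anki_tsv(cards)
print(tsv_text)
```

Save `tsv_text` to a `.txt` file and import it into the deck of your choice. Reading through the parsed list before import is a natural point to verify each card against the lecture or textbook.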

For anatomy content in uploaded PDFs, lecture slides, or scanned notes, the Chat PDF feature queries the material directly and generates cards from specific sections without manually copying content into a prompt.

Organising High-Yield Knowledge

Not all anatomy appears on boards with equal frequency. The structures that dominate exam content reflect specific clinical relevance:

- Common peripheral nerve injury patterns and their motor and sensory deficits

- Structures at risk during specific surgical approaches

- Anatomical relationships that explain clinical presentations like hernias, compartment syndromes, or referred pain

- Dermatomes and myotomes tested in neurological examination questions

- Foraminal contents of the skull base and their clinical correlations

After generating a card set from lecture content, ask AI to filter it: "Which of these structures are highest yield for USMLE Step 1 and which are unlikely to appear in board-format questions?" This prioritises the deck before it enters Anki.

Anki with the AnKing deck enables spaced repetition at the scale needed for medical school. The AnKing deck includes over 30,000 USMLE-focused cards organised by First Aid chapters.

Most students use a hybrid approach:

- AnKing for broad USMLE coverage

- AI-generated cards for course-specific content

However, pre-built decks like AnKing do not fully cover deeper curriculum details, which is why custom AI-made cards are often added.

The summary generator condenses dense anatomy chapters into the key facts before flashcard generation, which is useful when the lecture was thin and the textbook is the primary source.

Improving Retention Efficiency

The most common flashcard failure is cards that are too broad to function as retrieval practice. "Describe the brachial plexus" cannot be answered in a timed recall exercise. "Which cord of the brachial plexus gives rise to the musculocutaneous nerve?" can be. The specificity of the question determines whether the card drives genuine recall or passive re-reading dressed up as active study.
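A quick automated pass can catch the broadest cards before they reach the deck. This is a rough heuristic sketch, not a validated rule: the list of opening words, the 25-word answer limit, and the function name are all assumptions you would tune to your own cards.

```python
# Openers that usually signal an essay-style card rather than a recall card.
# This list is an assumption for illustration; adjust it to your own deck.
BROAD_OPENERS = ("describe", "explain", "discuss", "outline")

def is_too_broad(question: str, answer: str, max_answer_words: int = 25) -> bool:
    """Flag a card if it opens with a broad verb or has a very long answer."""
    opens_broadly = question.strip().lower().startswith(BROAD_OPENERS)
    long_answer = len(answer.split()) > max_answer_words
    return opens_broadly or long_answer

cards = [
    ("Describe the brachial plexus", "Roots, trunks, divisions, cords, branches"),
    ("Which nerve supplies the deltoid?", "Axillary nerve"),
]

flagged = [question for question, answer in cards if is_too_broad(question, answer)]
print(flagged)
```

Flagged cards are candidates for splitting into several narrower cards, either by hand or by asking the AI to break them down.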

Two card types worth generating explicitly for anatomy:

- Reverse cards: given a clinical presentation or loss of function, identify the structure. This tests recall in the direction clinical reasoning actually uses, from presentation to anatomy, rather than from anatomy to definition

- Comparison cards: given two similar structures, such as the median and ulnar nerves, identify the key differences in distribution, injury patterns, and clinical findings. These prevent the board exam failure pattern of confusing closely related structures under time pressure

Additional card types that cover anatomy systematically and appear frequently on boards:

- "What passes through" cards — for foramina, spaces, and tunnels, listing the structures and their clinical significance when blocked or damaged

- Embryological origin cards — connecting anatomical structures to their developmental origin for questions where congenital anomalies drive the clinical picture

- Surface anatomy cards — connecting internal structures to external landmarks used in clinical examination

AI for Clinical Case Studies

Clinical reasoning develops through one repeated cognitive process: encounter a clinical presentation, generate a broad differential diagnosis, work through discriminating features, narrow to the most likely diagnosis, and identify the next step.

The problem in preclinical years is that supervised access to this process is limited, and textbook cases feel passive because the reasoning structure is never made explicit.

AI acts as an interactive case partner that:

- Walks through diagnostic logic step by step

- Makes the reasoning process visible

- Supports understanding without replacing clinical exposure that builds genuine judgment

Structuring Case Information

Every clinical case contains relevant information and noise. Learning to separate them quickly is the foundation of clinical reasoning.

A 45-year-old with hypertension, a productive cough for three days, a fever of 38.9°C, right lower lobe consolidation, and a smoking history presents differently from a 70-year-old on immunosuppressants with the same findings. Identifying which features are discriminating and which are incidental is the skill that case practice develops.

Paste a case into AI and ask it to organise the information before reasoning begins:

- Chief complaint with duration and onset

- History features that raise or lower the probability of specific diagnoses

- Examination findings with their clinical significance explained

- Investigation results and what each one confirms or rules out

- Red flag features that change the urgency or direction of management

For cases that come from uploaded clinical PDFs, textbook case collections, or scanned handouts, the Chat PDF feature lets you query the document directly and extract the structured case information without copying it manually.

Supporting Diagnostic Reasoning

Most students short-circuit differential diagnosis by jumping to the most obvious or most recently studied condition. AI models the process of generating a broad differential and narrowing it systematically, which is the exact reasoning pattern that USMLE and clinical exams test.

Work through cases interactively rather than asking AI to solve them in one step:

- Start with only the chief complaint and ask for an initial broad differential

- Add history features one at a time and ask how each one shifts the differential

- Add examination findings and ask what each confirms or makes less likely

- Add investigation results and ask what the most likely diagnosis is now, and what the next management step would be

- Ask AI to explain what would need to be different in the case for the second most likely diagnosis to be correct instead
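The stepwise approach above can be prepared in advance so that each piece of the case is revealed in its own prompt. This is a minimal sketch: the `Case` class, the `staged_prompts` function, and the prompt wording are hypothetical, and the example case reuses the findings from the 45-year-old described earlier.

```python
from dataclasses import dataclass, field

@dataclass
class Case:
    chief_complaint: str
    history: list[str] = field(default_factory=list)
    exam: list[str] = field(default_factory=list)
    investigations: list[str] = field(default_factory=list)

def staged_prompts(case: Case) -> list[str]:
    """Build one prompt per disclosure step, in the order you would send them."""
    prompts = [
        f"Chief complaint: {case.chief_complaint}. "
        "Give an initial broad differential diagnosis."
    ]
    for item in case.history:
        prompts.append(
            f"New history feature: {item}. How does this shift the differential?"
        )
    for item in case.exam:
        prompts.append(
            f"Examination finding: {item}. "
            "What does this confirm or make less likely?"
        )
    if case.investigations:
        results = "; ".join(case.investigations)
        prompts.append(
            f"Investigation results: {results}. What is the most likely "
            "diagnosis now, and what is the next management step?"
        )
    prompts.append(
        "What would need to be different in this case for the second most "
        "likely diagnosis to be correct instead?"
    )
    return prompts

case = Case(
    chief_complaint="productive cough for three days",
    history=["hypertension", "smoking history"],
    exam=["fever of 38.9°C"],
    investigations=["right lower lobe consolidation"],
)

prompts = staged_prompts(case)
for number, prompt in enumerate(prompts, start=1):
    print(f"{number}. {prompt}")
```

Sending the prompts one at a time, and writing down your own differential before reading each reply, keeps the exercise active rather than passive.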

Amboss offers over 700 clinical case simulations built around this same step-by-step framework and is the dedicated clinical reasoning tool most commonly used alongside general AI for this purpose.

Connecting Signs to Physiology

Memorising that peripheral oedema appears in right heart failure is one level of understanding. Knowing why it appears, what drives the haemodynamic sequence, and how that mechanism changes in biventricular failure is the level that allows you to reason through atypical presentations and complex cases.

This is what distinguishes a student who has learned medicine from one who has memorised it.

After working through a case, ask AI to explain the physiological mechanism behind each key finding. Then follow up with the specific questions that extend the mechanism:

- What happens to this finding when the condition progresses?

- What changes the mechanism in a different patient population?

- Which medications work on this mechanism and at which point in the sequence?

- What physical examination finding would you expect to change first as the condition worsens?

The Ask AI app handles these mechanistic physiology questions with the depth and specificity that a broad textbook overview does not deliver when you need a targeted answer to a specific follow-up.

For researching the epidemiology, risk factors, or management guidelines behind a condition that appeared in a case, the AI search engine retrieves current information and reduces the risk of landing on an unreliable source.

AI for Board Exam Preparation

Board preparation is a prioritisation problem at scale. The USMLE syllabi are enormous, and question distribution across topics is not uniform. Students who perform best are those who identify weak areas, allocate study time accordingly, and use high-yield resources efficiently.

AI helps at three key points in board preparation:

- Organising what to study

- Generating targeted practice material

- Building a revision schedule around actual gaps rather than comfort zones

Organising High-Yield Content by System

Board questions integrate pathology, pharmacology, and physiology within a single clinical vignette. A question about a patient with heart failure tests cardiac physiology, diuretic pharmacology, and fluid overload pathophysiology simultaneously. Studying these as separate subjects rather than as an integrated system is one of the most common structural weaknesses in board preparation.

First Aid remains the primary high-yield reference. AI is most useful for elaborating on it rather than replacing it:

- Paste a First Aid entry and ask AI to expand on the underlying mechanism

- Ask AI to connect the entry to a clinical vignette format that mirrors how it will appear on boards

- Ask AI to identify which other First Aid topics are commonly tested alongside this one in the same question

- Ask AI to generate the two or three most commonly tested wrong answer choices for this topic and explain why they are wrong

For condensing dense First Aid sections or pathology chapters into key facts before generating flashcards or summaries, the summary generator extracts the high-yield points without requiring you to read the full chapter first.

For building structured system-based study guides that consolidate your notes, case summaries, and high-yield content into a single reference document for each exam block, the AI document generator handles that formatting efficiently.

Generating Practice Questions

Active recall through practice questions is significantly more effective for board preparation than passive review. AI generates USMLE-style MCQs when prompted specifically:

"Write three USMLE Step 1-style multiple choice questions on [topic]. For each question, include a clinical vignette with age, sex, and presentation, four answer choices with one correct answer, and a detailed explanation of why each answer is correct or incorrect. Make the wrong answers plausible rather than obviously incorrect."

The explanation of incorrect answers is the most valuable part. Understanding why a plausible wrong answer is wrong addresses the specific reasoning errors that produce wrong answers under exam pressure, which is more useful than confirming why the right answer is right.

UWorld remains the gold standard question bank. AI-generated questions serve two specific purposes alongside it:

- Supplementing coverage on topics where UWorld questions feel insufficient for the depth required

- Generating targeted practice on weak areas identified from UWorld performance data rather than working through questions at random

Building a Revision Plan Around Weak Areas

The most common board prep mistake is spending the final weeks reviewing what is already understood rather than addressing identified gaps. AI generates a structured revision schedule when given the time available and the specific weak areas:

"I have six weeks before Step 1. Based on my UWorld performance, my weak areas are renal pathophysiology, endocrine pharmacology, and neuroanatomy. Build a daily revision schedule that allocates proportionally more time to these areas while maintaining coverage of high-yield content across all other systems."

The output is a starting point. Adjust it weekly as practice question performance reveals new gaps or confirms that previously weak areas have been addressed.
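The "proportionally more time" instruction in the prompt above is simple arithmetic, so it is worth checking the AI's schedule against your own numbers. This is a sketch under stated assumptions: the 40 study hours per week, the weights, and the `allocate_hours` function are hypothetical values chosen for illustration, with each weak area weighted at 2 and all remaining systems together weighted at 4.

```python
def allocate_hours(total_hours: float, weights: dict[str, float]) -> dict[str, float]:
    """Split total_hours across topics in proportion to their weights."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return {
        topic: round(total_hours * weight / total_weight, 1)
        for topic, weight in weights.items()
    }

# Hypothetical weights: higher means weaker, so more time is allocated.
weights = {
    "Renal pathophysiology": 2,
    "Endocrine pharmacology": 2,
    "Neuroanatomy": 2,
    "All other systems": 4,
}

plan = allocate_hours(40, weights)
for topic, hours in plan.items():
    print(f"{topic}: {hours} h/week")
```

With these weights, each weak area receives 8 hours a week and the remaining systems share 16. Lowering a weight once practice question performance improves is the weekly adjustment described above.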

Integrating AI into a Daily Study Workflow

The students who benefit most from AI use it consistently as part of a daily routine rather than reaching for it only when stuck. The operational overhead that AI removes (creating flashcards, structuring cases, organising practice material) compounds across a semester when done manually. Building the habit early is what makes the time savings meaningful.

Daily and Weekly Rhythm

A practical routine that keeps the workload manageable across the full academic year:

- After each lecture — paste the key content into AI and generate flashcards immediately while the material is fresh enough to catch output errors. Add cards to Anki the same day rather than batching them at the weekend

- Each morning — complete Anki reviews before new content begins, keeping the daily new card count manageable so the review burden does not compound uncontrollably across the semester

- Once a week — work through two or three clinical cases interactively, using AI to build the differential and explain the physiological mechanisms behind each finding

- Once a month — generate a batch of practice questions on the topics covered that month and use the wrong answer explanations to identify gaps and update the revision plan

Osmosis is worth using alongside this workflow for visual anatomy and physiology content, particularly for processes that benefit from animated explanation rather than text-based encoding.

Building a Study Guide Incrementally

A study guide assembled over the course of an exam block is significantly more useful in the final week before an exam than one built from scratch under time pressure. As each system is studied, the structured notes, case summaries, and high-yield content can be consolidated into a single reference document.

For students moving into research reading alongside their coursework, the guide to understanding dense research papers explains how to use AI to read primary medical literature using the same structured approach applied to clinical cases here.

What AI Cannot Do in Medical Education

Understanding the limits of AI in a medical learning context is as important as knowing how to use it. Three failure modes matter enough to state directly:

Verification Against Authoritative Sources

All AI-generated content must be verified against authoritative sources. A flashcard generated from lecture notes may contain an error from the original material or one introduced by AI. First Aid, Robbins, and standard textbooks remain the reference points for what is clinically true. AI is used for structuring and practice, while textbooks establish factual correctness.

AI Oversimplifies Complex Mechanisms

AI explanations of pathophysiological pathways are often simplified. While this may be sufficient for board-style questions, it can be limiting for understanding atypical presentations, where missing nuance becomes important. Simplified explanations should be treated as entry points, not complete representations of the concept.

AI Cannot Replace Supervised Clinical Training

AI cannot replace supervised clinical training. Clinical reasoning develops through real patient encounters where feedback is immediate and consequences are real. AI case simulations help build reasoning patterns, but they do not provide the clinical exposure that real-world competency requires and that clinical examinations assess.

For students moving into broader academic research workflows in medicine, including literature reviews, citation tracking, and thesis structuring, the AI for PhD students guide covers those workflows in full.

Conclusion

The volume of medical school is not going to decrease. What AI changes is the ratio between time spent creating study materials and time spent actually using them. Cards that take two hours to build by hand can take twenty minutes. A differential that takes three textbook passes to reason through can take one interactive session. A revision plan that takes a week to organise can start from a single prompt.

The learning still happens in the student. AI removes the friction that gets in the way of it.
