AI Localization: What It Is, How It Works, and Best Practices

Most teams think they are doing AI localization. But the majority are just running machine translation with a modern label on it.

The gap between the two is not a minor technical distinction. It is the difference between content that converts in a new market and content that quietly confuses the audience it was built for. Getting this right is what separates teams that scale globally from teams that translate globally and wonder why nothing sticks.

This guide covers what AI localization is, how the technology works, where most workflows break down, and how to build a process that produces quality output at scale regardless of team size or budget.

What Is AI Localization?

AI localization is the use of artificial intelligence, specifically large language models (LLMs), to adapt content for specific languages, cultures, and markets. It goes beyond converting words from one language to another. It accounts for tone, cultural context, brand voice, regional conventions, and the practical constraints of where content will appear.

The scope is broader than most teams initially assume. AI localization covers:

- Website copy and landing pages

- Product UI strings and in-app messaging

- Metadata, title tags, and alt text

- Marketing content and ad copy

- Support documentation and FAQs

- Legal disclaimers and compliance content

It is not a single tool. It is an intelligence layer applied across the entire localization workflow.

How AI Localization Differs from Traditional Localization

Traditional localization relies entirely on human translators and localization experts who understand both the source and target language and culture. Output quality is high, but turnaround is slow and cost scales linearly with volume.

AI localization inverts that equation. It handles volume and context instantly and at a fraction of the per-word cost. Human experts shift from doing the translation to reviewing and refining it, which means their time is spent on judgment calls rather than first-pass conversion.

The result: AI handles the parts of localization that do not require cultural judgment, so humans can focus entirely on the parts that do.

From Rule-Based Machine Translation to LLMs

Early machine translation (MT) systems operated on rigid linguistic rules. They looked up words in a dictionary, applied grammatical substitution, and produced output that was technically composed of real words but frequently made no sense in context.

Imagine an idiom like “spill the beans” rendered word for word in the target language: the reader gets a sentence about beans, not a request to reveal a secret.

Statistical machine translation, which powered early versions of Google Translate, improved on this by learning patterns from large bilingual datasets. It was better, but still fundamentally context-blind. It translated strings, not meaning.

Large language models changed the architecture entirely. LLMs are trained on vast multilingual datasets and process entire paragraphs at once rather than individual strings. They understand how language works in context, not just how words map to each other. That is the foundation that makes modern AI localization meaningfully different from what came before it.

How AI Localization Works

Understanding the technology is not about knowing the engineering. It is about knowing what inputs the system needs to produce reliable output, and where human judgment still needs to sit in the loop.

How Large Language Models Power AI Translation

LLMs and AI chat models are trained on enormous multilingual datasets and process language at the level of full sentences and paragraphs rather than individual words. They do not look up translations. They understand meaning in context and generate output accordingly.

The practical implication is significant. An LLM can be told, in plain language, to translate a piece of marketing copy in a casual and conversational tone for a Brazilian audience that uses informal address.

It understands and executes that instruction in a way that no rule-based translation system could.
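As a rough illustration of what "told in plain language" can look like, the sketch below composes such an instruction as a prompt string. The function name and parameters are hypothetical, and the step of sending the prompt to a model is deliberately left out because it depends on the tool you use.

```python
def build_translation_prompt(text: str, target_locale: str, tone: str, address: str) -> str:
    """Compose a plain-language translation instruction for an LLM.

    Hypothetical helper: it only builds the prompt text and does not call any model.
    """
    return (
        f"Translate the following marketing copy for a {target_locale} audience.\n"
        f"Tone: {tone}.\n"
        f"Form of address: {address}.\n"
        "Adapt idioms and cultural references instead of translating them literally.\n\n"
        f"Text:\n{text}"
    )


prompt = build_translation_prompt(
    text="Start your free trial today.",
    target_locale="Brazilian Portuguese (pt-BR)",
    tone="casual and conversational",
    address="informal",
)
print(prompt)
```

Keeping tone, audience, and form of address as separate parameters makes it easy to reuse the same instruction template across markets.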

LLM output also improves as you give the model more of your own material. When fine-tuned on, or supplied at prompt time with, your brand's existing content, approved translations, and terminology, LLMs produce output that is more consistent and more on-brand.

Why Glossaries and Style Guides Are Critical AI Inputs

An LLM without structured inputs will produce translations that are linguistically correct but brand-inconsistent. If your product refers to users as "members" rather than "customers," an LLM without a glossary will choose whatever term appears most frequently in its training data, not the one your brand uses.

Two documents determine the quality of your AI localization output before a single word is translated:

- A glossary that defines how brand-specific terms, product names, and industry jargon should be handled across every language

- A style guide that defines tone, formality level, and voice for each target market

Together, they are the inputs that make AI localization controllable rather than unpredictable. Teams that skip this step spend more on human correction than they saved on AI translation.
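One possible way to keep these two documents machine-readable is sketched below. The class names, fields, and sample entries are assumptions made for illustration, not the format of any particular localization platform.

```python
from dataclasses import dataclass


@dataclass
class StyleGuide:
    tone: str
    formality: str


@dataclass
class LocalizationKit:
    # source term -> {locale: approved translation}; entries here are illustrative
    glossary: dict[str, dict[str, str]]
    # locale -> style guide for that market
    style_guides: dict[str, StyleGuide]

    def instructions_for(self, locale: str) -> str:
        """Render the glossary and style guide for one locale as prompt instructions."""
        guide = self.style_guides[locale]
        lines = [
            f"Tone: {guide.tone}",
            f"Formality: {guide.formality}",
            "Required terminology:",
        ]
        for source_term, translations in sorted(self.glossary.items()):
            if locale in translations:
                lines.append(f'- "{source_term}" -> "{translations[locale]}"')
        return "\n".join(lines)


kit = LocalizationKit(
    glossary={"member": {"es-ES": "miembro", "pt-BR": "membro"}},
    style_guides={
        "es-ES": StyleGuide(tone="warm and direct", formality="informal"),
        "pt-BR": StyleGuide(tone="casual", formality="informal"),
    },
)
print(kit.instructions_for("es-ES"))
```

The rendered text can be attached to every translation request for that locale, so the model sees the approved term for "member" instead of guessing.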

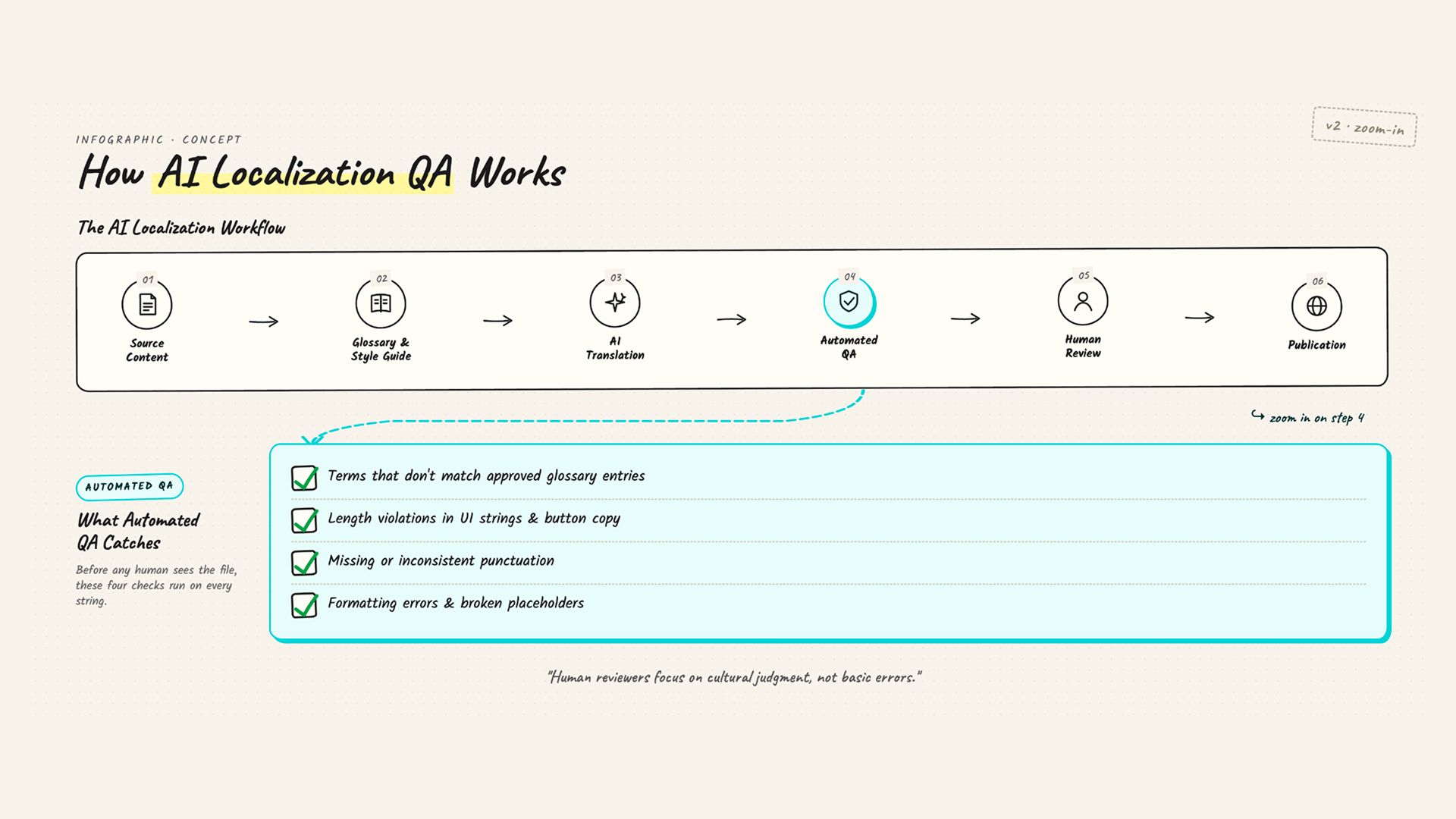

How Automated Quality Assurance Speeds Up the Review Process

Modern AI localization platforms include automated QA that runs before a human reviewer ever sees the output. It checks translations against the glossary and catches issues that would otherwise slow down human review significantly:

- Terms that do not match approved glossary entries

- Length violations in UI strings and button copy

- Missing or inconsistent punctuation

- Formatting errors and broken placeholders

This pre-screening step is what makes the hybrid model fast. Human reviewers are not correcting basic errors. They are evaluating cultural judgment, brand nuance, and context, which is exactly where human expertise is irreplaceable.
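The four checks listed above can be approximated in a few lines. This is a simplified sketch, not how any specific platform implements QA: the function name is hypothetical, placeholders are assumed to use `{name}` syntax, and glossary matching is a naive substring test.

```python
import re

# Assumption: placeholders look like {name}; adjust the pattern for other formats.
PLACEHOLDER = re.compile(r"\{[A-Za-z_][A-Za-z0-9_]*\}")


def ends_with_terminal_punctuation(text: str) -> bool:
    return text.rstrip().endswith((".", "!", "?"))


def qa_check(source, translation, glossary, max_length=None):
    """Return a list of issue strings for one translated string."""
    issues = []
    # Glossary: if a source term appears, its approved translation must appear too.
    # Naive substring match, so "member" would also match "remember".
    for source_term, approved in glossary.items():
        if source_term.lower() in source.lower() and approved.lower() not in translation.lower():
            issues.append(f"glossary: expected '{approved}' for '{source_term}'")
    # Length: UI strings and button copy often have a hard character limit.
    if max_length is not None and len(translation) > max_length:
        issues.append(f"length: {len(translation)} characters exceeds limit of {max_length}")
    # Placeholders: the same set must survive translation unchanged.
    if sorted(PLACEHOLDER.findall(source)) != sorted(PLACEHOLDER.findall(translation)):
        issues.append("placeholder: source and translation placeholders differ")
    # Punctuation: flag a dropped sentence-final mark.
    if ends_with_terminal_punctuation(source) and not ends_with_terminal_punctuation(translation):
        issues.append("punctuation: terminal punctuation missing")
    return issues


issues = qa_check(
    source="Welcome back, {name}! Your member discount is ready.",
    translation="¡Bienvenido de nuevo, {nombre}! Tu descuento de cliente está listo",
    glossary={"member": "miembro"},
    max_length=40,
)
for issue in issues:
    print(issue)
```

In this example all four checks fire: the glossary term is wrong, the string is too long, the placeholder was translated, and the final period was dropped.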

The Business Case for AI Localization: Cost, Speed, and Scale

The business case for AI localization is not theoretical. It is measurable at every stage of the workflow.

- Cost: Professional human translation averages $0.22 per word in the US market. For a 10,000-word product suite localized into five languages, that is $11,000 before revisions. AI localization reduces per-word costs by 60 to 80% on average.

- Speed: A traditional localization workflow for a significant product update across five languages can take three to six weeks depending on translator availability and revision cycles. AI localization handles the same volume in hours.

- Scale: AI localization does not degrade as content volume increases. A lean team can localize into fifteen languages simultaneously with the right tooling. That is operationally impossible with a human-only workflow regardless of budget.
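The cost figure above is simple arithmetic, and it is worth running the same calculation with your own volumes. The sketch below uses the numbers from this section and works in integer cents to avoid rounding noise. It applies only the stated per-word reduction and, as an assumption of this example, ignores the cost of human review.

```python
WORDS = 10_000
LANGUAGES = 5
HUMAN_RATE_CENTS = 22  # $0.22 per word, the figure quoted above

human_cost_cents = WORDS * LANGUAGES * HUMAN_RATE_CENTS


def cost_after_reduction(cost_cents: int, reduction_pct: int) -> int:
    """Apply a percentage reduction to a cost expressed in cents."""
    return cost_cents * (100 - reduction_pct) // 100


low = cost_after_reduction(human_cost_cents, 80)   # best case: 80% reduction
high = cost_after_reduction(human_cost_cents, 60)  # conservative case: 60% reduction

print(f"Human-only:  ${human_cost_cents / 100:,.2f}")                # $11,000.00
print(f"AI-assisted: ${low / 100:,.2f} to ${high / 100:,.2f}")       # $2,200.00 to $4,400.00
```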

For teams beginning their first language expansion, Chatly's English to Spanish Translator offers an accessible entry point to AI-powered translation before committing to full localization infrastructure.

Common Misconceptions About AI Localization

The fastest way to under-invest or over-invest in AI localization is to hold one of these three beliefs without examining them.

1. AI localization replaces human translators

It does not. It repositions them.

Human translators using AI localization tools are producing more output, catching more nuanced errors, and spending their time on work that actually requires cultural fluency.

Teams that frame AI localization as a replacement strategy end up with content that has no human review layer and find out the cost of that gap when something lands badly in a market.

2. AI localization is error-free

It is not. LLMs hallucinate, misread idioms, and produce inconsistent terminology when glossary inputs are weak or absent. The hybrid model, AI translation followed by human review calibrated to content risk, is not optional. It is what makes AI localization reliable rather than merely fast.

3. AI localization only handles simple text

Modern LLMs handle marketing copy, legal content, product UI, support documentation, and video transcription. The scope has expanded considerably as model quality has improved. The limitation is not content complexity.

It is the quality of the inputs and the review process behind them.

Common AI Localization Challenges and How to Solve Them

While AI localization removes several shortcomings of earlier machine translation, it still has challenges of its own.

1. Low-resource languages

LLMs are trained primarily on high-volume languages. Less commonly spoken languages receive less training data, which produces lower-quality raw output.

Apply stricter human review standards to low-resource language outputs and require mandatory in-market review before publication.

2. Missing context

A UI string inside a mobile button carries entirely different constraints than the same phrase in a paragraph. Without context, AI tools make layout-breaking decisions and tonally inconsistent choices. Providing the following alongside every string sent for translation makes a measurable difference:

- Screenshots showing where the content appears

- Character limits for UI-constrained copy

- Usage notes explaining the purpose of each string
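One way to make that context travel with each string is to treat it as part of the string's record. The structure below is a hypothetical sketch; the field names and the sample key are assumptions, not a standard interchange format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TranslationUnit:
    key: str
    source_text: str
    usage_note: str = ""
    max_chars: Optional[int] = None
    screenshot_path: Optional[str] = None

    def missing_context(self) -> list[str]:
        """List the context fields that are still empty for this string."""
        missing = []
        if not self.usage_note.strip():
            missing.append("usage_note")
        if self.max_chars is None:
            missing.append("max_chars")
        if self.screenshot_path is None:
            missing.append("screenshot_path")
        return missing


unit = TranslationUnit(
    key="checkout.submit",
    source_text="Place order",
    usage_note="Primary button on the mobile checkout screen",
    max_chars=14,
)
print(unit.missing_context())  # ['screenshot_path']
```

A check like `missing_context()` can run before strings are sent for translation, so gaps are caught while they are still cheap to fix.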

3. Brand voice consistency across markets

An AI tool without a style guide produces translations that sound technically correct but tonally fractured across markets. Build the style guide before any translation begins and upload it as a standing input to the AI tool.

4. Integration gaps

AI localization delivers its speed advantage only when it connects directly to your CMS, code repository, or design system. Manual file export and import eliminates most of the efficiency gain. Prioritize tools with native integrations or API access from the start.

Once AI translation is complete, Chatly's AI Humanizer adds a post-translation refinement layer that addresses tone and fluency before content reaches final human review.

AI Localization Best Practices for Teams of Any Size

Every tool has to be used efficiently to be worth its cost. LLMs are more capable than earlier systems, but vague instructions and repeated retries drive up token consumption. Be precise and follow these best practices.

1. Build the localization kit before the first translation

A glossary, style guide, and tone notes are not documentation to produce after the workflow is running. They are the inputs that determine whether the workflow produces reliable output. Teams that skip this step spend more correcting AI output than they spent on translation.

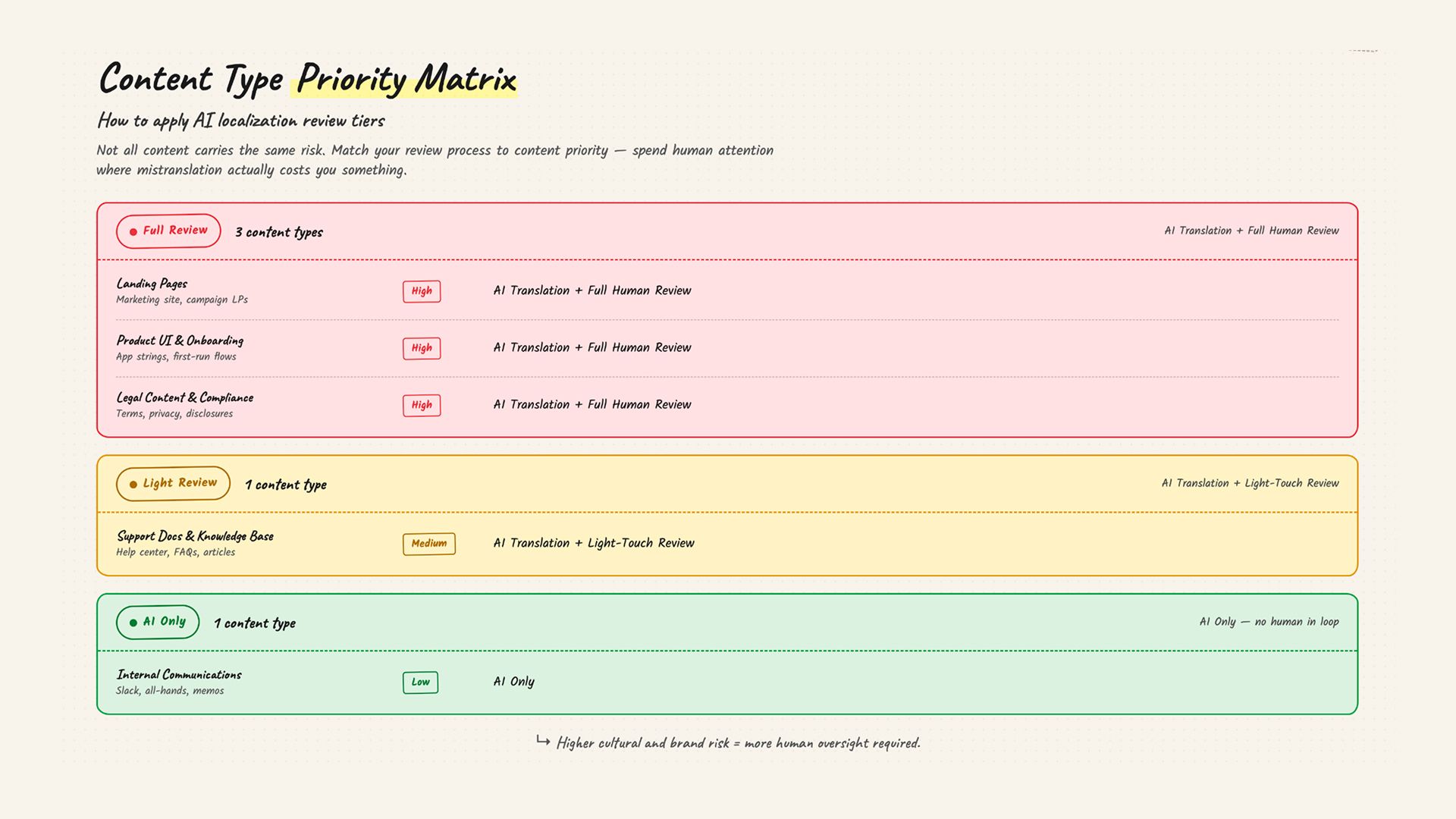

2. Use the hybrid model with content-based review tiers

Not all content carries the same risk. Structure your review process around content priority:

- High-converting landing pages and product onboarding flows: full human review

- Marketing copy and brand-critical content: full human review

- Support documentation and knowledge base articles: light-touch review

- Internal communications: AI-only, no review required
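The tiers above can be written down as a small routing table so that the review decision is made consistently. The content-type keys are hypothetical labels chosen for this sketch, and defaulting unknown content to full review is a design choice of the example, not a rule from this guide.

```python
from enum import Enum


class ReviewTier(Enum):
    FULL_HUMAN = "full human review"
    LIGHT_TOUCH = "light-touch review"
    AI_ONLY = "AI-only, no review required"


# Mapping taken from the tiers listed above; the keys are illustrative labels.
REVIEW_TIERS = {
    "landing_page": ReviewTier.FULL_HUMAN,
    "onboarding_flow": ReviewTier.FULL_HUMAN,
    "marketing_copy": ReviewTier.FULL_HUMAN,
    "support_doc": ReviewTier.LIGHT_TOUCH,
    "internal_comms": ReviewTier.AI_ONLY,
}


def review_tier_for(content_type: str) -> ReviewTier:
    """Return the review tier, falling back to the strictest tier for unknown types."""
    return REVIEW_TIERS.get(content_type, ReviewTier.FULL_HUMAN)


print(review_tier_for("support_doc").value)     # light-touch review
print(review_tier_for("legal_disclaimer").value)  # full human review (fallback)
```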

3. Segment content by complexity before sending it for translation

Slogans, legal copy, and product naming carry higher cultural risk than FAQ answers. Identify content risk before translation begins, not after a problematic translation surfaces in a live market.

4. Connect your tools

AI localization is only as fast as the workflow it sits inside. Standalone tools that require manual file management eliminate the speed advantage that justifies the investment. Native integrations with your CMS or content pipeline are not a nice-to-have.

Tools like Chatly allow you to connect your chat to Slack, Notion, and Google Drive. It also lets you add Sources, which can be activated at any time for personalized results.

5. Measure per market, not globally

Once localized content is live, track these metrics separately for each language version:

- Organic traffic from local search

- Conversion rate on localized pages

- Bounce rate and time on page

A single blended global metric hides what is working, what is not, and where investment should go next.
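A small worked example shows how the blended number hides the signal. All figures below are invented for illustration and do not come from real traffic data.

```python
# Hypothetical sessions and conversions per language version.
pages = {
    "en-US": {"sessions": 10_000, "conversions": 400},
    "es-MX": {"sessions": 2_000, "conversions": 20},
    "ja-JP": {"sessions": 1_000, "conversions": 60},
}


def conversion_rate(sessions: int, conversions: int) -> float:
    return conversions / sessions if sessions else 0.0


per_market = {locale: conversion_rate(**metrics) for locale, metrics in pages.items()}
blended = conversion_rate(
    sum(m["sessions"] for m in pages.values()),
    sum(m["conversions"] for m in pages.values()),
)

for locale, rate in per_market.items():
    print(f"{locale}: {rate:.1%}")   # en-US: 4.0%, es-MX: 1.0%, ja-JP: 6.0%
print(f"blended: {blended:.1%}")     # blended: 3.7%
```

The blended 3.7% looks healthy, yet it conceals that the es-MX version converts at a quarter of the en-US rate while ja-JP outperforms both.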

For teams repurposing existing high-performing content for new markets rather than starting from scratch, Chatly's Paraphrasing and Rewording Tool helps adapt tone and phrasing without rebuilding content from zero.

Why AI Localization Without SEO Is Only Half the Job

This is a gap that AI localization guides often leave unaddressed. AI localization produces content at scale. But if that content is not optimized for local search behavior, it will not reach the audience it was built for.

Translated content and locally optimized content are not the same thing.

One is linguistically accurate. The other is built around what local audiences actually search for, how they phrase their queries, and what intent sits behind those searches. The distinction determines whether localized content generates organic traffic or simply exists in another language.

1. Keyword research in the target language cannot be automated

AI localization tools translate your existing keywords. They cannot tell you what local audiences are actually searching for.

A keyword that drives significant search volume in English may have near-zero volume in its direct translation, or carry a completely different intent in the target market. Local keyword research is a manual step that must happen before content is localized, not after.

2. Every on-page element needs localization, not just body copy

These elements are routinely left in English when body copy is translated:

- Title tags and meta descriptions

- Image alt text and file names

- CTAs and button copy

- Navigation labels and breadcrumbs

Each one affects how search engines index the page and how local users decide whether to click. A perfectly translated page with an English meta description is invisible to a local audience browsing search results.
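A basic pre-publish check can catch elements that were left in the source language. The sketch below covers a subset of the elements listed above; the field names are hypothetical, and comparing against the source text is a crude heuristic, since brand names can legitimately stay identical.

```python
# Illustrative field names for a subset of the on-page elements listed above.
ON_PAGE_FIELDS = ["title_tag", "meta_description", "image_alt", "cta", "nav_label"]


def untranslated_fields(source_page: dict, localized_page: dict) -> list[str]:
    """Return fields that are empty or still identical to the source-language page."""
    return [
        field
        for field in ON_PAGE_FIELDS
        if not localized_page.get(field) or localized_page[field] == source_page.get(field)
    ]


source_page = {
    "title_tag": "Pricing plans",
    "meta_description": "Compare plans and pick the one that fits.",
    "image_alt": "Pricing table",
    "cta": "Start free trial",
    "nav_label": "Pricing",
}
localized_page = {
    "title_tag": "Planes de precios",
    "meta_description": "Compare plans and pick the one that fits.",  # left in English
    "image_alt": "",                                                  # never filled in
    "cta": "Empieza tu prueba gratis",
    "nav_label": "Precios",
}
print(untranslated_fields(source_page, localized_page))  # ['meta_description', 'image_alt']
```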

3. Technical SEO does not localize itself

Hreflang tags tell search engines which language version to serve to which user. Incorrect or missing hreflang is one of the leading causes of international SEO failure.

URL structure and canonical configuration require deliberate setup. AI translation tools do not touch these. A beautifully localized page with broken hreflang is effectively invisible to the right audience in organic search.
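For reference, hreflang annotations in a page's head are `<link rel="alternate">` elements, one per language version plus an optional `x-default` fallback. The sketch below generates them from a locale-to-URL map. The function name and the example.com URLs are placeholders for illustration.

```python
from html import escape


def hreflang_tags(alternates: dict[str, str], default_locale: str) -> list[str]:
    """Build hreflang link tags for every language version, plus an x-default fallback."""
    tags = [
        f'<link rel="alternate" hreflang="{escape(locale)}" href="{escape(url)}" />'
        for locale, url in alternates.items()
    ]
    # x-default tells search engines which version to use when no locale matches.
    tags.append(
        f'<link rel="alternate" hreflang="x-default" href="{escape(alternates[default_locale])}" />'
    )
    return tags


tags = hreflang_tags(
    {
        "en": "https://example.com/",
        "es": "https://example.com/es/",
        "ja": "https://example.com/ja/",
    },
    default_locale="en",
)
print("\n".join(tags))
```

The same full set of tags, including the page's reference to itself, belongs on every language version, because annotations that are not reciprocal may be ignored.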

For teams targeting high-growth search markets, Chatly's English to Hindi Translator, English to Urdu Translator, and English to Japanese Translator provide an accessible foundation for market entry before investing in full SEO infrastructure.

For a deeper look at building a content strategy that works across international markets, the guide on how to localize content for international markets covers the broader localization strategy layer that sits above the AI workflow.

Start AI Localization Without Enterprise-Level Infrastructure

The tools and workflows described in this guide are not reserved for businesses with dedicated localization teams and enterprise software budgets. The fundamentals are accessible at any scale, and the starting point is simpler than most teams expect.

- Pick one market where you already have a demand signal, whether that is organic traffic, signups, or direct inquiries from non-English speakers.

- Localize your highest-converting page first.

- Build the glossary and style guide before that first translation goes out, even if it is a single page that defines tone, key terminology, and brand voice.

- Choose tools like Chatly that connect to your existing content workflow.

The efficiency gain from AI localization disappears quickly if every localization cycle involves manual file exports and imports. You do not need a full localization team to apply human review well. A native speaker who understands your product reviewing your most critical pages before they go live is sufficient.

Everything else can move through AI with lighter oversight calibrated to content risk.

