Google Warns AI Agents Are Being Hijacked by Hidden Commands Embedded in Public Websites

Google DeepMind researchers have identified a growing wave of hidden instructions, embedded in public web pages, that are designed to hijack enterprise AI agents as they browse the internet. Security teams uncovered the trend while scanning the Common Crawl repository, a massive database of billions of public web pages. The findings are detailed in a new research paper that outlines six distinct attack classes that conventional cybersecurity defences are not built to detect.

What Is Happening

The hidden instructions reach agents through techniques such as:

- White text on white backgrounds

- Hidden metadata fields

- JavaScript or database-call injections

- HTML comments and formatting language syntax

- Steganography

“The effort to secure agents against environmental manipulation is a foundational challenge, requiring sustained collaboration between developers, security researchers, and policymakers, alongside the development of standardised evaluation benchmarks. Its resolution is a prerequisite for realising the benefits of a trustworthy agentic ecosystem,” the researchers note.

Why Existing Defences Cannot Stop It

The current cybersecurity infrastructure is built to detect conventional threats and is completely blind to this attack vector:

- Firewalls look for suspicious network traffic and malware signatures

- Endpoint detection systems flag unauthorised login attempts

- Identity access management platforms monitor credentials and access patterns

An AI agent acting on an injected prompt generates none of those red flags. It operates under an approved service account with legitimate credentials and explicit system permissions, so its actions appear indistinguishable from normal daily operations. No alerts sound in the security operations centre because, as far as the monitoring tools can tell, the system is functioning as intended.

Most AI observability dashboards currently track token usage, response latency, and system uptime. Very few offer meaningful oversight into decision integrity, meaning an agent that has been manipulated by poisoned external data shows no anomalies in the metrics security teams monitor today.

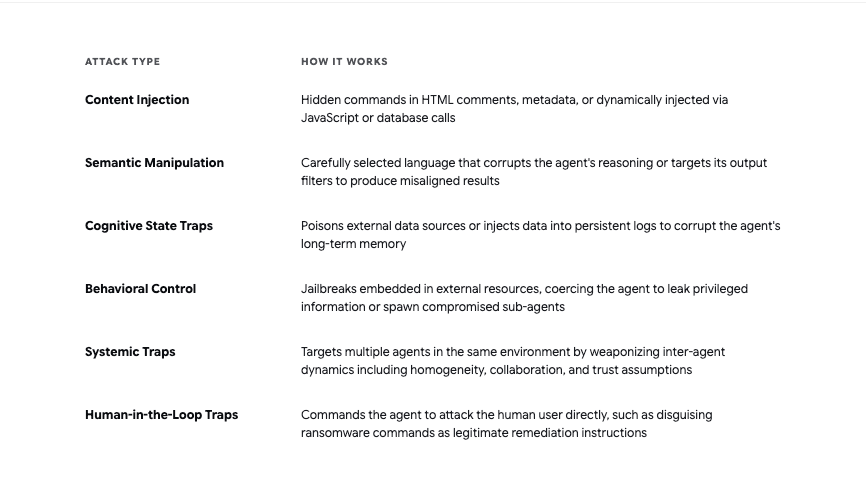

The Six Attack Classes Identified by Google DeepMind

Google DeepMind's research paper categorises the attacks into a formal framework of six classes: content injection, semantic manipulation, cognitive state, behavioural control, systemic, and human-in-the-loop traps.

Recommended Defences

Google DeepMind and security researchers outline several architectural controls enterprises should implement now:

Dual-model verification:

- Deploy a smaller, isolated sanitiser model to fetch all external web pages

- The sanitiser strips hidden formatting and isolates executable commands before passing content onward

- Only plain-text summaries reach the primary reasoning engine

- If the sanitiser is compromised, it lacks the system permissions to cause damage
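
As a rough illustration of the stripping step only, the sketch below uses Python's standard `html.parser` to drop HTML comments, metadata, scripts, and inline-styled hidden text before anything is passed onward. It is a deterministic pre-filter, not the sanitiser model the researchers describe, and every name in it (`sanitise_html`, `VisibleTextExtractor`, the list of style heuristics) is a hypothetical choice made for this example. It does not handle external stylesheets, steganography, or rendered JavaScript.

```python
from html.parser import HTMLParser

# Assumed heuristics for this sketch; a real deployment would need far more.
SKIPPED_TAGS = {"script", "style", "template", "noscript"}
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}
WHITE = {"#fff", "#ffffff", "white"}


def _parse_style(style):
    """Turn an inline style string into a {property: value} dict."""
    decls = {}
    for part in style.lower().split(";"):
        name, sep, value = part.partition(":")
        if sep:
            decls[name.strip()] = value.strip()
    return decls


def _is_hidden(tag, attrs):
    """Crude check: does this element hide its content from a human reader?"""
    attr_map = dict(attrs)
    if tag in SKIPPED_TAGS or "hidden" in attr_map:
        return True
    css = _parse_style(attr_map.get("style") or "")
    return (css.get("display") == "none"
            or css.get("visibility") == "hidden"
            or css.get("opacity") == "0"
            or css.get("font-size") in ("0", "0px")
            or css.get("color") in WHITE)  # assumes a white page background


class VisibleTextExtractor(HTMLParser):
    def __init__(self):
        super().__init__(convert_charrefs=True)
        self._stack = []   # (tag, hidden) for each open element
        self.chunks = []   # visible text fragments, in document order

    def handle_starttag(self, tag, attrs):
        if tag not in VOID_TAGS:  # void tags (meta, img, ...) never hold text
            self._stack.append((tag, _is_hidden(tag, attrs)))

    def handle_endtag(self, tag):
        # Close back to the nearest matching open tag; ignore stray end tags.
        for i in range(len(self._stack) - 1, -1, -1):
            if self._stack[i][0] == tag:
                del self._stack[i:]
                break

    def handle_data(self, data):
        inside_hidden = any(hidden for _, hidden in self._stack)
        if not inside_hidden and data.strip():
            self.chunks.append(data.strip())

    # handle_comment is left at its default (no-op), so comments are dropped.


def sanitise_html(html):
    """Return only the text a human visitor would plausibly see."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)
```

In a dual-model design, a function like this would run inside the low-privilege sanitiser process, and only its plain-text output would be handed to the primary reasoning engine.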

Zero-trust agent permissions:

- Apply strict compartmentalisation to all tool usage

- Never bundle read, write, and execute capabilities into a single agent identity

- A system designed to research competitors online should never hold write access to the company's internal CRM

- Developers using AI for coding and system building should apply these principles from the start of any agentic architecture
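
One way to picture the compartmentalisation rule is a deny-by-default grant table per agent identity. The sketch below is an assumption-laden illustration, not a scheme from the paper: `AgentIdentity`, `Capability`, and the resource names are all hypothetical, and a production system would delegate this to its existing identity and access management platform.

```python
from dataclasses import dataclass
from enum import Enum


class Capability(Enum):
    READ = "read"
    WRITE = "write"
    EXECUTE = "execute"


@dataclass(frozen=True)
class AgentIdentity:
    """A hypothetical agent identity with explicit (resource, capability) grants."""
    name: str
    grants: frozenset

    def __post_init__(self):
        # Refuse any identity that bundles read, write, and execute together.
        held = {capability for _, capability in self.grants}
        if held == set(Capability):
            raise ValueError(
                f"{self.name}: read, write and execute must not share one identity"
            )

    def authorise(self, resource, capability):
        # Deny by default: anything not explicitly granted is rejected.
        if (resource, capability) not in self.grants:
            raise PermissionError(
                f"{self.name} may not {capability.value} {resource}"
            )


# A research agent that can read the web and nothing else.
researcher = AgentIdentity(
    "competitor-research",
    frozenset({("web", Capability.READ)}),
)
researcher.authorise("web", Capability.READ)  # allowed, returns None
```

Under this sketch, a hijacked research agent that tries `researcher.authorise("crm", Capability.WRITE)` gets a `PermissionError` rather than write access to the CRM.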

Forensic audit trails:

- Track the precise lineage of every AI decision back to the specific data points and external URLs that influenced it

- Without this capability, diagnosing the root cause of an indirect prompt injection after the fact becomes impossible
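
A minimal sketch of such lineage tracking, assuming each decision is logged together with the URLs it drew on and a hash of the content fetched at the time. The `AuditTrail` class, its in-memory JSON-lines store, and the example URLs are hypothetical; a real trail would go to append-only, tamper-evident storage.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class SourceRef:
    url: str
    content_sha256: str  # fingerprint of the page exactly as the agent saw it


@dataclass
class DecisionRecord:
    decision: str
    sources: list
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditTrail:
    """In-memory stand-in for an append-only decision log."""

    def __init__(self):
        self._lines = []  # one JSON document per recorded decision

    def record(self, decision, fetched):
        """Log a decision with every URL and content hash that fed into it.

        `fetched` maps each URL to the text the agent retrieved from it.
        """
        sources = [
            SourceRef(url, hashlib.sha256(body.encode("utf-8")).hexdigest())
            for url, body in fetched.items()
        ]
        entry = DecisionRecord(decision, sources)
        self._lines.append(json.dumps(asdict(entry)))
        return entry

    def decisions_influenced_by(self, url):
        """Return every logged decision that drew on the given URL."""
        hits = []
        for line in self._lines:
            entry = json.loads(line)
            if any(source["url"] == url for source in entry["sources"]):
                hits.append(entry["decision"])
        return hits
```

If a page is later found to carry an injected instruction, `decisions_influenced_by` gives investigators the list of decisions to review, and the stored hash shows whether the page has changed since the agent read it.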

Ecosystem-level measures:

- Harden underlying AI models through training data augmentation

- Deploy runtime defences alongside static guardrails

- Establish content governance frameworks across the industry

- Develop standardised benchmarks to identify and measure these threats consistently
