What Is GPT-5.1? A Complete Breakdown of Features and Benchmarks

OpenAI started it all. With ChatGPT, it gave others a roadmap to follow toward Artificial General Intelligence (AGI).

While countless other competitors have jumped into the arena, OpenAI continues to dominate the race with relentless innovation and aggressive marketing.

And they have done it again. Just three months after releasing GPT-5 in August 2025, the company unveiled GPT-5.1 in November 2025, promising a warmer, smarter, and more adaptive AI experience.

But is this update worth the hype, or is it just another incremental release?

In this comprehensive breakdown, we'll explore everything you need to know about GPT-5.1, from its new features and pricing to how it compares with its predecessor and competitors.

What Is GPT-5.1? Understanding the Basics

GPT-5.1 represents an iterative refinement rather than a revolutionary leap forward.

Think of it like a new iPhone. It has the same core architecture, but meaningful improvements in usability, tone, and efficiency make the user experience much smoother.

The model comes in two primary variants that cater to different use cases:

- GPT-5.1 Instant: Designed for everyday conversations and tasks where speed matters. It features a warmer, more conversational tone and includes adaptive reasoning that dynamically adjusts thinking time based on query complexity.

- GPT-5.1 Thinking: Tackles complex reasoning problems that require deeper analysis. Interestingly, while it's slower on hard problems (spending more time thinking through solutions), it's actually faster on simple queries compared to its predecessor.

While older models also had similar variants, users had to manually switch between them depending on their needs. That’s where GPT-5.1 offers a significant improvement.

OpenAI introduced GPT-5.1 Auto, which intelligently routes queries between Instant and Thinking modes based on the task requirements. This smart routing ensures users get optimal performance without manually selecting modes.

GPT-5.1 Release Timeline and Availability

GPT-5's features and capabilities were impressive, but they left a lot of room for improvement.

GPT-5.1 officially launched on November 12, 2025, for ChatGPT users, with API access following on November 13, 2025. OpenAI adopted a phased rollout strategy, prioritizing paid subscribers (Plus, Pro, and Team plans) before gradually expanding to free-tier users.

Enterprise and Education customers received early access during a preview period, allowing organizations to test the model before widespread deployment. For users still relying on GPT-5, OpenAI announced a three-month transition window where the legacy model would remain accessible, giving developers time to migrate their applications.

GPT-5.1 Instant vs Thinking Mode: How Do They Differ?

While GPT-5.1’s intelligent Auto mode chooses the suitable variant for you, we understand some people just like to be in control of what they generate.

For those people, understanding when to use each mode is crucial for getting the most out of GPT-5.1. The distinction between these modes isn't just about speed; each takes a different approach to problem-solving.

GPT-5.1 Instant

GPT-5.1 Instant serves as ChatGPT's most-used model and default choice for everyday conversations. What sets it apart is its adaptive reasoning capability. For the first time, a non-Thinking model can dynamically decide when to engage deeper analysis before responding.

Let’s see how it achieves that.

When you ask a simple question like "What's the weather forecast?" the model responds almost instantly with minimal computational overhead. But when you pose a more challenging query like "Design a database schema for a multi-tenant SaaS application with complex permission hierarchies," the model automatically allocates more thinking time, spending perhaps 5-10 seconds processing before responding with a thorough, well-reasoned answer.

This adaptive behavior means you get:

- Speed where it matters: Simple queries get immediate responses without unnecessary latency

- Depth when needed: Complex problems receive careful analysis automatically

- No manual switching: The model intelligently adjusts to your query's demands

- Token efficiency: Lower costs since simple queries don't consume reasoning tokens

The performance improvements are measurable.

GPT-5.1 Instant shows significant gains on technical benchmarks like AIME 2025 (advanced mathematics) and Codeforces (competitive programming), demonstrating that its adaptive reasoning genuinely enhances problem-solving capability rather than just adding delays.

Best use cases for Instant mode:

- Email composition and professional communication

- Content creation and copywriting

- Brainstorming and creative ideation

- Code explanation and basic debugging

- Research assistance and information synthesis

- Customer support automation

- Educational tutoring with adaptive difficulty

- Data analysis with mixed complexity

- General conversation and productivity tasks

GPT-5.1 Thinking

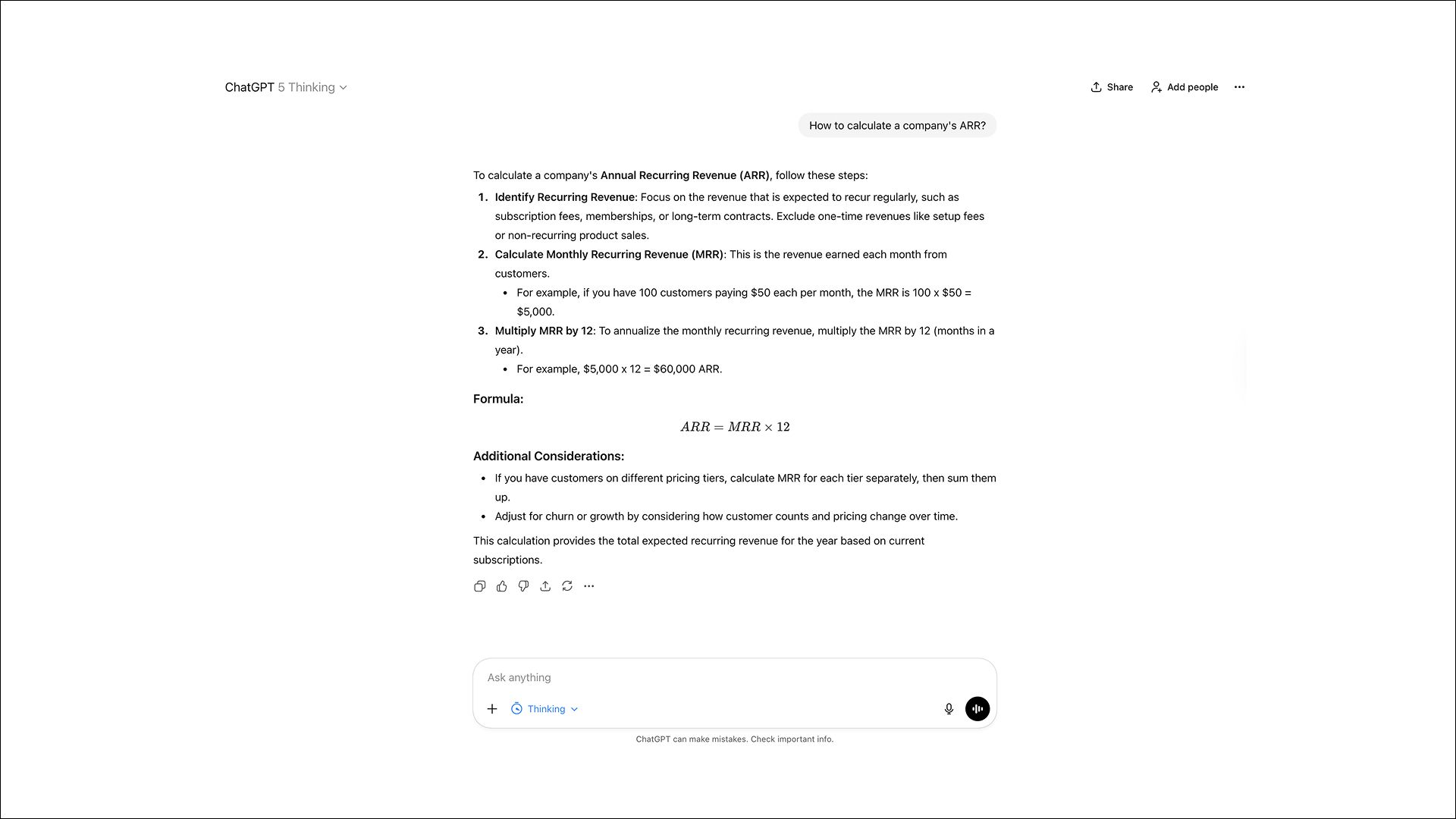

GPT-5.1 Thinking represents OpenAI's advanced reasoning engine, designed specifically for problems that demand systematic, multi-step analysis. Unlike GPT-5 Thinking, which applied relatively uniform reasoning time across queries, GPT-5.1 Thinking dynamically adjusts its cognitive effort with remarkable precision.

On a representative distribution of ChatGPT tasks with Standard thinking time enabled, the model demonstrates intelligent resource allocation:

- Approximately 2x faster on the easiest tasks compared to GPT-5 Thinking

- Approximately 2x slower on the most difficult tasks compared to GPT-5 Thinking

The model recognizes when a query truly requires extended reasoning and when it doesn't, preventing wasted time on problems that don't benefit from prolonged analysis.

Beyond speed optimization, GPT-5.1 Thinking offers dramatically improved clarity and accessibility. Responses use significantly less jargon and define technical terms more consistently, making the most capable reasoning model actually understandable for non-experts.

This addresses one of GPT-5 Thinking's biggest complaints: technically correct but unnecessarily convoluted explanations.

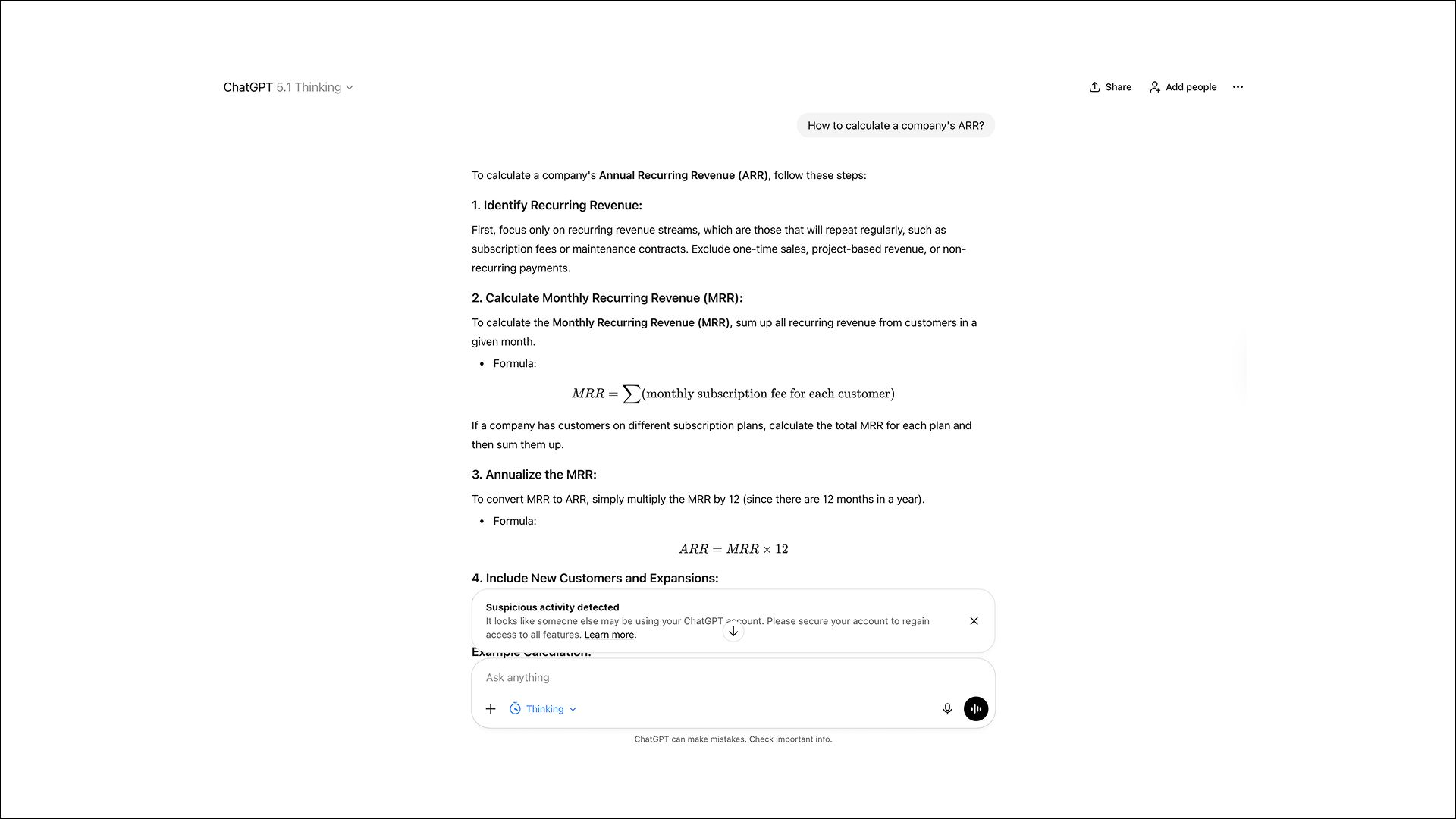

When you select GPT-5.1 Thinking in ChatGPT, you'll see a condensed view of the model's "chain of thought" as it works through the problem.

Best use cases for Thinking mode:

- Advanced mathematics and formal proofs

- Complex algorithm design and optimization

- Scientific research planning and analysis

- Strategic business planning with multiple constraints

- Legal document analysis and contract review

- Multi-step debugging of intricate code issues

- Financial modeling and risk assessment

- Architectural design for large software systems

- Competitive programming challenges

- Academic research synthesis across multiple sources

GPT-5.1 Auto

For most users, GPT-5.1 Auto eliminates decision paralysis entirely. This smart routing system analyzes each query and automatically directs it to whichever model variant is best suited for the task.

Simple queries like "summarize this email" or "what's a good recipe for chicken" route to Instant for fast responses. More complex requests like "debug this concurrent race condition" or "analyze quarterly trends across five datasets" get routed to Thinking for deeper analysis.

The routing happens transparently in the background, balancing speed, quality, and computational cost without requiring you to understand the technical distinctions. You simply ask your question, and the system ensures optimal handling.
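To make the routing idea concrete, here is a toy sketch of a query router. OpenAI has not published how Auto decides, so the `route_query` function, the hint words, and the length threshold below are all hypothetical stand-ins chosen purely for illustration.

```python
# Toy illustration only: OpenAI's real router is not public.
# The hint words and the 40-word threshold are made-up assumptions.
COMPLEX_HINTS = ("debug", "race condition", "analyze", "prove", "optimize", "schema")


def route_query(query: str, long_query_words: int = 40) -> str:
    """Return "thinking" for queries that look complex, else "instant"."""
    text = query.lower()
    looks_complex = any(hint in text for hint in COMPLEX_HINTS)
    is_long = len(text.split()) > long_query_words
    return "thinking" if looks_complex or is_long else "instant"


if __name__ == "__main__":
    for q in ("summarize this email", "debug this concurrent race condition"):
        print(f"{q!r} -> {route_query(q)}")
```

A real router would weigh far more signals than keywords and length, but the shape is the same: inspect the query, then pick a variant before any answer is generated.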

Key Features and Improvements Over GPT-5

So what exactly makes GPT-5.1 better than GPT-5? The improvements span several interconnected areas that collectively transform the user experience, even if no single change feels revolutionary on its own.

1. Enhanced Communication Style

The most immediately noticeable change is GPT-5.1's warmer, more conversational default tone.

This improvement was expected because users were not happy. On Reddit, people went as far as calling GPT-5 "a mess" and "bland and boring". OpenAI explicitly acknowledged the feedback and made improvements.

GPT-5.1 addresses this through refined training that makes responses feel more natural and engaging without sacrificing professionalism or accuracy.

Based on early testing, users consistently report being "surprised by its playfulness while remaining clear and useful." The model strikes a better balance between being helpful and being personable, making multi-turn conversations feel less like interrogating a database and more like collaborating with a knowledgeable colleague.

This wasn't just a change in word choice. It reflects deeper changes in how the model structures responses, uses informal language appropriately, and maintains conversational context across turns.

2. Dramatically Improved Instruction Following

One of GPT-5's most frustrating limitations was its tendency to ignore specific constraints, drift into tangents, or misinterpret the actual question being asked. GPT-5.1 delivers substantially more reliable constraint adherence.

Real-world testing demonstrates this improvement:

Constraint Test Example:

- Prompt: "Write a product description for noise-canceling headphones in exactly 50 words."

- GPT-5 Result: 49 words with overly dramatic tone

- GPT-5.1 Result: Exactly 50 words with appropriate product description tone

This enhanced instruction following extends to complex, multi-layered constraints:

- Word counts and sentence limits: The model consistently honors exact specifications

- Formatting requirements: Structure, bullet points, headers appear as requested

- Tone specifications: Professional, casual, technical (whatever you specify)

- Content restrictions: Avoiding certain topics or phrases works reliably

- Output format: JSON, markdown, specific citation styles all handled correctly

The improvement stems from refined training that helps the model better identify and prioritize explicit constraints in prompts, reducing the need for iterative prompt engineering to achieve desired outputs.
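If you want to run the 50-word constraint test yourself, a small checker helps. The sketch below is a minimal version under one assumption: a "word" is any whitespace-separated token, so a hyphenated term like "noise-canceling" counts once. The function names are illustrative, not part of any OpenAI tooling.

```python
def count_words(text: str) -> int:
    """Count whitespace-separated words; hyphenated terms count as one."""
    return len(text.split())


def meets_exact_word_count(text: str, target: int) -> bool:
    """True only when the text has exactly `target` words."""
    return count_words(text) == target


if __name__ == "__main__":
    draft = "Block out the noise and enjoy your music in peace."
    print(count_words(draft), meets_exact_word_count(draft, 50))
```

Paste a model response into `draft` to see whether it hit the requested length or drifted by a word or two.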

3. Adaptive Reasoning

Adaptive reasoning represents GPT-5.1's most technically sophisticated advancement. Both Instant and Thinking modes now dynamically adjust their reasoning depth based on task complexity, rather than applying fixed computational budgets regardless of difficulty.

For GPT-5.1 Instant:

- Simple queries process with minimal internal reasoning tokens, delivering near-instant responses

- As query complexity increases (detected through linguistic patterns, domain indicators, and structural analysis), the model automatically allocates additional reasoning tokens

- This happens transparently and you just see slightly longer response times for harder questions

- The system avoids both under-thinking (missing nuances on hard problems) and over-thinking (wasting time on simple queries)

For GPT-5.1 Thinking:

- The model more precisely calibrates thinking time to match actual problem difficulty

- On easy tasks: ~2x faster than GPT-5 Thinking (avoiding unnecessary deep reasoning)

- On hard tasks: ~2x slower than GPT-5 Thinking (spending more time on genuine challenges)

- This dynamic allocation means the model recognizes when extended reasoning genuinely helps versus when it's just burning compute cycles

The impact extends beyond speed. Adaptive reasoning improves accuracy on benchmarks like AIME 2025 (advanced mathematics) and Codeforces (competitive programming) because the model dedicates appropriate cognitive resources to problems that warrant them.

4. Clearer Explanations with Less Jargon

Both GPT-5.1 variants, especially Thinking mode, produce significantly clearer explanations compared to their predecessors. The models use less unnecessary jargon, define technical terms more consistently, and structure explanations more accessibly.

This improvement addresses a common complaint about GPT-5 Thinking: while technically correct, its explanations often felt verbose, academically dense, and full of undefined terminology. GPT-5.1 maintains technical accuracy while making explanations approachable for non-experts.

For example, when explaining a technical concept:

- GPT-5: Might use terms like "idempotent operations" or "eventual consistency" without context

- GPT-5.1: Defines such terms inline or uses simpler analogies first, then introduces technical vocabulary

This makes GPT-5.1 particularly valuable for educational applications, technical documentation aimed at diverse audiences, and workplace tasks where clarity matters more than demonstrating expertise.

5. Token Efficiency: Same Capability, Lower Cost

Perhaps the most underrated improvement is the 30-50% reduction in token usage for similar tasks across both modes. This efficiency gain means:

For API users:

- Significantly lower costs at scale (pricing remains $1.25 per million input tokens, $10 per million output tokens)

- More queries fit within rate limits and budget constraints

- Better cost predictability for production applications

For ChatGPT users:

- Longer effective context windows (more conversation history fits in the same token budget)

- More queries available within free tier limits

- Faster response generation (fewer tokens to process)

The efficiency comes from improved model architecture that generates more concise responses without sacrificing information density or completeness. The model learned to avoid repetitive phrasing, unnecessary hedging, and verbose explanations that don't add value.
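A quick back-of-the-envelope calculation shows what the 30-50% range means in dollars. The sketch below uses the prices quoted in this article and assumes, for simplicity, that the reduction applies only to output tokens. The workload of 2,000 input and 1,000 output tokens per request is a hypothetical figure.

```python
INPUT_PRICE_PER_M = 1.25    # USD per million input tokens (as quoted in this article)
OUTPUT_PRICE_PER_M = 10.00  # USD per million output tokens (as quoted in this article)


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single request."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


def cost_after_reduction(input_tokens: int, output_tokens: int, reduction: float) -> float:
    """Cost if output tokens shrink by `reduction` (0.30 means 30% fewer).

    Assumption: only output tokens shrink; input tokens stay the same.
    """
    reduced_output = round(output_tokens * (1 - reduction))
    return request_cost(input_tokens, reduced_output)


if __name__ == "__main__":
    baseline = request_cost(2_000, 1_000)  # hypothetical workload
    for reduction in (0.30, 0.50):
        new_cost = cost_after_reduction(2_000, 1_000, reduction)
        print(f"{reduction:.0%} fewer output tokens: "
              f"${baseline:.4f} -> ${new_cost:.4f} per request")
```

Because output tokens cost eight times as much as input tokens at these prices, trimming the response length has an outsized effect on the bill.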

6. Multimodal and Context Improvements

While less publicized, GPT-5.1 includes several multimodal enhancements:

- Better image understanding: Improved facial consistency in image edits and more accurate visual analysis

- Enhanced file processing: More reliable extraction and interpretation of PDFs, spreadsheets, and documents

- Improved context handling: Better maintenance of information across multi-turn conversations

- Stronger memory integration: Custom instructions and saved preferences apply more consistently

These improvements make GPT-5.1 more reliable for complex workflows involving multiple data types and extended interactions.

7. Reduced Errors and Hallucinations

OpenAI's system card addendum for GPT-5.1 highlights improved factual reliability and reduced overconfidence. The model is better calibrated to:

- Admit uncertainty when appropriate rather than fabricating confident-sounding but incorrect information

- Cite sources more appropriately in research tasks

- Maintain factual consistency across long conversations

- Recognize when queries require current information beyond its training data

While hallucinations haven't been eliminated entirely (no language model has achieved this), the frequency and severity of factual errors have decreased measurably compared to GPT-5.

New Personality Presets in GPT-5.1

One of GPT-5.1's most talked-about features is the introduction of eight distinct personality presets:

- Default

- Professional

- Candid

- Quirky

- Friendly

- Efficient

- Nerdy

- Cynical

These presets allow users to customize how ChatGPT communicates beyond just accuracy. Want brief, to-the-point answers for work? Choose Efficient. Need a more engaging companion for creative brainstorming? Try Quirky or Friendly. Prefer straightforward, no-nonsense responses? Candid might be your style.

Beyond presets, users can fine-tune additional parameters including conciseness level, warmth, scannability (how easy responses are to skim), and emoji usage. The system even learns from your feedback, proactively updating these settings as it picks up your preferences during conversations.

While some critics dismiss this as gimmicky, many users find that matching the AI's tone to their workflow significantly improves the overall experience.

GPT-5.1 Benchmark Performance and Real-World Results

Benchmark performance is crucial to an AI model's success, and GPT-5.1's results are impressive.

GPT-5.1 delivers strong benchmark gains on key evaluations:

- AIME 2025 at 94% accuracy

- SWE-bench Verified at 76.3%, and competitive coding via LiveCodeBench where it ranks among top performers

- Compared to GPT-5's 72.8% on SWE-bench Verified, this marks a 3.5 percentage point improvement, resolving roughly 381 of 500 real GitHub issues autonomously.

However, independent analysis from researchers presents a more nuanced picture.

Some benchmarks show minimal improvement over GPT-5, suggesting that gains are task-specific rather than universal. GPT-5.1 Thinking is approximately twice as fast on easy tasks but twice as slow on complex problems compared to GPT-5's thinking mode.

Real-world testimonials from partners like Balyasny Asset Management, Pace University, and development tools like Cline and CodeRabbit paint a positive picture, with users reporting better code quality, more natural interactions, and improved productivity.

GPT-5.1 Codex and Codex-Max: The Agentic Coding Revolution

For developers, GPT-5.1's Codex variants represent the most exciting advancement. OpenAI introduced three specialized coding models:

- GPT-5.1-Codex is the standard variant optimized for code generation and understanding.

- GPT-5.1-Codex-Mini offers a lightweight alternative for simpler coding tasks with faster response times.

- GPT-5.1-Codex-Max is described as OpenAI's frontier agentic coding model. It leverages "compaction technology" that allows it to work effectively across millions of tokens, enabling it to understand and modify entire codebases rather than just individual files.

Codex-Max excels at project-scale operations including multi-hour agent loops, full codebase refactors, and complex architectural changes. It integrates seamlessly with the Codex CLI, popular IDE extensions, and even GitHub Copilot.

Two new tools enhance its capabilities: the apply_patch command for surgical code modifications and the shell command for executing terminal operations directly, making it a truly autonomous development assistant.

How to Use Adaptive Reasoning in GPT-5.1

Adaptive reasoning is controlled through the reasoning_effort parameter in the API. Setting it to 'none' completely disables extended thinking, perfect for latency-sensitive applications like chatbots or real-time systems.

When left at default settings, the model automatically determines when to engage deeper reasoning based on the complexity it detects in your query.

The key is understanding your workflow needs.

If you're building an application where speed is critical and queries are straightforward, explicitly setting reasoning_effort to 'none' can reduce latency. For analytical tools or problem-solving applications, leaving adaptive reasoning enabled ensures quality responses even for unexpectedly complex queries.
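As a rough sketch of how this looks in practice, the helper below builds request arguments with or without reasoning disabled. It assumes the Chat Completions endpoint of the official `openai` Python package, where the setting is passed as a top-level `reasoning_effort` field. Other endpoints may expect it in a different place, so check the current API reference before relying on it. The function names and the example prompt are hypothetical.

```python
def build_chat_request(prompt: str, latency_sensitive: bool) -> dict:
    """Build keyword arguments for a chat completion request.

    Assumption: the request targets the Chat Completions endpoint, where
    reasoning effort is a top-level `reasoning_effort` field.
    """
    request = {
        "model": "gpt-5.1",
        "messages": [{"role": "user", "content": prompt}],
    }
    if latency_sensitive:
        # 'none' turns extended thinking off, as described above.
        request["reasoning_effort"] = "none"
    # Otherwise the field is omitted so the default behavior applies.
    return request


def send(request: dict) -> str:
    """Send the request with the `openai` package (not called in this sketch)."""
    from openai import OpenAI  # needs `pip install openai` and OPENAI_API_KEY set

    client = OpenAI()
    response = client.chat.completions.create(**request)
    return response.choices[0].message.content


if __name__ == "__main__":
    print(build_chat_request("What are your opening hours?", latency_sensitive=True))
```

Keeping the request construction separate from the network call makes it easy to unit-test which settings each part of your application sends.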

GPT-5.1 API: Model Names, Pricing, and Implementation

For developers integrating GPT-5.1 into applications, understanding the API structure is essential.

Model names follow a clear pattern:

- gpt-5.1 accesses Thinking mode

- gpt-5.1-chat-latest provides Instant mode

- gpt-5.1-codex and gpt-5.1-codex-mini offer specialized coding capabilities
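One simple way to keep these names out of scattered string literals is a single lookup table. In the sketch below, the model identifiers come from the list above, while the mode labels ("thinking", "instant", and so on) and the `model_for` helper are hypothetical conventions for your own code.

```python
# Model identifiers as listed in this article; the keys are our own labels.
MODEL_FOR_MODE = {
    "thinking": "gpt-5.1",
    "instant": "gpt-5.1-chat-latest",
    "codex": "gpt-5.1-codex",
    "codex-mini": "gpt-5.1-codex-mini",
}


def model_for(mode: str) -> str:
    """Return the API model name for a mode label, case-insensitively."""
    try:
        return MODEL_FOR_MODE[mode.lower()]
    except KeyError:
        known = ", ".join(sorted(MODEL_FOR_MODE))
        raise ValueError(f"unknown mode {mode!r}; expected one of: {known}") from None


if __name__ == "__main__":
    print(model_for("Instant"))
```

If a model name changes in a future release, only the table needs updating.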

Pricing remains unchanged from GPT-5, which is welcome news:

- Input: $1.25 per million tokens

- Output: $10 per million tokens

OpenAI extended prompt caching to 24 hours, allowing frequently used context to be cached and reused, significantly reducing costs for applications with repeated prompts. Rate limits depend on your API tier, with higher tiers receiving more generous quotas.

Notably, OpenAI has announced no immediate deprecation plans for the GPT-5 API, giving developers flexibility in migration timelines.

Context Window Size and Token Limits

Context windows vary significantly based on your access tier and chosen interface.

ChatGPT context windows are tier-dependent:

- Free users: 8,000 tokens

- Plus subscribers: 32,000 tokens

- Pro/Business users: 128,000 tokens for Instant mode, 196,000 tokens for Thinking/Pro mode

- Enterprise/Education: 128,000-196,000 tokens depending on configuration

API context windows offer more generous limits:

- gpt-5.1-chat-latest: 128,000 token context window

- Full API access: 272,000 input tokens plus 128,000 output tokens (400,000 total)

These windows are competitive but still trail behind some competitors like Claude, which offers larger context windows. For most use cases, however, GPT-5.1's limits are more than adequate.

Managing long conversations requires strategies like summarization, conversation pruning, or implementing a memory system that stores key information separately while keeping the active context focused on recent exchanges.
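Conversation pruning, one of the strategies mentioned above, can be sketched in a few lines. The version below keeps system messages and then adds the newest turns until a token budget runs out. It rests on a loud assumption: tokens are estimated at roughly four characters each, which is only a crude heuristic. A real application would use a proper tokenizer. The function names and message layout are illustrative.

```python
def estimate_tokens(text: str) -> int:
    """Very rough estimate: about 4 characters per token (an assumption)."""
    return max(1, len(text) // 4)


def prune_history(messages: list[dict], budget: int) -> list[dict]:
    """Keep system messages plus the newest turns that fit in `budget` tokens."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    remaining = budget - sum(estimate_tokens(m["content"]) for m in system)

    kept = []
    for message in reversed(turns):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if cost > remaining:
            break  # stop at the first turn that no longer fits
        kept.append(message)
        remaining -= cost
    return system + kept[::-1]  # restore chronological order


if __name__ == "__main__":
    history = [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "First question " * 200},
        {"role": "assistant", "content": "A short answer."},
        {"role": "user", "content": "A follow-up question."},
    ]
    for message in prune_history(history, budget=50):
        print(message["role"], "-", message["content"][:40])
```

Dropped turns could be summarized and stored separately, which is the memory-system approach described above.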

Real-World Applications and Use Cases

GPT-5.1's improvements make it particularly well-suited for several key applications:

- Software Development: The Codex variants excel at code review, debugging, documentation generation, and even autonomous agent-based development workflows.

- Content Creation: The warmer tone and personality presets make GPT-5.1 excellent for writing blog posts, marketing copy, social media content, and creative writing where voice matters.

- Data Analysis: Improved reasoning capabilities help with interpreting complex datasets, generating insights, and creating visualizations from raw data.

- Education: More accessible explanations and adaptive reasoning make GPT-5.1 an effective tutoring tool that adjusts its teaching approach based on student understanding.

- Customer Support: The combination of warm tone, better instruction following, and efficiency improvements creates more satisfying customer interactions.

Limitations and Considerations

Despite its improvements, GPT-5.1 isn't perfect.

ChatGPT's context windows, while adequate, still lag behind competitors like Claude, which can be limiting for extremely long documents or conversations.

Performance improvements on some benchmarks are marginal, raising questions about whether the upgrade justifies switching for all use cases. The personality customization features, while appreciated by many, feel somewhat gimmicky to users who prioritize pure capability over interaction style.

For high-volume API users, costs can accumulate quickly at scale, making it essential to optimize token usage and leverage caching effectively. In some scenarios, GPT-5 or even alternative models might offer better value propositions depending on specific requirements.

Getting Started with GPT-5.1

Enough reading. Start experimenting with GPT-5.1. Here's how to get started:

For ChatGPT users

Simply log into your account and select GPT-5.1 from the model dropdown. Explore the personality presets in settings to find your preferred communication style. Try Auto mode first, then experiment with manually selecting Instant or Thinking modes to understand when each works best.

For API developers

Update your API calls to use the new model names (gpt-5.1 or gpt-5.1-chat-latest). Test your existing prompts to verify behavior, as the improved instruction following might change how the model interprets your requests. Implement prompt caching for repeated context to reduce costs.

Be specific in your prompts, leverage the adaptive reasoning for complex tasks, experiment with personality presets to find optimal workflow fits, and monitor token usage to optimize costs.

Conclusion

GPT-5.1 represents a thoughtful refinement of OpenAI's flagship model rather than a groundbreaking revolution. The improvements—warmer tone, adaptive reasoning, better instruction following, and specialized coding capabilities—address real user feedback and create a noticeably better experience.

For casual users, the enhanced conversational quality and personality options make ChatGPT more pleasant to use daily. For developers, the Codex variants and improved API efficiency offer tangible productivity gains. For businesses, the combination of better performance and unchanged pricing makes GPT-5.1 an easy upgrade.

Is it perfect? No. Does it solve every limitation of GPT-5? Not quite. But it moves in the right direction, and that matters. As AI models mature, these iterative improvements might prove more valuable than raw capability increases.

Whether GPT-5.1 is the right choice for you depends on your specific needs, but for most users and developers, it represents a worthwhile upgrade that makes AI assistance more natural, efficient, and effective.
