We Asked AI to Explain One of the Most Important and Complex Research Papers. Here's What Happened.

Research papers are not easy to read.

Many of them are long, dense, and filled with technical language that assumes years of background knowledge. Even experienced developers sometimes struggle to extract the main ideas from a complex paper.

This creates a simple problem: the knowledge is valuable, but the format makes it difficult to access.

Or at least, that's how it was before advanced AI tools like Ask AI.

These tools help students and professionals alike who might lack the background or the time to read a paper end to end.

So we set out to test how effective an Ask AI tool can be for these readers.

We took one of the most influential AI research papers, “Attention Is All You Need” by Vaswani et al., and uploaded it into Ask AI. Then we ran 11 progressively deeper prompts, ranging from a basic overview to expert-level analysis.

The paper introduced the Transformer architecture. It is dense, technical, and genuinely difficult to read without a background in machine learning.

Our goal was simple: can Ask AI make this paper accessible to someone who isn't a researcher?

Here's exactly what happened, prompt by prompt.

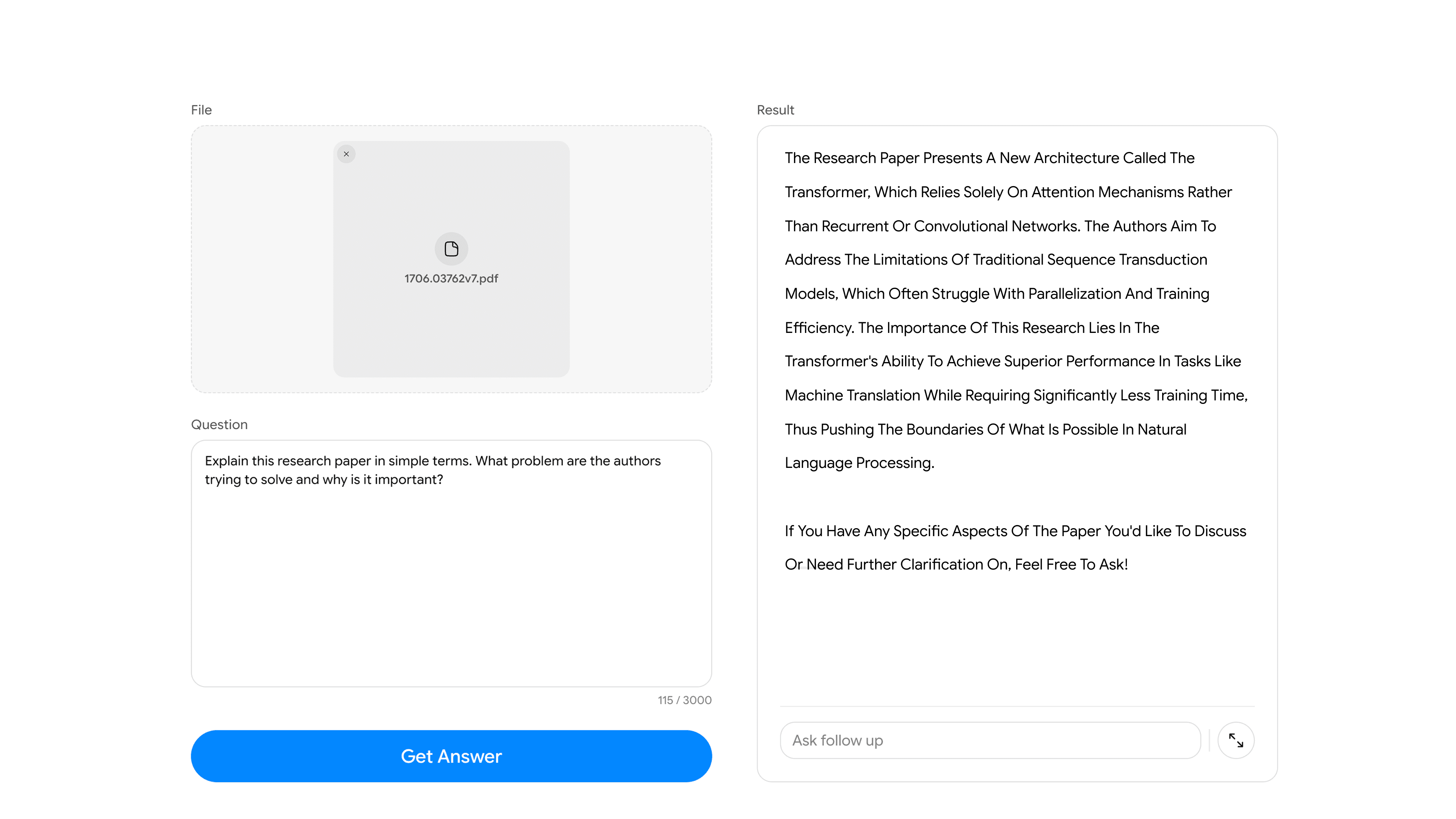

We Uploaded the Paper

We uploaded the PDF directly into Ask AI and gave it no additional context.

No instructions. No framing. Just the paper and a question.

This matters because it tests whether the tool can extract meaning on its own, the way a student with no prior knowledge would approach the paper.

First Question

We started with the most basic prompt possible.

Prompt:

Explain this research paper in simple terms. What problem are the authors trying to solve and why is it important?

What Ask AI returned:

What stands out here is how the tool framed the problem. Rather than jumping straight into technical definitions, it first established the “why” of the paper: the research gap the authors set out to fill. That context is exactly what most readers need before any technical explanation can land properly.

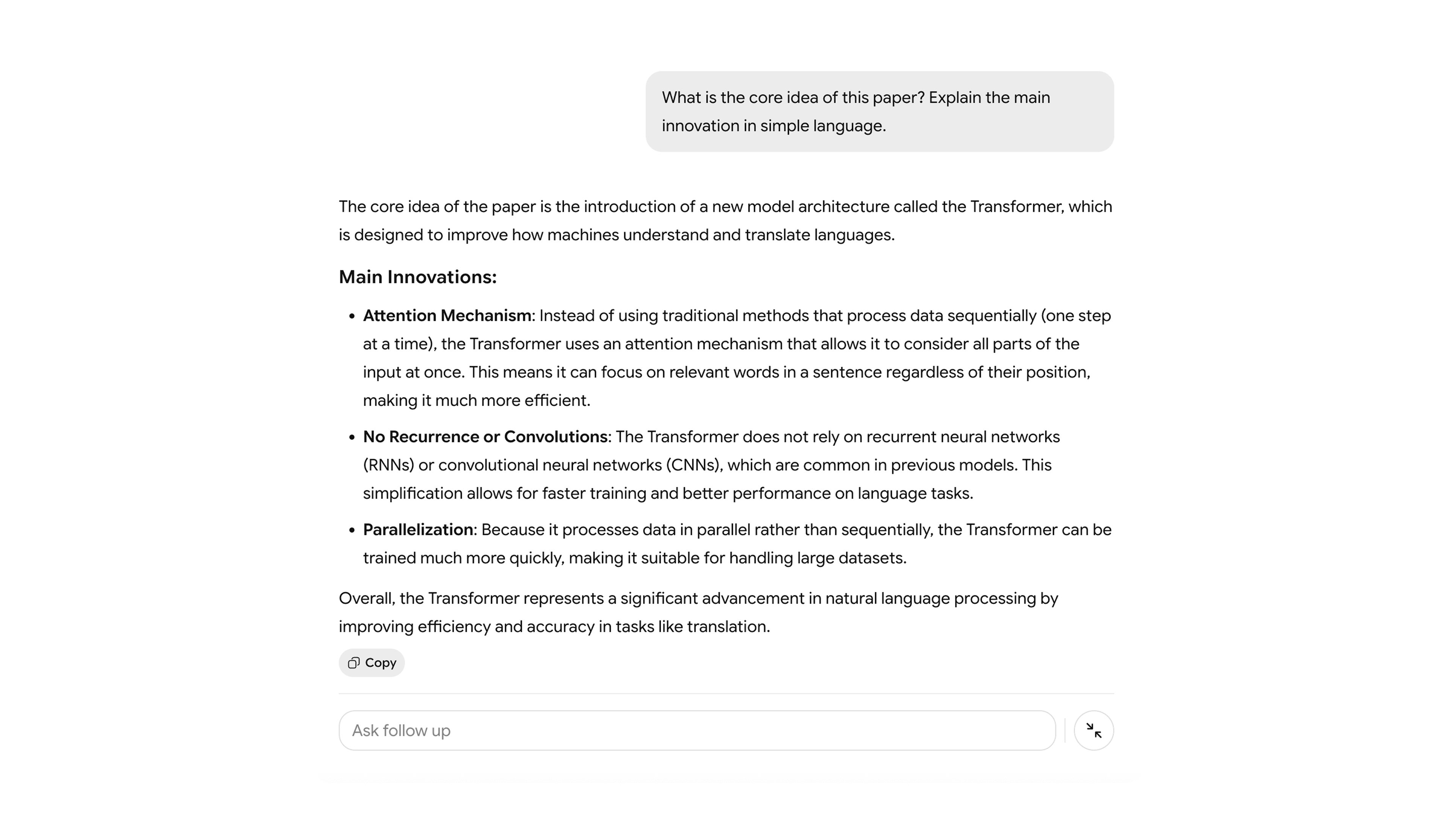

Second Question

With the overview established, we pushed into the paper's central contribution.

Prompt:

What is the core idea of this paper? Explain the main innovation in simple language.

What Ask AI returned:

This is where Ask AI starts to show real depth. The core innovation here, self-attention, is one of those concepts that sounds simple once someone explains it well but takes a long time to extract from dense academic prose.

Notice how the tool doesn't just define the term. It explains what problem it solves and why it's a better approach. That distinction between definition and explanation is what makes an Ask AI tool genuinely useful for learning.
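For readers curious what “self-attention” looks like concretely: the paper defines scaled dot-product attention as softmax(QKᵀ / √d_k)·V, where Q, K, and V are the query, key, and value matrices and d_k is the key dimension. The sketch below is our own minimal pure-Python illustration of that formula on tiny, made-up vectors. It is not output from Ask AI, and it assumes identity projections (using the input directly as Q, K, and V) while leaving out the learned weights, multiple heads, and masking the paper also describes.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def transpose(m):
    """Swap rows and columns of a matrix stored as lists of rows."""
    return [list(col) for col in zip(*m)]


def scaled_dot_product_attention(q, k, v):
    """Compute softmax(Q K^T / sqrt(d_k)) V and return (output, weights)."""
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # similarity of every token to every token
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights


# Three "tokens", each a made-up 2-dimensional vector (illustrative data only).
# In self-attention, Q, K and V all come from the same sequence; for brevity
# we skip the learned projections and use x directly (an assumption).
x = [
    [1.0, 0.0],
    [0.0, 1.0],
    [1.0, 1.0],
]

output, weights = scaled_dot_product_attention(x, x, x)
for i, row in enumerate(weights):
    print(f"token {i} attention weights: {[round(w, 3) for w in row]}")
```

Each row of weights sums to 1, so every token's output is a weighted average of all the value vectors. That is the sense in which every position can look at every other position in a single step, without processing the sequence word by word.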

Third Question

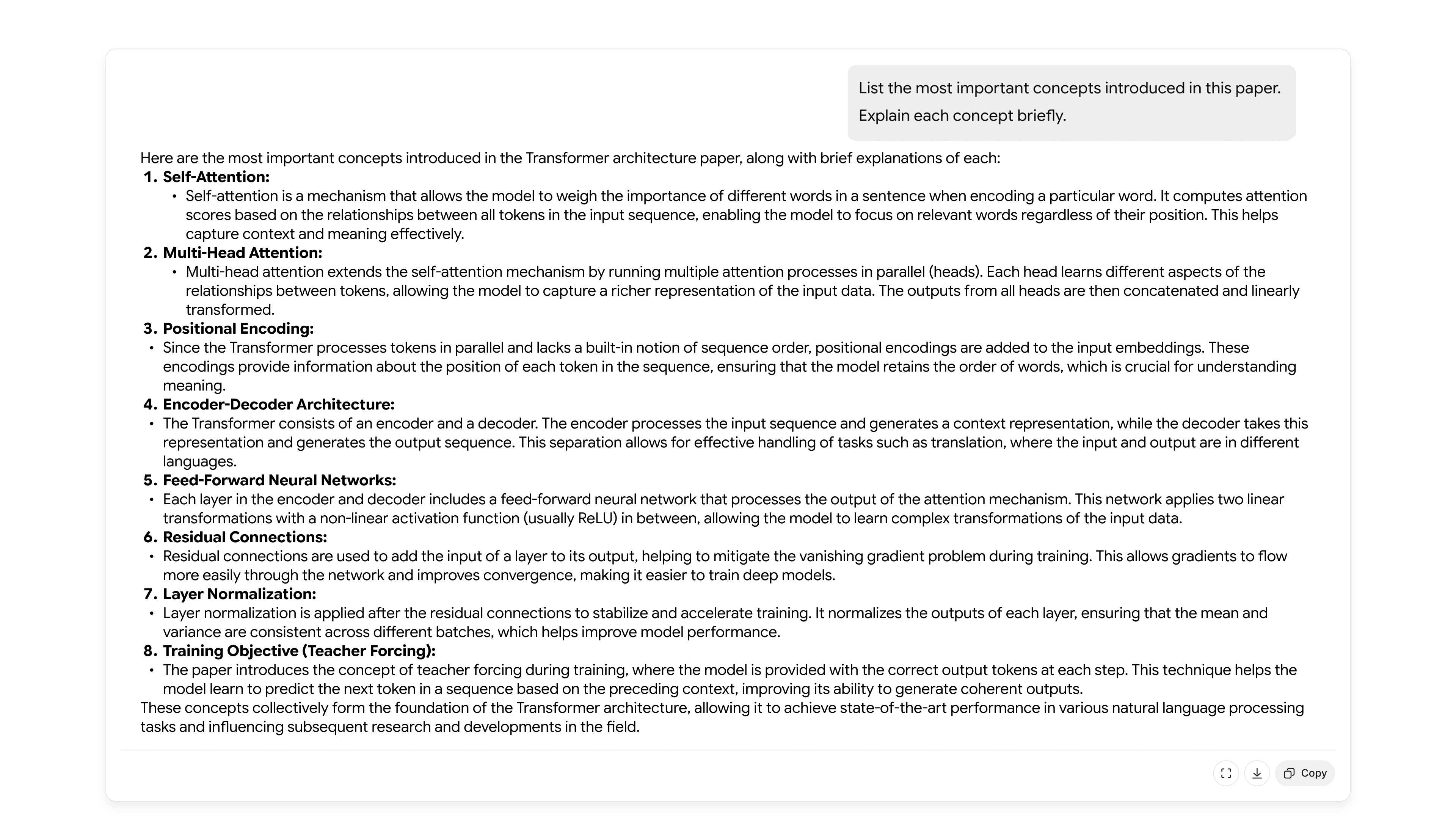

Next, we asked the tool to identify and explain the key concepts in the paper. We wanted to extract the building blocks a reader would need to understand how the architecture actually works.

Prompt:

List the most important concepts introduced in this paper. Explain each concept briefly.

What Ask AI returned:

The tool picked out the right concepts: not every term that appears in the paper, but the ones that are structurally important to understanding the whole.

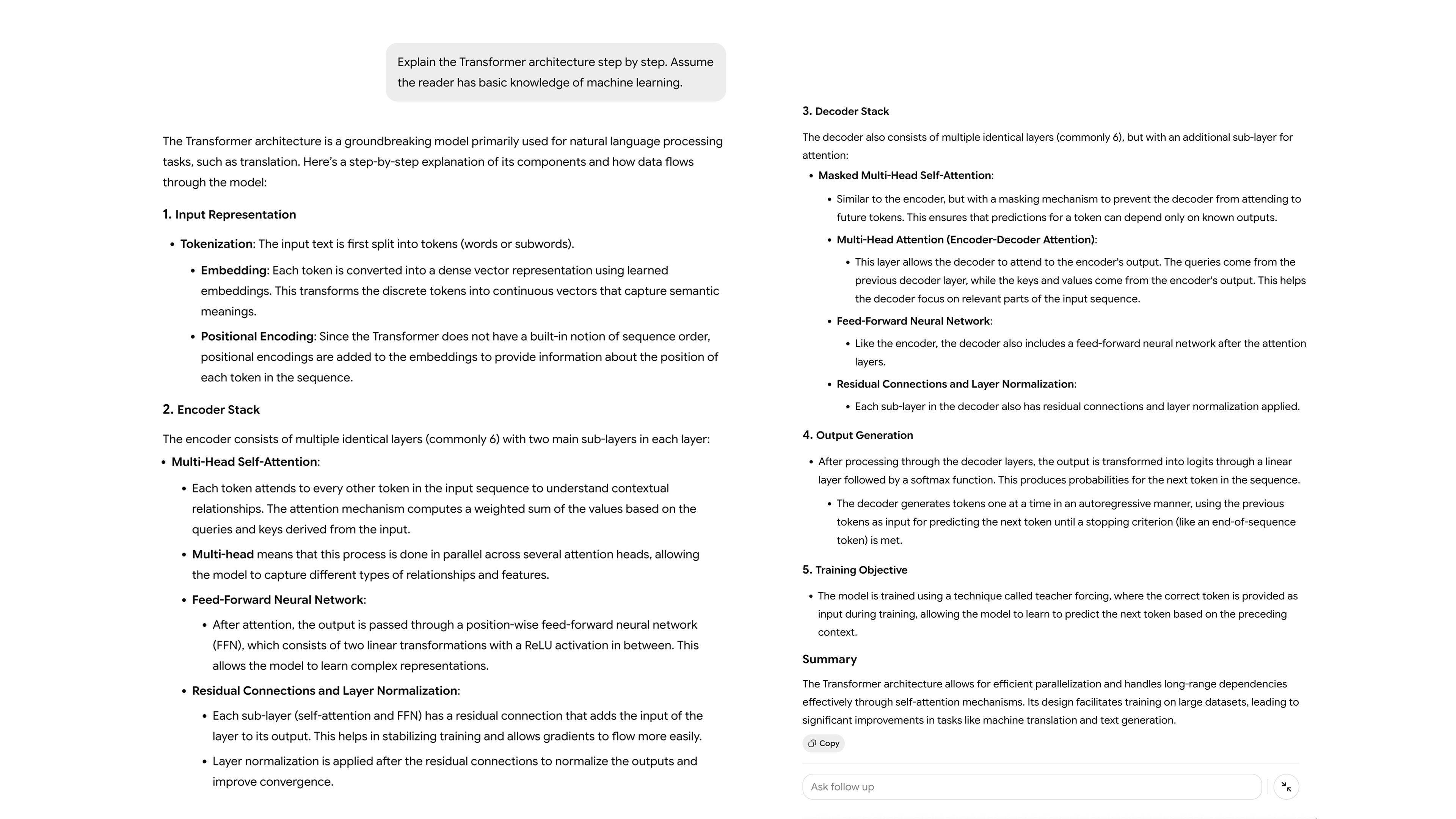

Fourth Question

With the key concepts identified, we asked for a step-by-step architectural breakdown, the kind of explanation that would typically require a dedicated lecture or a textbook chapter.

Prompt:

Explain the Transformer architecture step by step. Assume the reader has basic knowledge of machine learning.

What Ask AI returned:

This is one of the most technically demanding prompts in the experiment, and the response demonstrates what separates a document-aware Ask AI tool from a general chatbot.

A general chatbot would answer this from training data and provide a generic description of the Transformer. A document-aware Ask AI tool answers it from this specific paper.

For a student or practitioner trying to understand not just what the Transformer is, but how these authors built and described it, that distinction is significant.

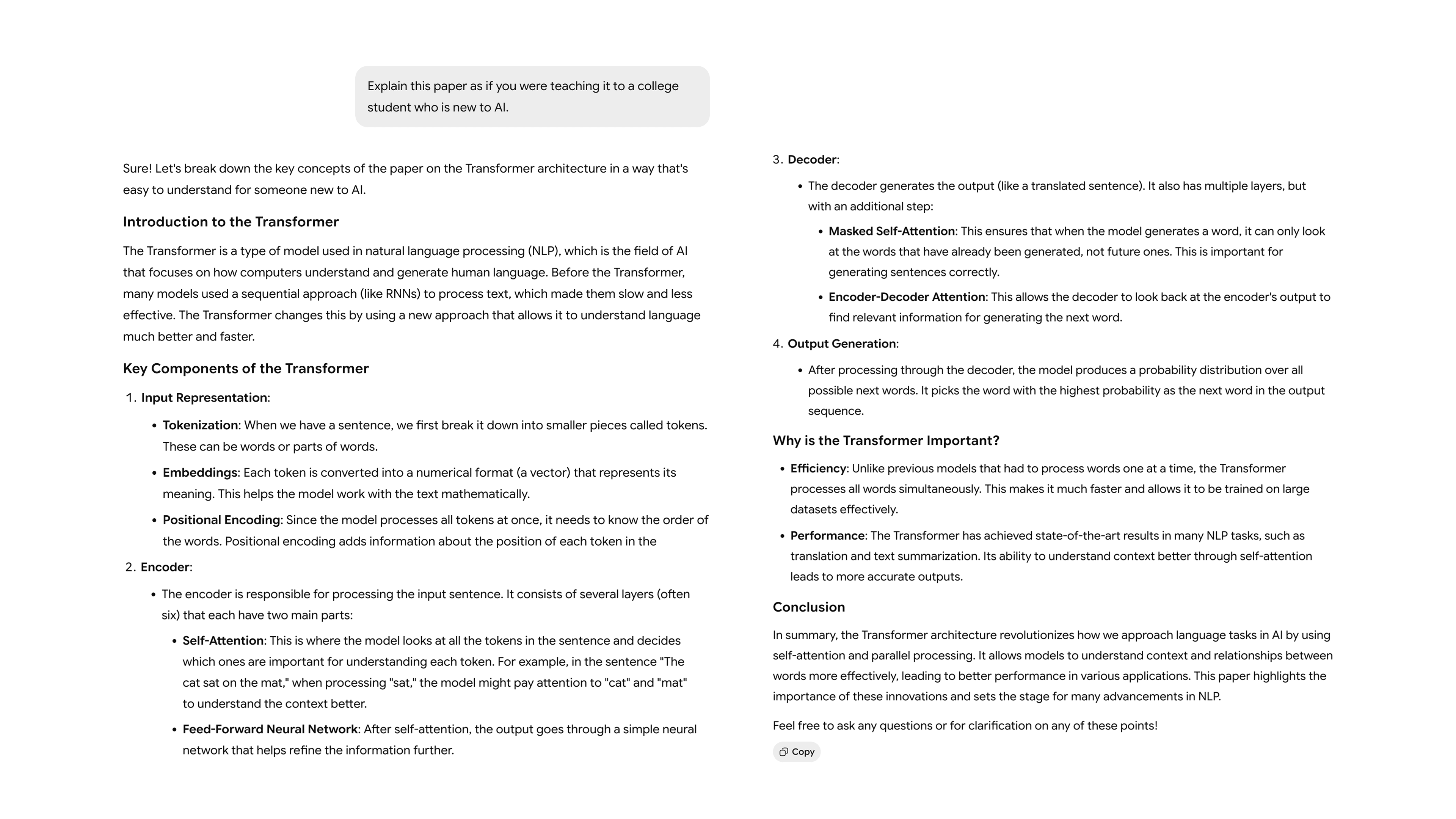

Fifth Question

Halfway through the experiment, we shifted our approach entirely. Instead of asking for technical depth, we asked the tool to bring the level all the way down, the way a good professor would for a first-year student.

Prompt:

Explain this paper as if you were teaching it to a college student who is new to AI.

What Ask AI returned:

This response illustrates one of the most practically useful things about Ask AI: the ability to adjust the complexity of an explanation without changing the source document.

You upload once. Then you query at whatever depth you need. The same paper can produce a beginner's overview, a technical breakdown, and an expert-level critique.

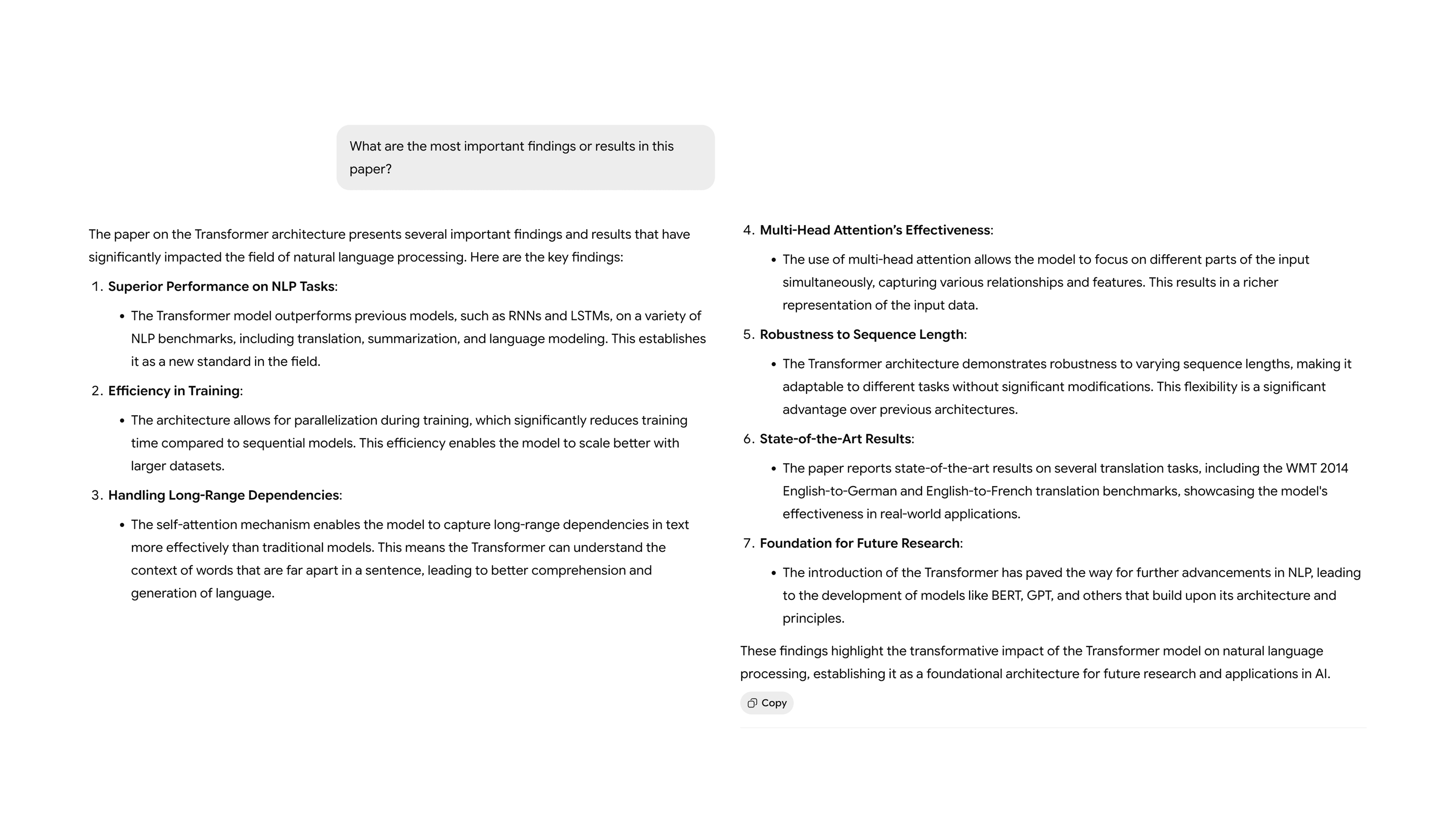

Sixth Question

At this point we moved past explanation and into evidence. What did the authors actually prove?

Prompt:

What are the most important findings or results in this paper?

What Ask AI returned:

Research papers live and die by their empirical results. The abstract can claim anything, but the experiments section is where claims get tested.

Ask AI correctly focused its response on the results section of the paper and surfaced the specific benchmarks and performance comparisons the authors used to support their argument. For anyone reviewing research quickly, this kind of targeted extraction is exactly what's needed.

Seventh Question

With the findings in hand, we zoomed out and asked about real-world impact.

Prompt:

What impact did this research paper have on the field of AI?

What Ask AI returned:

This question asks the tool to do something genuinely interesting: connect a specific document to a broader historical context.

The ability to make that connection, grounding the paper's claims in their downstream consequences, is what makes this kind of Ask AI interaction feel like a conversation with an expert rather than a query to a database.

Eighth Question

This is the section most summaries skip. We didn't.

Prompt:

What limitations or weaknesses does this paper have?

What Ask AI returned:

Critical analysis is a harder ask than explanation. It means moving from describing what the paper says to evaluating what it doesn't address, which requires reading against the grain.

That Ask AI can surface this kind of analysis from the document itself is what builds real trust in the tool. These are the paper's own acknowledged limitations, extracted and presented clearly.

Ninth Question

Sometimes you just need the fastest possible version of the truth.

Prompt:

Summarize the entire paper in 10 bullet points.

What Ask AI returned:

Speed matters. Not every use case requires a deep exploration. Sometimes a researcher needs a quick orientation before deciding whether a paper deserves a full read. Sometimes a professional needs to brief a team on findings without spending two hours in the source material.

Tenth Question

This was our favorite prompt of the experiment.

Prompt:

Explain this research paper at three levels: 1. For a beginner, 2. For a computer science student, 3. For an AI researcher.

What Ask AI returned:

This response reveals something important about how Ask AI tools work at their best: their ability to translate the same information for different audiences.

The shift between the three levels shouldn't just be a change in vocabulary. It should be a shift in the type of explanation:

- analogy for the beginner

- mechanism for the student

- theoretical framing for the researcher

When a response reflects that kind of calibrated shift, that's a sign of a genuinely capable Ask AI tool.

Eleventh Question

We closed with the simplest, hardest question of the experiment.

Prompt:

If someone only remembers one thing from this paper, what should it be?

What Ask AI returned:

This final response is a useful test of the tool's ability to distill. After 10 questions and a full exploration of a complex paper, the AI needed to make a judgment call: of everything discussed, what is the single most consequential idea?

Its answer should read as the natural conclusion of everything that came before.

What This Experiment Tells Us About Ask AI

We ran eleven prompts on a single research paper. In under thirty minutes, we moved from a basic overview to a full architectural breakdown, a critical analysis, a multi-level explanation, and a one-sentence distillation.

None of this required any prior knowledge of machine learning. It required knowing what questions to ask.

Some Ask AI platforms are built specifically for this kind of document interaction.

Tools like Chatly Ask AI allow users to upload research papers, reports, PDFs, and other documents, then ask follow-up questions in a conversational interface. The tool reads the document, understands its structure, and responds to questions in context, at whatever depth the user needs.

Try It Yourself

The prompts we used in this experiment aren't proprietary. They're a starting point.

Upload a document you've been putting off reading. Ask it what the core argument is. Ask it to explain the methodology. Ask what the authors got wrong. Ask it to summarize everything in concise points and then explain the most important one in depth.

The complexity of research papers might never change. But the way you can interact with them can.

Upload a document and see how AI answers your questions. Ask AI anything about your files, and start reading smarter.

