Xiaomi Open-Sources MiMo-V2.5-Pro — Frontier Coding Performance at a Fraction of the Price

Xiaomi just released and open-sourced MiMo-V2.5-Pro, its most capable model to date. A phone company, the same one that makes budget smartphones and electric scooters, has now shipped a trillion-parameter AI model that matches frontier models on coding benchmarks at a fraction of the cost of Claude Opus 4.7 and GPT-5.5. The weights are public. The API is live. And Xiaomi says the next generation is already in training.

What Xiaomi Just Launched

MiMo-V2.5-Pro is a 1.02 trillion-parameter Mixture-of-Experts model with 42 billion active parameters per forward pass. It runs on a hybrid-attention architecture with a 1 million token context window as standard. The big change from the previous generation is consolidation. V2-Pro handled text and code. V2-Omni handled multimodal tasks but with lower benchmark scores. V2.5-Pro collapses both into one model: image, audio, video, and text in a single architecture, with higher benchmark scores across the board.
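To put the Mixture-of-Experts figures in perspective, here is a minimal sketch that computes what share of the weights is active on each forward pass. The two parameter counts come from the article; the function and variable names are illustrative only.

```python
# Parameter counts quoted in the article; names are illustrative.
TOTAL_PARAMS = 1.02e12   # 1.02 trillion total parameters
ACTIVE_PARAMS = 42e9     # 42 billion active parameters per forward pass


def active_fraction(active: float, total: float) -> float:
    """Return the share of weights that participate in one forward pass."""
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active per pass: {fraction:.1%}")  # Active per pass: 4.1%
```

Roughly 4% of the model does the work for any given token, which is the usual reason a sparse model of this size can be served more cheaply than a dense one.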

Alongside V2.5-Pro, Xiaomi released MiMo-V2.5: a lighter, faster version at 100 to 150 tokens per second for everyday use. V2.5-Pro runs at 60 to 80 tokens per second for complex, long-horizon work.

What It Can Actually Do

The headline capability is long-horizon agentic work. In internal testing, V2.5-Pro completed tasks spanning more than 1,000 tool calls in a single session (web search, code execution, file I/O, and API calls) without losing track of the original objective.

Two real demos from the launch tell that story better than any benchmark:

First, V2.5-Pro built a working RISC-V compiler from scratch. It scaffolded the full pipeline first, then perfected the Koopa IR layer (110 out of 110 tests), then the RISC-V backend (103 out of 103), then tuned performance. The first compile passed 137 out of 233 tests, a 59% cold start, which suggests the architecture was sound before a single test ran. When a regression appeared at turn 512, the model diagnosed the failure, recovered, and continued. Total time: 11.5 hours of autonomous work.

Second, V2.5-Pro built a fully functional desktop video editor from a brief description. Multi-track timeline, clip trimming, cross-fades, audio mixing, and an export pipeline. The final build was 8,192 lines of code produced over 1,868 tool calls. No human wrote a line.

For everyday use, the multimodal capability is just as practical. Upload a photo of your fridge and ask it to suggest dinner. Drop in a video tutorial and get a step-by-step summary. Record a meeting and get action items pulled out automatically. All in one model, no switching between tools.

The Efficiency Story Is the Real News

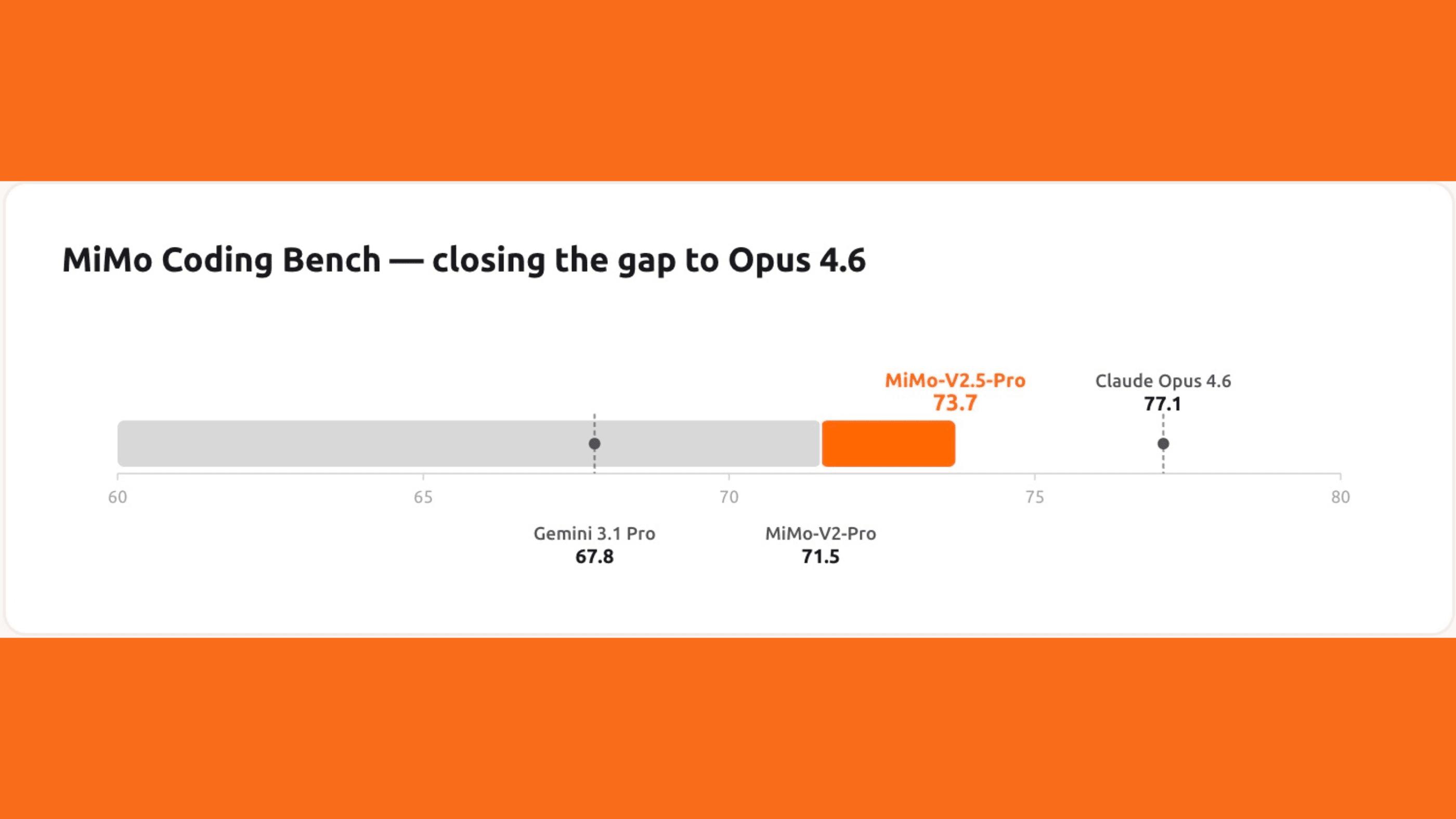

On ClawEval, V2.5-Pro scores 64% Pass^3 using around 70,000 tokens per trajectory. Claude Opus 4.7, Gemini 3.1 Pro, and GPT-5.4 all need 40 to 60% more tokens to reach comparable capability levels.
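As a quick sanity check on what "40 to 60% more tokens" means in absolute terms, the sketch below applies that overhead to the roughly 70,000-token figure quoted above. The helper name is hypothetical, and the result is simple arithmetic on the article's numbers, not a measured value.

```python
MIMO_TOKENS = 70_000  # approximate tokens per trajectory, from the article


def tokens_with_overhead(baseline: int, overhead: float) -> int:
    """Scale a baseline token count by a fractional overhead (0.40 = 40% more)."""
    return round(baseline * (1 + overhead))


low = tokens_with_overhead(MIMO_TOKENS, 0.40)
high = tokens_with_overhead(MIMO_TOKENS, 0.60)
print(f"Implied range for the other models: {low:,} to {high:,} tokens")
# Implied range for the other models: 98,000 to 112,000 tokens
```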

V2.5-Pro costs $1.00 per million input tokens and $3.00 per million output tokens. Claude Opus 4.7 costs $5 input and $25 output. GPT-5.5 costs $5 input and $30 output. MiMo-V2.5 (the base version) costs $0.40 input and $2.00 output. That is not a rounding difference. That is a structural cost advantage.
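To make the price gap concrete, here is a minimal sketch that applies the per-million-token prices listed above to one workload. The workload size (2 million input tokens, 500,000 output tokens) is an assumption chosen purely for illustration, and the sketch ignores differences in how many tokens each model actually uses.

```python
# (input, output) prices in USD per million tokens, as quoted in the article.
PRICES = {
    "MiMo-V2.5-Pro": (1.00, 3.00),
    "MiMo-V2.5": (0.40, 2.00),
    "Claude Opus 4.7": (5.00, 25.00),
    "GPT-5.5": (5.00, 30.00),
}

# Hypothetical workload, not taken from the article.
INPUT_TOKENS = 2_000_000
OUTPUT_TOKENS = 500_000


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a workload at the model's listed prices."""
    input_price, output_price = PRICES[model]
    return (input_tokens / 1e6) * input_price + (output_tokens / 1e6) * output_price


costs = {m: workload_cost(m, INPUT_TOKENS, OUTPUT_TOKENS) for m in PRICES}
baseline = costs["MiMo-V2.5-Pro"]
for model, cost in costs.items():
    print(f"{model:16s} ${cost:6.2f}  ({cost / baseline:.1f}x MiMo-V2.5-Pro)")
```

For this assumed mix, the same job costs $3.50 on V2.5-Pro versus $22.50 on Claude Opus 4.7 and $25.00 on GPT-5.5; a more output-heavy workload would widen the gap further, since the output prices differ the most.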

Xiaomi also removed the additional charge for using the full 1 million token context window. Every user who purchased a Token Plan before April 21, 2026 also had their credit balance reset on launch day.

Open-Source, API Live, AI Studio Incoming

MiMo-V2.5-Pro is fully open-sourced under a permissive license and is available on Hugging Face now. The API is live today on the MiMo API platform; developers can switch to it by setting the model name to mimo-v2.5-pro or mimo-v2.5. Both models are compatible with OpenAI-style API patterns and work with popular agentic scaffolds including Claude Code, OpenCode, and Kilo.
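Because the models are described as compatible with OpenAI-style API patterns, a request would presumably look like a standard chat-completions call with only the model name changed. The sketch below builds such a request without sending it. The base URL and API key are placeholders, not the real MiMo endpoint, and the `/chat/completions` path is assumed from the OpenAI convention; check the MiMo API platform documentation for the actual values.

```python
import json
import urllib.request

BASE_URL = "https://api.example.com/v1"  # placeholder, NOT the real MiMo endpoint
API_KEY = "YOUR_API_KEY"                 # placeholder credential


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request."""
    payload = {
        "model": model,  # "mimo-v2.5-pro" or "mimo-v2.5", per the article
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",  # path assumed from the OpenAI convention
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


request = build_chat_request("mimo-v2.5-pro", "Summarize this repository's build steps.")
print(request.get_method(), request.full_url)
print(json.loads(request.data)["model"])
```

Sending the request would be a matter of passing it to `urllib.request.urlopen` once a real endpoint and key are filled in. Switching between the two models is then a one-string change.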

AI Studio access is rolling out and was limited at launch. For most developers, the API is where this gets used anyway.

Xiaomi confirmed the next generation is already in training, focused on deeper reasoning, tighter tool integration, and richer real-world grounding.

The Bigger Picture

The MiMo division is led by Luo Fuli, a former core contributor at DeepSeek who worked on the R1 and V-series models. The release pace suggests Xiaomi is actively spending on the effort rather than holding its AI budget in reserve.

If you want to run your own tests while MiMo-V2.5-Pro is on your radar, Chatly gives you access to Claude Sonnet 4.6, Grok, and 30+ other models from one workspace — no separate accounts needed.
